Talking to yourself used to be a strictly private pastime. That’s no longer the case – researchers have eavesdropped on our internal monologue for the first time. The achievement is a step towards helping people who cannot physically speak communicate with the outside world.

“If you’re reading text in a newspaper or a book, you hear a voice in your own head,” says Brian Pasley at the University of California, Berkeley. “We’re trying to decode the brain activity related to that voice to create a medical prosthesis that can allow someone who is paralysed or locked in to speak.”

When you hear someone speak, sound waves activate sensory neurons in your inner ear. These neurons pass information to areas of the brain where different aspects of the sound are extracted and interpreted as words.

In a previous study, Pasley and his colleagues recorded brain activity in people who already had electrodes implanted in their brains to treat epilepsy, while they listened to speech. The team found that certain neurons in the brain’s temporal lobe were only active in response to certain aspects of sound, such as a specific frequency. One set of neurons might react only to sound waves with a frequency of 1000 hertz, for example, while another set responds only to those at 2000 hertz. Armed with this knowledge, the team built an algorithm that could decode the words heard based on neural activity alone (PLoS Biology, doi.org/fzv269).
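To make the idea of frequency-tuned neurons concrete, here is a toy sketch in Python. It is not the team’s algorithm: the Gaussian tuning curve, its bandwidth and the function names are all illustrative assumptions, and only the 1000 and 2000 hertz values come from the example above.

```python
import numpy as np

# The two example frequencies from the text; everything else is assumed.
PREFERRED_HZ = np.array([1000.0, 2000.0])

def tuned_response(freq_hz, preferred_hz, bandwidth_octaves=0.25):
    """Gaussian tuning curve on a log-frequency axis (an illustrative assumption)."""
    distance = np.log2(freq_hz / preferred_hz)
    return np.exp(-0.5 * (distance / bandwidth_octaves) ** 2)

def population_response(freq_hz):
    """Activity of each hypothetical neuron set for a pure tone at freq_hz."""
    return tuned_response(freq_hz, PREFERRED_HZ)

def decode_frequency(responses):
    """Guess the tone as the preferred frequency of the most active set."""
    return PREFERRED_HZ[np.argmax(responses)]
```

Calling `decode_frequency(population_response(1900.0))` picks the 2000 hertz set, because that set responds most strongly to a tone near its preferred frequency. Real decoding works on many electrodes and many frequency bands at once, but the principle of reading the sound back from which neurons are active is the same.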

The team hypothesised that hearing speech and thinking to oneself might spark some of the same neural signatures in the brain. They supposed that an algorithm trained to identify speech heard out loud might also be able to identify words that are thought.

Mind-reading

To test the idea, they recorded brain activity in another seven people undergoing epilepsy surgery, while they looked at a screen that displayed text from either the Gettysburg Address, John F. Kennedy’s inaugural address or the nursery rhyme Humpty Dumpty.

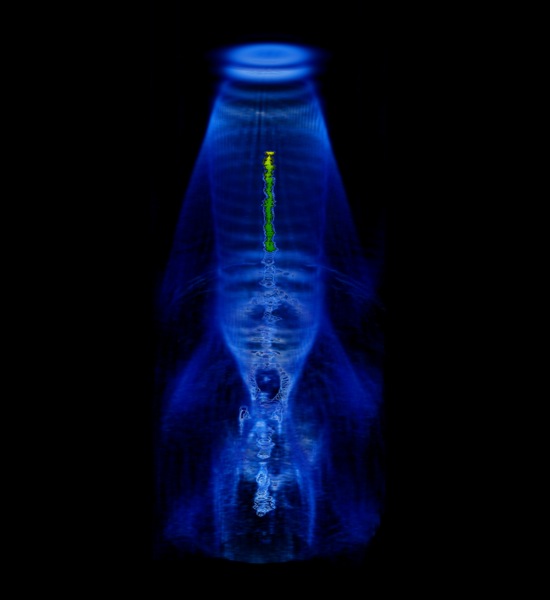

Each participant was asked to read the text aloud, read it silently in their head and then do nothing. While they read the text out loud, the team worked out which neurons were reacting to which aspects of speech and generated a personalised decoder to interpret this information. The decoder was used to create a spectrogram – a visual representation of the different frequencies of sound waves heard over time. As each frequency correlates to specific sounds in each word spoken, the spectrogram can be used to recreate what was said. They then applied the decoder to the brain activity that occurred while the participants read the passages silently to themselves.
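The train-on-spoken, apply-to-silent procedure can be sketched with simulated data. The Python below is a minimal illustration under stated assumptions, not the study’s method: the recordings are random numbers, the electrode signals are assumed to be a noisy linear mix of spectrogram bands, the silent condition is assumed to use a slightly different mix, and ridge regression stands in for whatever decoder the team actually used. All function names are hypothetical.

```python
import numpy as np

def simulate_recording(spectrogram, mixing, noise_sd, rng):
    """Hypothetical electrode signals: a linear mix of spectrogram bands plus noise."""
    shape = (spectrogram.shape[0], mixing.shape[1])
    return spectrogram @ mixing + noise_sd * rng.standard_normal(shape)

def fit_decoder(neural, spectrogram, ridge=1.0):
    """Ridge regression from electrode signals back to spectrogram bands."""
    gram = neural.T @ neural + ridge * np.eye(neural.shape[1])
    return np.linalg.solve(gram, neural.T @ spectrogram)

def run_demo(seed=0):
    rng = np.random.default_rng(seed)
    n_times, n_bands, n_electrodes = 500, 8, 32  # arbitrary toy sizes

    # Assumption: silent reading drives the electrodes through a similar,
    # but not identical, mapping to reading aloud.
    mixing_aloud = rng.standard_normal((n_bands, n_electrodes))
    mixing_silent = mixing_aloud + 0.2 * rng.standard_normal((n_bands, n_electrodes))

    spec_aloud = rng.random((n_times, n_bands))   # stand-in spectrograms
    spec_silent = rng.random((n_times, n_bands))

    neural_aloud = simulate_recording(spec_aloud, mixing_aloud, 0.1, rng)
    neural_silent = simulate_recording(spec_silent, mixing_silent, 0.1, rng)

    # Train on the "aloud" condition only, then apply to the "silent" one.
    decoder = fit_decoder(neural_aloud, spec_aloud)
    reconstructed = neural_silent @ decoder

    # Score the reconstruction by its correlation with the true spectrogram.
    return np.corrcoef(reconstructed.ravel(), spec_silent.ravel())[0, 1]
```

`run_demo()` returns the correlation between the reconstructed and the true “silent” spectrogram. In this toy the score stays high only because the two conditions were built to be similar; how far that holds for real imagined speech is exactly what the study set out to test.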

Although the neural activity from imagined and actual speech differed slightly, the decoder was able to reconstruct which words several of the volunteers were thinking, using neural activity alone (Frontiers in Neuroengineering, doi.org/whb).

The algorithm isn’t perfect, says Stephanie Martin, who worked on the study with Pasley. “We got significant results but it’s not good enough yet to build a device.”

In practice, if the decoder is to be used by people who are unable to speak, it would have to be trained on what they hear rather than their own speech. “We don’t think it would be an issue to train the decoder on heard speech because they share overlapping brain areas,” says Martin.

The team is now fine-tuning their algorithms by looking at the neural activity associated with speaking rate and different pronunciations of the same word, for example. “The bar is very high,” says Pasley. “It’s preliminary data, and we’re still working on making it better.”

The team has also turned its hand to predicting what songs a person is listening to by playing lots of Pink Floyd to volunteers, and then working out which neurons respond to which aspects of the music. “Sound is sound,” says Pasley. “It all helps us understand different aspects of how the brain processes it.”

“Ultimately, if we understand covert speech well enough, we’ll be able to create a medical prosthesis that could help someone who is paralysed, or locked in and can’t speak,” he says.

Several other researchers are also investigating ways to read the human mind. Some can tell what pictures a person is looking at, others have worked out which neural activity represents certain concepts in the brain, and one team has even produced crude reproductions of movie clips that someone is watching just by analysing their brain activity. So is it possible to put it all together to create one multisensory mind-reading device?

In theory, yes, says Martin, but it would be extraordinarily complicated. She says you would need a huge amount of data for each thing you are trying to predict. “It would be really interesting to look into. It would allow us to predict what people are doing or thinking,” she says. “But we need individual decoders that work really well before combining different senses.”