In a lab in Oxford University’s experimental psychology department, researcher Roi Cohen Kadosh is testing an intriguing treatment: He is sending low-dose electric current through the brains of adults and children as young as 8 to make them better at math.

A relatively new brain-stimulation technique called transcranial electrical stimulation may help people learn and improve their understanding of math concepts.

The electrodes are placed in a tightly fitted cap and worn around the head. The device, run off a 9-volt battery commonly used in smoke detectors, induces only a gentle current and can be targeted to specific areas of the brain or applied generally. The mild current reduces the risk of side effects, which has opened up the possibility of using it, even in individuals without a disorder, as a general cognitive enhancer. Scientists also are investigating its use to treat mood disorders and other conditions.

Dr. Cohen Kadosh’s pioneering work on learning enhancement and brain stimulation is one example of the long journey faced by scientists studying brain-stimulation and cognitive-stimulation techniques. Like other researchers in the community, he has dealt with public concerns about safety and side effects, plus skepticism from other scientists about whether these findings would hold in the wider population.

There are also ethical questions about the technique. If it truly works to enhance cognitive performance, should it be accessible to anyone who can afford to buy the device—which already is available for sale in the U.S.? Should parents be able to perform such stimulation on their kids without monitoring?

“It’s early days but that hasn’t stopped some companies from selling the device and marketing it as a learning tool,” Dr. Cohen Kadosh says. “Be very careful.”

The idea of using electric current to treat various diseases of the brain has a long and fraught history, perhaps most notably with what was called electroshock therapy, developed in 1938 to treat severe mental illness and often portrayed in movies such as “One Flew over the Cuckoo’s Nest” as a medieval treatment that rendered people zombielike.

Electroconvulsive therapy has improved dramatically over the years and is considered appropriate for use against types of major depression that don’t respond to other treatments, as well as other related, severe mood states.

A number of new brain-stimulation techniques have been developed, including deep brain stimulation, which acts like a pacemaker for the brain. With DBS, electrodes are implanted into the brain and, through a battery pack in the chest, stimulate neurons continuously. DBS devices have been approved by U.S. regulators to treat tremors in Parkinson’s disease and continue to be studied as possible treatments for chronic pain and obsessive-compulsive disorder.

Transcranial electrical stimulation, or tES, is one of the newest brain stimulation techniques. Unlike DBS, it is noninvasive.

If the technique continues to show promise, “this type of method may have a chance to be the new drug of the 21st century,” says Dr. Cohen Kadosh.

The 37-year-old father of two completed graduate school at Ben-Gurion University in Israel before coming to London to do postdoctoral work with Vincent Walsh at University College London. Now, sitting in a small, tidy office with a model brain on a shelf, the senior research fellow at Oxford speaks with cautious enthusiasm about brain stimulation and its potential to help children with math difficulties.

Up to 6% of the population is estimated to have a math-learning disability called developmental dyscalculia, similar to dyslexia but with numerals instead of letters. Many more people say they find math difficult. People with developmental dyscalculia also may have trouble with daily tasks, such as remembering phone numbers and understanding bills.

Whether transcranial electrical stimulation proves to be a useful cognitive enhancer remains to be seen. Dr. Cohen Kadosh first thought about the possibility as a university student in Israel, where he conducted an experiment using transcranial magnetic stimulation, a tool that employs magnetic coils to induce a more powerful electrical current.

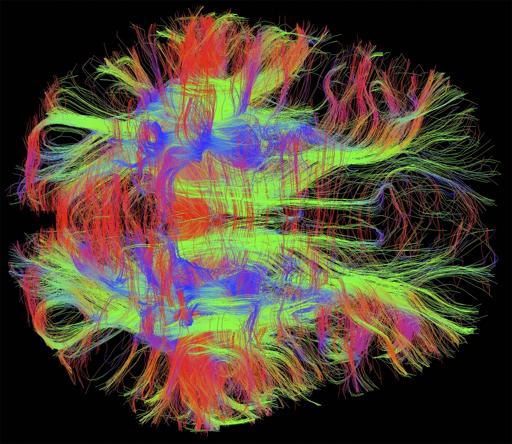

He found that he could temporarily turn off regions of the brain known to be important for cognitive skills. When the parietal lobe of the brain was stimulated using that technique, he found that the basic arithmetic skills of doctoral students who were normally very good with numbers were reduced to a level similar to those with developmental dyscalculia.

That led to his next inquiry: If current could turn off regions of the brain making people temporarily math-challenged, could a different type of stimulation improve math performance? Cognitive training helps to some extent in some individuals with math difficulties. Dr. Cohen Kadosh wondered if such learning could be improved if the brain was stimulated at the same time.

But transcranial magnetic stimulation wasn’t the right tool because the current induced was too strong. Dr. Cohen Kadosh puzzled over what type of stimulation would be appropriate until a colleague who had worked with researchers in Germany returned and told him about tES, at the time a new technique. Dr. Cohen Kadosh decided tES was the way to go.

His group has since conducted a series of studies suggesting that tES helps improve learning speed on various math tasks in adults who don’t have trouble with math. Now they’ve found preliminary evidence that it helps those who struggle with math, too.

Participants typically come for 30-minute stimulation-and-training sessions daily for a week. His team is now starting to study children between 8 and 10 who receive twice-weekly training and stimulation for a month. Studies of tES, including the ones conducted by Dr. Cohen Kadosh, tend to have small sample sizes of up to several dozen participants; replication of the findings by other researchers is important.

In a small, toasty room, participants, often Oxford students, sit in front of a computer screen and complete hundreds of trials in which they learn to associate numerical values with abstract, nonnumerical symbols, figuring out which symbols are “greater” than others, in the way that people learn to know that three is greater than two.

When neurons fire, they transfer information, which could facilitate learning. The tES technique appears to work by lowering the threshold neurons need to reach before they fire, studies have shown. In addition, the stimulation appears to cause changes in neurochemicals involved in learning and memory.

However, the results so far in the field appear to differ significantly by individual. Stimulating the wrong brain region, or applying a current that is too strong or lasts too long, has been known to inhibit learning. The young and elderly, for instance, respond exactly the opposite way to the same current in the same location, Dr. Cohen Kadosh says.

He and a colleague published a paper in January in the journal Frontiers in Human Neuroscience, in which they found that one individual with developmental dyscalculia improved her performance significantly while the other study subject didn’t.

What is clear is that anyone trying the treatment would need to train as well as to stimulate the brain. Otherwise “it’s like taking steroids but sitting on a couch,” says Dr. Cohen Kadosh.

Dr. Cohen Kadosh and Beatrix Krause, a graduate student in the lab, have been examining individual differences in response. Whether a room is dark or well-lighted, whether a person smokes and even where women are in their menstrual cycle can affect the brain’s response to electrical stimulation, studies have found.

Results from his lab and others have shown that even if stimulation is stopped, those who benefited are going to maintain a higher performance level than those who weren’t stimulated, up to a year afterward. If there isn’t any follow-up training, everyone’s performance declines over time, but the stimulated group still performs better than the non-stimulated group. It remains to be seen whether reintroducing stimulation would then improve learning again, Dr. Cohen Kadosh says.

http://online.wsj.com/news/articles/SB10001424052702303650204579374951187246122?mod=WSJ_article_EditorsPicks&mg=reno64-wsj&url=http%3A%2F%2Fonline.wsj.com%2Farticle%2FSB10001424052702303650204579374951187246122.html%3Fmod%3DWSJ_article_EditorsPicks