A terrorist attack might seem like one of the least predictable of events. Terrorists work in small, isolated cells, often using simple weapons and striking at random. Indeed, the element of unpredictability is part of what makes terrorists so scary – you never know when or where they will strike.

However, new research shows that terror attacks may not be as unpredictable as people think. A paper by Stephen Tench and Hannah Fry, mathematicians at University College London, and Paul Gill, a security and crime expert, shows that terrorist attacks often follow a general pattern that can be modeled and predicted using math.

Predicting human behavior is obviously difficult, and one can't always extrapolate from past events to predict the future. As one academic discussion of the topic points out, if you had made a forecast in 1864 of how many presidents would be assassinated in office based on historical data, the expected number would have been zero. But over the next 40 years, three U.S. presidents (Abraham Lincoln, James Garfield and William McKinley) were killed in office.

Yet when you put individual human acts together and look at the aggregate, they often do follow a pattern that can be represented with math. As Sir Arthur Conan Doyle writes in “The Sign of Four,” the second Sherlock Holmes novel, “. . . while the individual man is an insoluble puzzle, in the aggregate he becomes a mathematical certainty.”

The Hawkes process

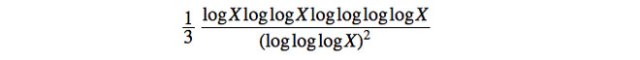

The mathematical model that Tench, Fry and Gill use to study terrorist attacks is called a “Hawkes process.” The basic idea behind Hawkes processes is that some events don't occur independently: when one event happens, other events of the same kind become more likely shortly afterward. As time passes, however, that extra probability gradually fades and the rate of events returns to its normal, baseline level.
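A common textbook way to write this idea down is a Hawkes process with an exponentially decaying kernel, where the rate of events at time t is λ(t) = μ + Σ α·e^(−β(t − t_i)), summed over all earlier events t_i. This is offered as an illustrative sketch, not as the exact specification used in the paper: the function name `hawkes_intensity`, the parameters `mu` (baseline rate), `alpha` (size of each kick) and `beta` (decay rate), and the values below are all assumptions chosen for illustration.

```python
import math
from typing import Sequence


def hawkes_intensity(t: float, events: Sequence[float],
                     mu: float, alpha: float, beta: float) -> float:
    """Rate of events at time t for a Hawkes process with an exponential kernel.

    lambda(t) = mu + sum over past events t_i < t of alpha * exp(-beta * (t - t_i))

    mu:    baseline rate when nothing has happened recently (hypothetical)
    alpha: jump in the rate caused by each event (hypothetical)
    beta:  how quickly that extra rate decays back toward mu (hypothetical)
    """
    excitation = sum(alpha * math.exp(-beta * (t - ti)) for ti in events if ti < t)
    return mu + excitation


# Hypothetical parameters: a single event at time 2.
mu, alpha, beta = 0.1, 0.5, 1.0
events = [2.0]

for t in [1.0, 2.5, 4.0, 8.0]:
    print(f"t={t}: rate={hawkes_intensity(t, events, mu, alpha, beta):.3f}")
# prints rates of 0.100, 0.403, 0.168 and 0.101
```

Before the event the rate sits at the baseline of 0.1; just after it, the rate jumps, and it then decays back toward 0.1, which is the "fades away and returns to normal" behavior described above.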

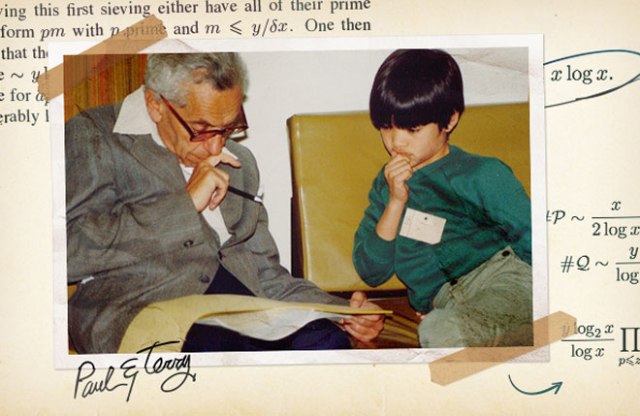

A mathematician named Alan Hawkes first developed the idea while searching for a mathematical model that would describe the patterns of earthquakes. Earthquake tremors aren’t independent events, either – after an earthquake hits, the area often experiences aftershocks. So Hawkes designed his equations to reflect the greater probability of experiencing a subsequent tremor shortly after the first one.

Since Hawkes developed the model in the 1970s, similar equations have been used to describe all kinds of sequences of related events, including how epidemics travel, how electrical impulses move through the brain, and how emails move through an organization. Recently, Hawkes processes have also been used to predict the locations and timings of burglaries and gang-related violence.

Why gang-related violence follows a Hawkes process is fairly easy to understand. A murder or shooting by one gang often provokes retaliation by another gang. So following the first incident, the probability of a second incident typically goes up.

It's a little harder to understand why burglaries follow a Hawkes process, that is, why one burglary would make another more likely soon afterward. But having your house burglarized does increase the chances that thieves will visit again. The burglars now know where your valuables are and how your house and neighborhood are laid out, which also makes your neighbors more likely to be burglarized in the near future.

Hawkes processes describe these patterns in crime well enough that some security companies and law enforcement agencies have started to use them in their work. As Fry says, companies such as PredPol use data on past crimes to model geographic “hotspots” that can be policed more heavily or made the focus of specific crime-prevention policies.

Predicting terrorist attacks

In their paper, Tench, Fry and Gill apply this same model to terrorism in Northern Ireland. The paper looks at more than 5,000 explosions of improvised explosive devices (IEDs) around Northern Ireland between 1970 and 1998, during the particularly violent period known as “the Troubles,” when republican paramilitary groups, drawing their support mainly from Northern Ireland's Catholic minority, fought to end British rule and unite Northern Ireland with the Republic of Ireland. The researchers used the model to analyze when and where one of those groups, the Provisional Irish Republican Army (IRA), launched its terror attacks, how British security forces responded, and how effective those responses were.

IED explosions follow this kind of pattern: after one attack, the next tends to come sooner than it otherwise would. There is an ordinary, baseline chance of an attack, but each attack adds a “little kick,” as Fry puts it, that raises the probability of another attack and then fades away over time. Mathematicians can capture and model these patterns using the equations of a Hawkes process. The researchers say the math can reveal previously unnoticed patterns in past terrorist activity, or be used to test competing theories about those patterns. It can also produce predictive models, which estimate the probability of future attacks at different times and in different areas.
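To see the clustering that this "kick that fades" produces, one standard approach is to simulate a Hawkes process with Ogata's thinning algorithm: draw candidate event times at an upper-bound rate, then keep each candidate with probability equal to the true rate divided by that bound. The sketch below assumes an exponentially decaying kick; it is a generic textbook simulator, not the model fitted in the paper, and the function name `simulate_hawkes` and all parameter values are hypothetical.

```python
import math
import random
from typing import List, Optional


def simulate_hawkes(mu: float, alpha: float, beta: float,
                    horizon: float, seed: Optional[int] = None) -> List[float]:
    """Simulate event times on [0, horizon) for an exponential-kernel Hawkes process.

    Uses Ogata's thinning algorithm. Assumes mu > 0, and alpha / beta < 1 so the
    process is stable rather than exploding.
    """
    rng = random.Random(seed)
    t = 0.0
    excitation = 0.0  # sum of alpha * exp(-beta * (t - t_i)) over accepted events, at time t
    events: List[float] = []
    while True:
        # Between events the rate only decays, so the rate right now is an upper bound.
        lam_bar = mu + excitation
        wait = rng.expovariate(lam_bar)  # candidate waiting time at the bounding rate
        if t + wait >= horizon:
            break
        t += wait
        excitation *= math.exp(-beta * wait)  # let earlier kicks fade to time t
        lam_t = mu + excitation
        # Keep the candidate with probability lam_t / lam_bar ("thinning").
        if rng.random() * lam_bar <= lam_t:
            events.append(t)
            excitation += alpha  # each accepted event adds its own kick
    return events


# Hypothetical parameters, time in days: a baseline of 0.2 events/day; each event
# raises the rate by 0.6/day, and that extra rate decays at 1.0/day.
events = simulate_hawkes(mu=0.2, alpha=0.6, beta=1.0, horizon=100.0, seed=42)
gaps = [later - earlier for earlier, later in zip(events, events[1:])]
print(f"{len(events)} events; shortest gaps: {sorted(gaps)[:5]}")
```

Runs like this tend to show bursts of closely spaced events separated by quiet stretches. Setting `alpha=0` removes the kick entirely and reduces the sketch to an ordinary Poisson process with constant rate `mu`, which makes a useful baseline for comparison.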

The researchers say their analysis reveals distinct phases in the conflict between the IRA and the authorities. For example, bombings slowed as British security forces infiltrated the IRA and as more of its members were imprisoned, and they increased when the group launched a renewed campaign of violence or tried to use attacks as a bargaining tool in negotiations.

One of the most striking lessons of the research concerns the effects of counterterrorism operations. The paper finds evidence that the deaths of Catholic civilians, whom the IRA claimed to represent, prompted the group to step up its IED attacks in retaliation.

That finding echoes previous research on counterinsurgency operations by the United States and its coalition partners in Iraq. That study found that operations carried out indiscriminately, in other words ones that hurt or killed people who were not necessarily insurgents, led to a backlash of violence. In contrast, operations carried out in a discriminating, targeted way were followed by lower levels of violence than before.

The paper looks at past events, but Tench says the same technique can be used to project future trends. After a terrorist attack, and especially after civilians are killed, the likelihood of subsequent “aftershocks” rises for a period of time, so authorities need to intervene quickly to head off a long stretch of violence. They must also make sure their counterterrorism operations target actual insurgents, to avoid provoking the destructive wave of violence that indiscriminate counterterrorism has been shown to cause.
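How long that elevated-risk window lasts, and how many aftershocks to expect, can be read off a Hawkes model's parameters. The sketch below again assumes an exponentially decaying kick of size α that decays at rate β; the function name `aftershock_summary` and the parameter values are hypothetical, not estimates from the paper. Under that assumption, the extra risk halves every ln(2)/β time units, each event directly triggers α/β further events on average (the "branching ratio" n), and counting aftershocks of aftershocks gives n + n² + n³ + … = n/(1 − n).

```python
import math


def aftershock_summary(alpha: float, beta: float) -> dict:
    """Summarize the 'aftershock' window after one event in an exponential-kernel Hawkes process.

    Assumes the extra rate at time s after an event is alpha * exp(-beta * s).
    """
    if alpha < 0 or beta <= 0:
        raise ValueError("need alpha >= 0 and beta > 0")
    n = alpha / beta  # branching ratio: expected events directly triggered by one event
    if n >= 1:
        raise ValueError("alpha / beta must be < 1, otherwise cascades never die out")
    return {
        "half_life": math.log(2) / beta,          # time for the extra risk to halve
        "time_to_5_percent": math.log(20) / beta,  # time for it to fall to 5% of its peak
        "direct_aftershocks": n,
        "total_aftershocks": n / (1 - n),          # direct plus all indirect aftershocks
    }


# Hypothetical parameters, with time measured in days.
print(aftershock_summary(alpha=0.6, beta=1.0))
```

With these hypothetical values, the extra risk halves in about 0.69 days and falls to 5% of its initial size in about 3 days, and each attack is followed on average by 0.6 direct aftershocks and 1.5 in total. A faster decay (larger β) shortens the window in which intervention matters most.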

Tench says he hopes counterterrorism officials will start using the technique as part of their portfolio. “This application of the Hawkes process is a relatively new idea, so I imagine it might take some time to filter through,” he says.