Hundreds of miles above Earth, orbiting satellites are becoming a bold new weapon in the age-old fight against drought, disease and death.

By Ariel Sabar

SMITHSONIAN MAGAZINE

In early October, after the main rainy season, Ethiopia’s central Rift Valley is a study in green. Fields of wheat and barley lie like shimmering quilts over the highland ridges. Across the valley floor below, beneath low-flying clouds, farmers wade through fields of African cereal, plucking weeds and primping the land for harvest.

It is hard to look at such lushness and equate Ethiopia with famine. The f-word, as some people call it, as though the mere mention were a curse, has haunted the country since hundreds of thousands of Ethiopians died three decades ago in the crisis that inspired Live Aid, “We Are the World” and other spectacles of Western charity. The word was on no one’s lips this year. Almost as soon as I’d landed in Addis Ababa, people told me that 2014 had been a relatively good year for Ethiopia’s 70 million subsistence farmers.

But Gabriel Senay wasn’t so sure. A scientist with the U.S. Geological Survey, he’d designed a system that uses NASA satellites to detect unusual spikes in land temperature. These anomalies can signal crop failure, and Senay’s algorithms were now plotting these hot zones along a strip of the Rift Valley normally thought of as a breadbasket. Was something amiss? Something aid workers hadn’t noticed?

Senay had come to Ethiopia to find out—to “ground-truth” his years of painstaking research. At the top of a long list of people eager for results were officials at the U.S. Agency for International Development, who had made a substantial investment in his work. The United States is the largest donor of food aid to the world, splitting $1.5 billion to $2.5 billion a year among some 60 countries in Africa, Asia and Latin America. Ethiopia usually gets the biggest slice, but it’s a large pie, and to make sure aid gets to the neediest, USAID spends $25 million a year on scientific forecasts of where hunger will strike next.

Senay’s innovations, some officials felt, had the potential to take those forecasts to a new level, by spotting the faintest first footsteps of famine almost anywhere in the world. And the earlier officials heard those footsteps, the faster they would be able to mobilize forces against one of humanity’s oldest and cruelest scourges.

In the paved and wired developed world, it’s hard to imagine a food emergency staying secret for long. But in countries with bad roads, spotty phone service and shaky political regimes, isolated food shortfalls can metastasize into full-blown humanitarian crises before the world notices. That was in many ways the case in Ethiopia in 1984, when the failure of rains in the northern highlands was aggravated by a guerrilla war along what is now the Eritrean border.

Senay, who grew up in Ethiopian farm country, the youngest of 11 children, was then an undergraduate at the country’s leading agricultural college. But the famine had felt remote even to him. The victims were hundreds of miles to the north, and there was little talk of it on campus. Students could eat injera—the sour pancake that is a staple of Ethiopian meals—just once a week, but Senay recalls no other hardships. His parents were similarly spared; the drought had somehow skipped over their rainy plateau.

That you could live in one part of a country and be oblivious to mass starvation in another: Senay would think about that a lot later.

The Great Rift Valley splits Ethiopia into nearly equal parts, running in a ragged diagonal from the wastelands of the Danakil Depression in the northeast to the crocodile haunts of Lake Turkana in the southwest. About midway along its length, a few hours’ drive south of Addis, it bisects a verdant highland of cereal fields.

Senay, who is 49, sat in the front seat of our Land Cruiser, wearing a baseball cap lettered, in cursive, “Life is Good.” Behind us were two other vehicles, shuttling half a dozen American and Ethiopian scientists excited enough by Senay’s research to want to see its potential firsthand. We caravanned through the gritty city of Adama and over the Awash River, weaving through cavalcades of donkeys and sheep.

Up along the green slopes of the Arsi highlands, Senay looked over his strangely hued maps. The pages were stippled with red and orange dots, each a square kilometer, where satellites 438 miles overhead had sensed a kind of fever on the land.

From the back seat, Curt Reynolds, a burly crop analyst with the U.S. Department of Agriculture in Washington, who advises USAID (and is not known to sugar-coat his opinions), asked whether recent rains had cooled those fevers, making some of Senay’s assessments moot. “There are still pixels that are really hurting,” Senay insisted.

We turned off the main road, jouncing along a muddy track to a local agricultural bureau. Huseen Muhammad Galatoo, a grave-looking man who was the bureau’s lead agronomist, led us into a musty office. A faded poster on one wall said, “Coffee: Ethiopia’s Gift to the World.”

Galatoo told us that several Arsi districts were facing their worst year in decades. A failure of the spring belg rains and a late start to the summer kiremt rains had left some 76,000 animals dead and 271,000 people—10 percent of the local population—in need of emergency food aid.

“Previously, the livestock used to survive somehow,” Galatoo said, through an interpreter. “But now there is literally nothing on the ground.”

In the face of such doleful news, Senay wasn’t in the mood for self-congratulation. But the truth was, he’d nailed it. He’d shown that satellites could spot crop failure—and its effects on livestock and people—as never before, at unprecedented scale and sensitivity. “The [current] early warning system didn’t fully capture this,” Alemu Asfaw, an Ethiopian economist who helps USAID forecast food crises, said in the car afterwards, shaking his head. “There had been reports of erratic rainfall. But no one expected it to be that bad.” No one, that is, but Senay, whose work, Reynolds said, could be “a game changer for us.”

Satellites have come a long way since Russia’s Sputnik 1—a beachball-size sphere with four chopstick-like radio antennas—entered orbit, and history, in 1957. Today, some 1,200 artificial satellites orbit Earth. Most are still in traditional lines of work: bouncing phone calls and television signals across the globe, beaming GPS coordinates, monitoring weather, spying. A smaller number watch over the planet’s wide-angle afflictions, like deforestation, melting glaciers and urban sprawl. But only recently have scientists sicced satellites on harder-to-detect, but no less perilous threats to people’s basic needs and rights.

Senay is on the leading edge of this effort, focusing on hunger and disease—ills whose solutions once seemed resolutely earthbound. Nomads searching for water, villagers battling malaria, farmers aching for rain: When they look to the heavens for help, Senay wants satellites looking back.

He was born in the northwest Ethiopian town of Dangila, in a house without electricity or plumbing. To cross the local river with his family’s 30 cattle, little Gabriel clung to the tail of an ox, which towed him to the grazing lands on the other side. High marks in school—and a father who demanded achievement, who called Gabriel “doctor” while the boy was still in diapers—propelled him to Ethiopia’s Haramaya University and then to the West, for graduate studies in hydrology and agricultural engineering.

Not long after earning a PhD at Ohio State University, he landed a job that felt more like a mission—turning American satellites into defenders of Africa’s downtrodden. His office, in the South Dakota countryside 18 miles northeast of Sioux Falls, is home to the Earth Resources Observation and Science Center, a low building, ringed by rows of tinted windows, looking a bit like a spaceship that emergency-landed in some hapless farmer’s corn and soybean spread. Run by the U.S. Geological Survey, it’s where the planet gets a daily diagnostic exam. Giant antennas and parabolic dishes ingest thousands of satellite images a day, keeping an eye on the pulse of the planet’s waters, the pigment of its land and the musculature of its mountains.

Senay was soon living the American dream, with a wife, two kids and minivan in a Midwestern suburb. But satellites were his bridge home, closing the distance between here and there, now and then. “I came to know more about Ethiopia in South Dakota when looking at it from satellites than I did growing up,” he told me. As torrents of data flow through his calamity-spotting algorithms, he says, “I imagine the poor farmer in Ethiopia. I imagine a guy struggling to farm who never got a chance to get educated, and that kind of gives me energy and some bravery.”

His goal from the outset was to turn satellites into high-tech divining rods, capable of finding water—and mapping its effects—across Africa. Among scientists who study water’s whereabouts, Senay became a kind of rock star. Though nominally a bureaucrat in a remote outpost of a federal agency, he published in academic journals, taught graduate-level university courses and gave talks in places as far-flung as Jordan and Sri Lanka. Before long, people were calling from all over, wanting his algorithms for their own problems. Could he look at whether irrigation in Afghanistan’s river basins was returning to normal after years of drought and war? What about worrisome levels of groundwater extraction in America’s Pacific Northwest? Was he free for the National Water Census?

He’d started small. A man he met on a trip to Ethiopia told him that 5,200 people had died of malaria in three months in a single district in the Amhara region. Senay wondered if satellites could help. He requested malaria case data from clinics across Amhara and then compared them with satellite readings of rainfall, land greenness and ground moisture—all factors in where malaria-carrying mosquitoes breed. And there it was, almost like magic: With satellites, he could predict the location, timing and severity of malaria outbreaks up to three months in advance. “For prevention, early warning is very important for us,” Abere Mihretie, who leads an anti-malaria group in Amhara, told me. With $2.8 million from the National Institutes of Health, Senay and Michael Wimberly, an ecologist at South Dakota State University, built a website that gives Amhara officials enough early warning to order bed nets and medicines and to take preventive steps such as draining standing water and counseling villagers. Mihretie expects the system—which will go live this year—to be a lifesaver, reducing malaria cases by 50 to 70 percent.

Senay had his next epiphany on a work trip to Tanzania in 2005. By the side of the road one day, he noticed cattle crowding a badly degraded water hole. It stirred memories of childhood, when he’d watched cows scour riverbeds for trickles of water. The weakest got stuck in the mud, and Senay and his friends would pull them out. “These were the cows we grew up with, who gave us milk,” he says. “You felt sorry.”

Senay geo-tagged the hole in Tanzania, and began reading about violent conflict among nomadic clans over access to water. One reason for the conflicts, he learned, was that nomads were often unaware of other, nearby holes that weren’t as heavily used and perhaps just as full of water.

Back in South Dakota, Senay found he could see, via satellite, the particular Tanzania hole he’d visited. What’s more, it gave off a distinct “spectral signature,” or light pattern, which he could then use to identify other water holes clear across the African Sahel, from Somalia to Mali. With information about topography, rainfall estimates, temperature, wind speed and humidity, Senay was then able to gauge how full each hole was.

Senay and Jay Angerer, a rangeland ecologist at Texas A&M University, soon won a $1 million grant from NASA to launch a monitoring system. Hosted on a U.S. Geological Survey website, it tracks some 230 water holes across Africa’s Sahel, giving each a daily rating of “good,” “watch,” “alert” or “near dry.” To get word to herders, the system relies on people like Sintayehu Alemayehu, of the aid group Mercy Corps. Alemayehu and his staff meet with nomadic clans at village markets to relay a pair of satellite forecasts—one for water-hole levels, another for pasture conditions. But such liaisons may soon go the way of the switchboard operator. Angerer is seeking funding for a mobile app that would draw on a phone’s GPS to lead herders to water. “Sort of like Yelp,” he told me.

Senay was becoming a savant of the data workaround, of the idea that good enough is sometimes better than perfect. Doppler radar, weather balloons, dense grids of electronic rain gauges simply don’t exist in much of the developing world. Like some MacGyver of the outback, Senay was proving an “exceptionally good detective” in finding serviceable replacements for laboratory-grade data, says Andrew Ward, a prominent hydrologist who was Senay’s dissertation adviser at Ohio State. In remote parts of the world, Ward says, even good-enough data can go a long way toward “helping solve big important issues.”

And no issue was more important to Senay than his homeland’s precarious food supply.

Ethiopia’s poverty rate is falling, and a new generation of leaders has built effective programs to feed the hungry in lean years. But other things have been slower to change: 85 percent of Ethiopians work the land as farmers or herders, most at the subsistence level, and less than 1 percent of agricultural land is irrigated. That leaves Ethiopia, the second most populous country in Africa, at the mercy of the region’s notoriously fickle rains. No country receives more global food aid.

Famine appears in Ethiopia’s historical record as early as the ninth century and recurs with an almost tidal regularity. The 1973 famine, which killed tens of thousands, led to the overthrow of Emperor Haile Selassie and the rise of an insurgent Marxist government known as the Derg. The 1984 famine helped topple the Derg.

Famine often has multiple causes: drought, pestilence, economies overdependent on agriculture, antiquated farming methods, geographic isolation, political repression, war. But there was a growing sense in the latter decades of the 20th century that science could play a role in anticipating—and heading off—its worst iterations. The United Nations started a basic early-warning program in the mid-1970s, but only after the 1980s Ethiopian crisis was a more rigorously scientific program born: USAID’s Famine Early Warning Systems Network (FEWS NET).

Previously, “a lot of our information used to be from Catholic priests in, like, some little mission in the middle of Mali, and they’d say, ‘My people are starving,’ and you’d kind of go, ‘Based on what?’” Gary Eilerts, a veteran FEWS NET official, told me. Missionaries and local charities could glimpse conditions outside their windows, but had little grasp of the broader severity and scope of suffering. Local political leaders had a clearer picture, but weren’t always keen to share it with the West, and when they did, the West didn’t always trust them.

The United States needed hard, objective data, and FEWS NET was tasked with gathering it. To complement their analyses of food prices and economic trends, FEWS NET scientists did use satellites, to estimate rainfall and monitor land greenness. But then they heard about a guy in small-town South Dakota who looked like he was going one better.

Senay knew that one measure of crop health was the amount of water a field gave off: its rate of “evapotranspiration.” When plants are thriving, water in the soil flows up roots and stems into leaves. Plants convert some of the water to oxygen, in photosynthesis. The rest is “transpired,” or vented, through pores called stomata. In other words, when fields are moist and crops are thriving, they sweat.

Satellites might not be able to see the land sweat, but Senay wondered if they could feel it sweat. That’s because when water in soil or plants evaporates, it cools the land. Conversely, when a lush field takes a tumble—whether from drought, pests or neglect—evapotranspiration declines and the land heats. Once soil dries to the point of hardening and cracking, its temperature is as much as 40 degrees hotter than it was as a well-watered field.

NASA’s Aqua and Terra satellites carry infrared sensors that log the temperature of every square kilometer of earth every day. Because those sensors have been active for more than a decade, Senay realized that a well-crafted algorithm could flag plots of land that got suddenly hotter than their historical norm. In farming regions, these hotspots could be bellwethers of trouble for the food supply.
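To make the idea of flagging land that runs "hotter than its historical norm" concrete, here is a minimal sketch. The article does not say how Senay's algorithm defines the norm, so the z-score test below is a stand-in technique, and the function name `is_hotspot`, the `z_threshold` default and the sample readings are all hypothetical.

```python
from statistics import mean, stdev


def is_hotspot(history, today, z_threshold=2.0):
    """Flag one pixel if today's land temperature is far above its own past.

    history: past temperature readings for the same pixel and time of year.
    today: the current reading for that pixel.
    z_threshold: assumed cutoff, in standard deviations (not from the article).
    """
    if len(history) < 2:
        raise ValueError("need at least two historical readings")
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # A perfectly flat history: any reading above it counts as unusual.
        return today > baseline
    return (today - baseline) / spread > z_threshold


# Made-up readings for a single square-kilometer pixel.
past_readings = [30.0, 31.0, 29.0, 30.0, 30.0]
print(is_hotspot(past_readings, 36.0))  # well above the usual range
print(is_hotspot(past_readings, 30.5))  # within the usual range
```

Comparing each pixel only with its own history means a naturally warm lowland plot and a cool highland plot are judged by their own standards rather than by one shared cutoff.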

Scientists had studied evapotranspiration with satellites before, but their methods were expensive and time-consuming: Highly paid engineers had to manually interpret each snapshot of land. That’s fine if you’re interested in one tract of land at one point in time.

But what if you wanted every stitch of farmland on earth every day? Senay thought he could get there with a few simplifying assumptions. He knew that when a field was perfectly healthy—and thus at peak sweat—land temperature was a near match for air temperature. Senay also knew that a maximally sick field was a fixed number of degrees hotter than a maximally healthy one, after tweaking for terrain type.

So if he could get air temperature for each square kilometer of earth, he’d know the coldest the land there could be at that time. By adding that fixed number, he’d also know the hottest it could be. All he needed now was NASA’s actual reading of land temperature, so he could see where it fell within those theoretical extremes. That ratio told you how sweaty a field was—and thus how healthy.
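The arithmetic of that ratio can be sketched in a few lines. This is an illustration of the reasoning as described here, not Senay's actual code: the name `sweat_fraction`, the 20-degree `hot_offset` and the sample temperatures are assumptions, since the article gives no figure for the fixed number, and clamping the result to the range 0 to 1 is a choice made for this sketch.

```python
def sweat_fraction(land_temp, air_temp, hot_offset):
    """Return where land_temp sits between the cold and hot limits.

    1.0 means the land is as cool as the air (a field at peak sweat);
    0.0 means it has reached the hot limit (a field giving off no water).
    hot_offset is the fixed gap between the two limits; its value here
    is hypothetical and would depend on terrain type.
    """
    if hot_offset <= 0:
        raise ValueError("hot_offset must be positive")
    cold_limit = air_temp               # coldest the land could be
    hot_limit = air_temp + hot_offset   # hottest the land could be
    fraction = (hot_limit - land_temp) / (hot_limit - cold_limit)
    # Readings outside the theoretical extremes are clamped (an assumption).
    return max(0.0, min(1.0, fraction))


# Air at 25 degrees, assumed 20-degree gap, satellite reads the land at 30.
print(sweat_fraction(30.0, 25.0, 20.0))  # 0.75
```

With those made-up numbers the land could range from 25 to 45 degrees, and a reading of 30 sits three-quarters of the way toward the cool, healthy end.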

Senay found good air temperature datasets at the National Oceanic and Atmospheric Administration and the University of California, Berkeley. By braiding the data from NASA, NOAA and Berkeley, he could get a computer to make rapid, automated diagnoses of crop conditions anywhere in the world. “It’s data integration at the highest level,” he told me one night, in the lobby of our Addis hotel.

The results might be slightly less precise than the manual method, which factors in extra variables. But the upsides weren’t lost on his bosses: how much of the world you saw, how fast you saw it, how little it cost. “Some more academically oriented people reach an impasse: ‘Well, I don’t know that, I can’t assume that, therefore I’ll stop,’” says James Verdin, his project leader at USGS, who was with us in the Rift Valley. “Whereas Gabriel recognizes that the need for an answer is so strong that you need to make your best judgment on what to assume and proceed.” FEWS NET had just one other remote test of crop health: satellites that gauge land greenness. The trouble is that stressed crops can stay green for weeks before shading brown. Their temperature, on the other hand, ticks up almost immediately. And unlike the green test, which helps only once the growing season is underway, Senay’s could read soil moisture at sowing time.

The Simplified Surface Energy Balance model, as it is called, could thus give officials and aid groups several weeks’ more lead time to act before families would go hungry and livestock would begin to die. Scientists at FEWS NET’s Addis office email their analyses to 320 people across Ethiopia, including government officials, aid workers and university professors.

Biratu Yigezu, acting director general of Ethiopia’s Central Statistical Agency, told me that FEWS NET fills key blanks between the country’s annual door-to-door surveys of farmers. “If there’s a failure during planting stage, or if there’s a problem in the flowering stage, the satellites help, because they’re real time.”

One afternoon in the Rift Valley, we pulled the Land Cruisers alongside fields of slouching corn to speak with a farmer. Tegenu Tolla, who was 35, wore threadbare dress pants with holes at the knees and a soccer jersey bearing the logo of the insurance giant AIG. He lives with his wife and three children on whatever they can grow on their two-and-a-half-acre plot.

This year was a bust, Tolla told Senay, who chats with farmers in his native Amharic. “The rains were not there.” So Tolla waited until August, when some rain finally came, and sowed a short-maturing corn with miserly yields. “We will not even be able to get our seeds back,” Tolla said. His cattle had died, and to feed his family, Tolla had been traveling to Adama for day work on construction sites.

We turned onto a lumpy dirt road, into a field where many of the teff stalks had grown just one head instead of the usual six. (Teff is the fine grain used to make injera.) Gazing at the dusty, hard-packed soil, Senay had one word: “desertification.”

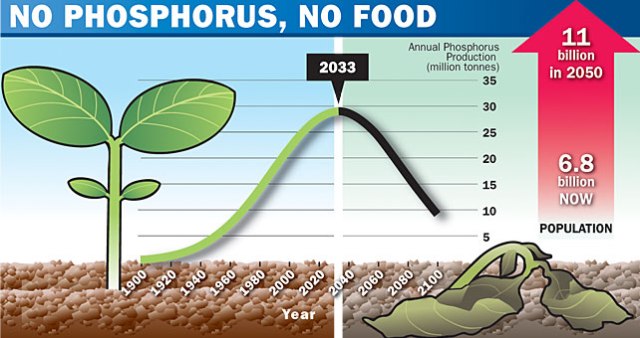

The climate here was indeed showing signs of long-term change. Rainfall in the south-central Rift Valley has dropped 15 to 20 percent since the mid-1970s, while the population—the number of mouths to feed—has mushroomed. “If these trends persist,” FEWS NET wrote in a 2012 report, they “could leave millions more Ethiopians exposed to hunger and undernourishment.”

Over the next few days we spiraled down from the highlands into harder-hit maize-growing areas and finally into scrublands north of the Kenyan border, a place of banana plantations and roadside baboons and throngs of cattle, which often marooned our vehicles. At times, the road seemed a province less of autos than of animals and their child handlers. Boys drove battalions of cows and sheep, balanced jerrycans of water on their shoulders and stood atop stick-built platforms in sorghum fields, flailing their arms to scare off crop-devouring queleas, a type of small bird.

Almost everywhere we stopped we found grim alignments between the red and orange dots on Senay’s maps and misery on the ground. Senay was gratified, but in the face of so much suffering, he wanted to do more. Farmers knew their own fields so well that he wondered how to make them players in the early warning system. With a mobile app, he thought, farmers could report on the land beneath their feet: instant ground-truthing that could help scientists sharpen their forecasts.

What farmers lacked was the big picture, and that’s what an app could give back: weather predictions, seasonal forecasts, daily crop prices in nearby markets. Senay already had a name: Satellite Integrated Farm Information, or SIFI. With data straight from farmers, experts in agricultural remote sensing, without ever setting foot on soil, would be a step closer to figuring out exactly how much food farmers could coax from the land.

But soil engulfed us now—it was in our boots, beneath our fingernails—and there was nothing to do but face farmers eye to eye.

“Allah, bless this field,” Senay said to a Muslim man, who’d told us of watching helplessly as drought killed off his corn crop.

“Allah will always bless this field,” the man replied. “We need something more.”