Thanks to SRW for bringing this to the attention of the It’s Interesting community.

Netflix has a plan to rewire our entire culture

Given all the faces you see glued to computers, tablets, and cell phones, you might think that people watch much less television than they used to. You would be wrong. According to Nielsen, Americans on average consume nearly five hours of TV every day, a number that has actually gone up since the 1990s. That works out to about 34 hours a week and almost 1,800 hours per year, more than the average French person spends working. The vast majority of that time is still spent in front of a standard television, watching live or prescheduled programming. Two decades into the Internet revolution, despite economic challenges and cosmetic upgrades, the ancient regime survives, remaining both the nation’s dominant medium and one of its most immutable.

And that’s why what Netflix is trying to do is so audacious. For the past two years, the Silicon Valley company has been making a major push into original programming, putting out an ambitious slate of shows that have cost Netflix, which had profits of $17 million in 2012, hundreds of millions of dollars. Because of the relative quality of some of those series, such as “House of Cards” (a multiple Emmy winner) and “Orange Is the New Black,” they’ve been widely interpreted as part of an attempt to become another HBO. Because every episode of every show is made available to watch right away, they’re also seen as simply a new twist in on-demand viewing. But in fact the company has embarked upon a venture more radical than any before it. It may even be more radical than Netflix itself realizes.

History has shown that minor changes in viewing patterns can have enormous cultural spillovers. CNN can average as few as 400,000 viewers at any given moment—but imagine what the country might be like if cable news had never come along. Netflix’s gambit, aped by Amazon Studios and other imitators, is to replace the traditional TV model with one dictated by the behaviors and values of the Internet generation. Instead of feeding a collective identity with broadly appealing content, the streamers imagine a culture united by shared tastes rather than arbitrary time slots. Pursuing a strategy that runs counter to many of Hollywood’s most deep-seated hierarchies and norms, Netflix seeks nothing less than to reprogram Americans themselves. What will happen to our mass culture if it succeeds?

Vladimir Nabokov believed that humanity’s highest yearning ought to be to leave behind any desire to be up-to-date, to be unconcerned with what is happening now. As he put it in his notes to Pale Fire: “Time without consciousness—lower animal world; time with consciousness—man; consciousness without time—some still higher state.”

The business of entertainment has not generally shared Nabokov’s view. It values timeliness above all, creating a hierarchy so fundamental that it resembles natural law: New is better than old, live trumps prerecorded, original episodes always beat reruns. That’s overwhelmingly obvious in sports and news, and accounts for the manufactured ephemerality of reality and talent shows. Yet it is also implicit in dramas and sitcoms, with their premieres, finite seasons, and finales. The rule holds fast for film as well. From its opening weekend in major theaters, through nearly two years of “release windows,” a movie drifts downward through airlines, hotels, DVDs, cable and network television, and the Internet, decaying in perceived worth.

The desire to be current is in some sense human nature. But when it comes to viewing choices, it also arises from the specific history and revenue model of the entertainment business. In its early years, television was necessarily live, for the technology of broadcasting preceded effective and cheap recording technologies. The first popular shows, like “Amos ‘n’ Andy,” were short serial dramas designed to keep audiences on a fixed daily schedule, each episode ending with some aspect of the plot unresolved. If you missed an installment, you missed it forever and might lose the big story in the bargain.

In normal markets, the most popular products aren’t necessarily the most profitable (think Louis Vuitton). But on network television, where the prices charged for advertising depend on ratings, comparative popularity matters a lot. If some sense of newness or urgency can get viewers from the desired demographic to tune in to one channel rather than another, that can make the difference between success and failure. The upshot is a business whose highest ambition is to get enormous groups of people watching the same thing at the same time: “event television.”

Atop this eventocracy are productions like the Super Bowl or the Oscars, which by managing to grab much of the nation therefore command the highest ad rates, about $4 million and $2 million, respectively. That compares with the $77,000 per spot that “30 Rock,” a smart but under-watched series, commanded at the end of its run. The premium on audience size orients creative decisions toward an ideal embodied by a Jay Leno monologue, avoidant of controversy or anything too weird or challenging. TV shows, in the words of economist Harold Vogel, are “scheduled interruptions of marketing bulletins.” And television itself, as Walter Lippmann, a founder of this magazine, put it, has long been “the creature, the servant, and indeed the prostitute, of merchandising.”

The Internet—while it has its own desires for attention—has always been a different animal. One way or another, it tends to thwart efforts to gather lots of users at the same time and same virtual place. The first “YouTube Music Awards,” stuffed with celebrities, attracted 220,000 live viewers, compared with more than ten million for MTV’s version. During the men’s 100-meter-dash final at the London Olympics, Web viewers groaned as NBC’s Web video lapsed into “buffer mode.”

Online, people are far more loyal to their interests and obsessions than to an externally imposed schedule. While they may end up seeing the same stuff as other viewers, it happens incrementally, through recommendation algorithms and personal endorsements relayed over Twitter feeds, Facebook posts, and e-mails. New content is like snowfall, some of it melting away, some of it sticking and gradually accumulating. The YouTube Music Awards may have been a bust as a live show, but within two weeks, the production had racked up 3.5 million views.

When I spoke with Netflix CEO Reed Hastings in August, I noticed a subtle but significant shift in nomenclature: He had begun to refer to the company not as a tech start-up or a new media venture, but instead as a “network.” To claim that mantle is not a trivial thing. The National Broadcasting Company (NBC) pioneered our understanding of what the concept means back in 1926, when it partnered with AT&T to create the first lasting national broadcast network. Until then, American home entertainment had been necessarily local—radio stations, technologically, only reached their host city or community. The founding idea of NBC was to offer a single, higher-quality product to the whole country. It was an idea of a piece with the late 1920s and 1930s, a time before fascism became unfashionable, when nationalism was all the rage. A mighty and unifying medium fit with an era during which Fortune would praise Benito Mussolini as presenting “the virtue of force and centralized government acting without conflict for the whole nation at once.” The national network was an effective way to put people on a common daily cultural diet.

Claiming network status was also a bold move for Netflix just based on its track record alone; not so long ago, it was a struggling DVD rental company whose cachet came from its distinctive red envelopes and pretty good website. One wonders how much the shift really was planned, a question on which Hastings and chief content officer Ted Sarandos disagree. “When I met Reed in 1999,” Sarandos told me, “part of our first conversations were about the potential for original programming.” Hastings demurs, calling that “generous.” “What was planned all along was really just the evolution to streaming,” he says, “and thus the name of the company: Netflix, not DVDbymail.com.”

Netflix’s transition from delivering movies and TV shows through the U.S. Postal Service to beaming them over high-speed connections began in 2007 and is a well-known story. Less known is how Netflix found its way into the content business, a risky move that has embarrassed many tech firms. In the ’90s, Panasonic, a capable-enough maker of camcorders, acquired a precursor to Universal, only to have to jettison the entertainment studio a few years later. Around the same time, Microsoft, then at its most flush, blew billions creating content that for the most part vanished so fast that not even a Bing search could find it today.

But Netflix, without grabbing many headlines, had actually spent a long time preparing for its current chapter. While it is headquartered in Silicon Valley, the company opened an office in Beverly Hills in 2002, a bid to achieve a certain California bilingualism. Sarandos, who has overseen that southern outpost, spent his formative years working in a strip-mall video-rental shop and is upbeat and easy to talk to. He is a fluent Southern Californian, unlike Hastings, who’s known for his impatience with slow or muddy thinkers.

Throughout the early 2000s, Sarandos experimented with small content deals. Once, while attending a software convention, he ran into a guy named Stu Pollard who had self-financed a romantic comedy named Nice Guys Sleep Alone, the many extra copies of which he now had stored in his garage.

“He gave me his movie, and he said, ‘I’ve got ten thousand of these if you’re interested,’ ” Sarandos recalls. He watched the film, decided it wasn’t terrible, or at least “on par with a lot of the romantic comedies we were distributing.” Sarandos agreed to take 500 discs, pursuant to a revenue share, creating what might be called the first Netflix semi-exclusive.

Sarandos’s acquisitions budget, originally $100,000 a year, swelled as Netflix became a regular at Sundance and other film festivals. Under the name Red Envelope Entertainment, Netflix bought the rights to independent films such as Born into Brothels, a 2004 documentary about the children of Calcutta prostitutes (it won an Academy Award), and Super High Me, a documentary about the effects of smoking weed heavily for a month (sperm count and verbal SAT scores both went up; math scores suffered). When it acquired these films, Netflix added them to its own catalogue but did not keep the content entirely for itself, instead trying to distribute it as widely as possible. For Super High Me, that included sponsoring viewing parties for stoners.

In 2008, after acquiring about 115 films, Netflix folded Red Envelope and let go of several employees. Sarandos, at the time, gave good reasons for Netflix’s retreat. “The best role we play,” he said, “is connecting the film to the audience, not as a financier, not as a producer, not as an outside distributor or marketer.” It was the statement of a tech company sobering up.

Yet just a few years later, Netflix abruptly reversed course again. The company had finally passed 20 million subscribers. To thrive over the long term, it would need many more. At the same time, it now had enough scale to try a different way of using new content to lure them. “When a big company does a little bit of music, or a little bit of video, and it’s not essential to their future, it’s almost assured that they won’t do it well,” says Hastings. “It’s a dabble.” To make its first major original series, Netflix shelled out $100 million. It would not be dabbling.

In 2011, when independent studio Media Rights Capital shopped the American remake of a modestly successful British political drama named “House of Cards,” Netflix didn’t bother to attend its presentation to the networks. Instead, Sarandos got in touch with David Fincher, the Oscar-winning director of The Social Network, who’d been tapped to make the show. “We want the series,” Sarandos told him, “and I’m going to pitch you on why you should sell it to us.” Aware of the challenge of convincing a famous auteur to bring his talents to a medium more commonly known for cat videos, Netflix promised a lot: Fincher, though he’d never directed a TV series before, would enjoy enormous creative control. And rather than putting the show through the normal pilot process, the company would commit to two 13-episode seasons up front. It was nearly as aggressive with “Orange Is the New Black,” ordering a second season of the show—a subversive drama set in a women’s prison, featuring a notably motley cast—before the first was even available.

If those were big gambles, they were also calculated ones. Whatever it calls itself, Netflix still has tech-company DNA; its game, in part, is data. Much more so than a network that reaches viewers through a third-party cable operator like Comcast or Time Warner, it knows what its customers actually like and how they behave. To the consternation of entertainment reporters, Netflix never reveals just what its numbers say (or anything resembling ratings), but Sarandos says its process for “House of Cards” worked roughly like this: “We read lots of data to figure out how popular Kevin Spacey was over his entire output of movies. How many people actually highly rate four or five of them?” Then his team did the same for David Fincher. If you liked The Social Network, The Curious Case of Benjamin Button, and Fight Club, “you’re probably a Fincher fan—you probably don’t know it, but you are,” he says. Once the company has a sense of how many fans are out there, it can “more accurately predict the absolute market size for a show.” And when you can do that, you don’t have to worry about pandering to, or offending, the masses.

Right now, American viewers are averaging only about 45 minutes of Internet-streaming video per week, a blip in comparison with total television intake. Given that audiences have been trained for decades to respond to event-driven television, how realistic is it to expect more viewers to shift from traditional TV? John Steinbeck offered one answer: “It’s a hard thing to leave any deeply routined life, even if you hate it.” Any historian of consumer technology would add that machines change much faster than people.

Television in particular moves so slowly that the last time the concept of the network really came up for grabs was the late ’70s. That’s when Ted Turner (the Turner Broadcasting System), Pat Robertson (the Christian Broadcast Network), and the founders of HBO successfully used satellites to begin to beam programming to cable subscribers. The ensuing frenzy resulted in the launch of a dozen networks, including ESPN, MTV, CNN, Discovery, and Bravo. Most of those channels are still around, not necessarily because of the strength of their programming, but because the reigning content hierarchy has been so entrenched.

Netflix believes it has a powerful factor in its favor as it tries to change viewers’ habits. “Human beings like control,” says Sarandos. “To make all of America do the same thing at the same time is enormously inefficient, ridiculously expensive, and most of the time, not a very satisfying experience.” There is a freedom achieved when your options extend beyond that night’s offerings and the limited selection of past episodes that networks make available on demand. Specifically, it’s the freedom to only watch television you really enjoy. The crude novelty factor that compels people to try “Whitney” or “Smash” ultimately yields a lot of disappointed and frustrated viewers. An old episode of “Freaks and Geeks” or “The West Wing” might in fact be more worth your time—a message Netflix has pressed in a recent ad campaign promoting its collections of classic series and cult hits. Eventually—or so goes the strategy—people won’t be able to imagine having their options defined by a programming grid. Not coincidentally, Netflix has been vying with Amazon to become the premier source of streaming series for young children, for whom having to wait for new episodes of their favorite shows to air is unfathomable.

While Netflix’s first few original series have been aimed at connoisseurs of high- or at least upper-middle-brow fare, its philosophy might be best captured by its co-production of “Derek,” a show that skews toward less sophisticated sensibilities. “Derek” is a Ricky Gervais sitcom revolving around the staff and residents of a small nursing home in England. The show, to put it mildly, does not have the usual indicia of widespread appeal. It might be described as the opposite of “Baywatch”—the setting is bleak, the stars ugly and often annoying, the dialogue sometimes incomprehensible to American ears.

And then there’s Gervais himself. He did serve for three years as the host of the Golden Globes, a paragon of event programming and water-cooler culture. Yet in that role, he was deemed a failure, his humor too edgy and offensive. Selling the masses on a series featuring Gervais playing the part of a weird man with greasy hair who likes hamster videos would be a losing proposition—but that’s not what Netflix is doing. To the company, it doesn’t matter if you’ve never heard of the show, or even know anyone who has. All that matters is that it wins the approval of Gervais loyalists, who, the data must show, are a large enough Netflix population to justify the investment. Similarly, the names Luke Cage and Jessica Jones may mean nothing to you, but they do to comic-book fans, which is why Netflix just worked out a deal to create series based on them and two other Marvel superheroes.

Netflix’s transformation would of course be impossible without the path blazed by premium cable. HBO pioneered the subscription-fee model (though it collects from cable companies rather than directly from consumers) and its success made possible the specialized programming on other premium networks, like AMC and the rest. The DVD box set gave hard-core enthusiasts the first taste of the binge-viewing that is a Netflix trademark. The company’s achievement is to bring it all together and target the entire TV-watching population—not just a few selected die-hards, but every individual based on his or her own interests and obsessions.

And from that a picture of the not-too-distant TV future emerges. What remains of live programming is reserved for sports programming, breaking news stories, talent contests, and the big awards shows. Nearly all scripted shows become streaming shows, whether they are produced or aggregated by Netflix or Amazon, CBS or a (finally unbundled) HBO—or even an unexpected entrant such as Target, which recently launched a Netflix competitor. The new networks compete based on their ability to make the right original programming decisions and secure the best old shows, as well as the prescience of their recommendation engines. But ultimately they’re all just selling access to piles of content to be perused at the viewer’s desire. Oddly enough, it’s a vision that actually makes television a lot more like the rest of retail. Or, more specifically, not unlike the old-style video-rental stores where Sarandos started his career, but super-sized for a new era.

Through the sheer number of hours watched and the dictation of evening routines—not to mention the way people orient entire rooms around the shiny screens placed at the center of their homes—network television played a singular role in creating American mass culture over the last 60 years. It now does the same in sustaining its vestiges. In the absence of a generation-defining genre—the rock of the 1960s, the rap of the 1990s—today’s pop hits flit through radio dials and iTunes playlists, catchy but ephemeral. Blockbuster movies and books are few these days, and the windows during which they command widespread attention brief. But television, despite the fragmenting influence of the Web and proliferating cable channels, continues to bind us more than any other medium. That’s why, should Netflix and the other streamers even partially succeed at redefining the network as we know it, the effects will be so profound.

If modern American popular culture was built on a central pillar of mainstream entertainment flanked by smaller subcultures, what stands to replace it is a very different infrastructure, one comprising islands of fandom. With no standard daily cultural diet, we’ll tilt even more from a country united by shows like “I Love Lucy” or “Friends” toward one where people claim more personalized allegiances, such as to the particular bunch of viewers who are obsessed with “Game of Thrones” or who somehow find Ricky Gervais unfailingly hysterical, as opposed to painfully offensive.

The baby-boomer intellectuals who lament the erosion of shared values are right: Something will be lost in the transition. At the water cooler or wedding reception or cocktail party or kid’s soccer game, conversations that were once a venue for mutual experiences will become even more strained as chatter about last night’s overtime thriller or “Seinfeld” shenanigans is replaced by grasping for common ground. (“Have you heard of ‘The Defenders’? Yeah? What episode are you on?”) At a deeper level, a country already polarized by the echo chambers of ideologically driven journalism and social media will find itself with even less to agree on.

But it’s not all cause for dismay. Community lost can be community gained, and as mass culture weakens, it creates openings for the cohorts that can otherwise get crowded out. When you meet someone with the same particular passions and sensibility, the sense of connection can be profound. Smaller communities of fans, forged from shared perspectives, offer a more genuine sense of belonging than a national identity born of geographical happenstance.

Whether a future based fundamentally on fandom is superior in any objective sense is impossible to say. But it’s worth keeping in mind that the whole idea of one great entertainment medium that unites the country isn’t really that old a tradition, particularly American, nor necessarily noble. We may come to remember it as a twentieth-century quirk, born of particular business models and an obsession with national unity indelibly tied to darker projects. The whole ideal of “forging one people” is not entirely benevolent and has always been at odds with a country meant to be the home of the free.

Certainly, a culture where niche supplants mass hews closer to the original vision of the Americas, of a new continent truly open to whatever diverse and eccentric groups showed up. The United States was once, almost by definition, a place without a dominant national identity. As it revolutionizes television, Netflix is merely helping to return us to that past.

Tim Wu, a New Republic contributing editor, is a professor at Columbia Law School, and the author, most recently, of The Master Switch: The Rise and Fall of Information Empires.

Advanced ‘artificial skin’ senses touch, humidity, and temperature

Technion-Israel Institute of Technology scientists have discovered how to make a new kind of flexible sensor that one day could be integrated into “electronic skin” (e-skin) — a covering for prosthetic limbs that would allow patients to feel touch, humidity, and temperature.

Current kinds of e-skin detect only touch, but the Technion team’s invention “can simultaneously sense touch (pressure), humidity, and temperature, as real skin can do,” says research team leader Professor Hossam Haick.

Additionally, the new system “is at least 10 times more sensitive in touch than the currently existing touch-based e-skin systems.”

Researchers have long been interested in flexible sensors, but have had trouble adapting them for real-world use, Haick says. A flexible sensor would have to run on low voltage (so it would be compatible with the batteries in today’s portable devices), measure a wide range of pressures, and make more than one measurement at a time, including humidity, temperature, pressure, and the presence of chemicals. These sensors would also have to be able to be manufactured quickly, easily, and cheaply.

The Technion team’s sensor has all of these qualities, Haick says. The secret: monolayer-capped gold nanoparticles that are only 5–8 nanometers in diameter, surrounded by connector molecules called ligands.

“Monolayer-capped nanoparticles can be thought of as flowers, where the center of the flower is the gold or metal nanoparticle and the petals are the monolayer of organic ligands that generally protect it,” says Haick.

The team discovered that when these nanoparticles are laid on top of a substrate — in this case, made of PET (flexible polyethylene terephthalate), the same plastic found in soda bottles — the resulting compound conducted electricity differently depending on how the substrate was bent.

The bending motion brings some particles closer to others, increasing how quickly electrons can pass between them. This electrical property means that the sensor can detect a large range of pressures, from tens of milligrams to tens of grams.

And by varying how thick the substrate is, as well as what it is made of, scientists can modify how sensitive the sensor is. Because these sensors can be customized, they could in the future perform a variety of other tasks, including monitoring strain on bridges and detecting cracks in engines.

“The sensor is very stable and can be attached to any surface shape while keeping the function stable,” says Dr. Nir Peled, Head of the Thoracic Cancer Research and Detection Center at Israel’s Sheba Medical Center, who was not involved in the research.

Meital Segev-Bar et al., Tunable Touch Sensor and Combined Sensing Platform: Toward Nanoparticle-based Electronic Skin, ACS Applied Materials & Interfaces, 2013, DOI: 10.1021/am400757q

http://www.kurzweilai.net/advanced-artificial-skin-senses-touch-humidity-and-temperature

What 3 decades of research tells us about whether brutally violent video games lead to mass shootings

It was one of the most brutal video games imaginable—players used cars to murder people in broad daylight. Parents were outraged, and behavioral experts warned of real-world carnage. “In this game a player takes the first step to creating violence,” a psychologist from the National Safety Council told the New York Times. “And I shudder to think what will come next if this is encouraged. It’ll be pretty gory.”

To earn points, Death Race encouraged players to mow down pedestrians. Given that it was 1976, those pedestrians were little pixel-gremlins in a 2-D black-and-white universe that bore almost no recognizable likeness to real people.

Indeed, the debate about whether violent video games lead to violent acts by those who play them goes way back. The public reaction to Death Race can be seen as an early predecessor to the controversial Grand Theft Auto three decades later and the many other graphically violent and hyper-real games of today, including the slew of new titles debuting at the E3 gaming summit this week in Los Angeles.

In the wake of the Newtown massacre and numerous other recent mass shootings, familiar condemnations of and questions about these games have reemerged. Here are some answers.

Who’s claiming video games cause violence in the real world?

Though conservatives tend to raise it more frequently, this bogeyman plays across the political spectrum, with regular calls for more research, more regulations, and more censorship. The tragedy in Newtown set off a fresh wave:

Donald Trump tweeted: “Video game violence & glorification must be stopped—it’s creating monsters!” Ralph Nader likened violent video games to “electronic child molesters.” (His outlandish rhetoric was meant to suggest that parents need to be involved in the media their kids consume.) MSNBC’s Joe Scarborough asserted that the government has a right to regulate video games, despite a Supreme Court ruling to the contrary.

Unsurprisingly, the most over-the-top talk came from the National Rifle Association:

“Guns don’t kill people. Video games, the media, and Obama’s budget kill people,” NRA Executive Vice President Wayne LaPierre said at a press conference one week after the mass shooting at Sandy Hook Elementary. He continued without irony: “There exists in this country, sadly, a callous, corrupt and corrupting shadow industry that sells and sows violence against its own people through vicious, violent video games with names like Bulletstorm, Grand Theft Auto, Mortal Kombat, and Splatterhouse.”

Has the rhetoric led to any government action?

Yes. Amid a flurry of broader legislative activity on gun violence since Newtown there have been proposals specifically focused on video games. Among them:

State Rep. Diane Franklin, a Republican in Missouri, sponsored a state bill that would impose a 1 percent tax on violent games, the revenues of which would go toward “the treatment of mental-health conditions associated with exposure to violent video games.” (The bill has since been withdrawn.) Vice President Joe Biden has also promoted this idea.

Rep. Jim Matheson (D-Utah) proposed a federal bill that would give the Entertainment Software Rating Board’s ratings system the weight of the law, making it illegal to sell Mature-rated games to minors, something Gov. Chris Christie (R-N.J.) has also proposed for his home state.

A bill introduced in the Senate by Sen. Jay Rockefeller (D-W.Va.) proposed studying the impact of violent video games on children.

So who actually plays these games and how popular are they?

While many of the top-selling games in history have been various Mario and Pokemon titles, games from the first-person-shooter genre, which appeal in particular to teen boys and young men, are also huge sellers.

The new king of the hill is Activision’s Call of Duty: Black Ops II, which surpassed Wii Play as the No. 1 grossing game in 2012. Call of Duty is now one of the most successful franchises in video game history, topping charts year over year and boasting around 40 million active monthly users playing one of the franchise’s games over the internet. (Which doesn’t even include people playing the game offline.) There is already much anticipation for the release later this year of Call of Duty: Ghosts.

The Battlefield games from Electronic Arts also sell millions of units with each release. Irrational Games’ BioShock Infinite, released in March, has sold nearly 4 million units and is one of the most violent games to date.

What research has been done on the link between video games and violence, and what does it really tell us?

Studies on how violent video games affect behavior date to the mid-1980s, with conflicting results. Since then there have been at least two dozen studies conducted on the subject.

“Video Games, Television, and Aggression in Teenagers,” published by the University of Georgia in 1984, found that playing arcade games was linked to increases in physical aggression. But a study published a year later by the Albert Einstein College of Medicine, “Personality, Psychopathology, and Developmental Issues in Male Adolescent Video Game Use,” found that arcade games have a “calming effect” and that boys use them to blow off steam. Both studies relied on surveys and interviews asking boys and young men about their media consumption.

Studies grew more sophisticated over the years, but their findings continued to point in different directions. A 2011 study found that people who had played competitive games, regardless of whether they were violent or not, exhibited increased aggression. In 2012, a different study found that cooperative playing in the graphically violent Halo II made the test subjects more cooperative even outside of video game playing.

Metastudies—comparing the results and the methodologies of prior research on the subject—have also been problematic. One published in 2010 by the American Psychological Association, analyzing data from multiple studies and more than 130,000 subjects, concluded that “violent video games increase aggressive thoughts, angry feelings, and aggressive behaviors and decrease empathic feelings and pro-social behaviors.” But results from another metastudy showed that most studies of violent video games over the years suffered from publication biases that tilted the results toward foregone correlative conclusions.

Why is it so hard to get good research on this subject?

“I think that the discussion of media forms—particularly games—as some kind of serious social problem is often an attempt to kind of corral and solve what is a much broader social issue,” says Carly Kocurek, a professor of Digital Humanities at the Illinois Institute of Technology. “Games aren’t developed in a vacuum, and they reflect the cultural milieu that produces them. So of course we have violent games.”

There is also the fundamental problem of measuring violent outcomes ethically and effectively.

“I think anybody who tells you that there’s any kind of consistency to the aggression research is lying to you,” Christopher J. Ferguson, associate professor of psychology and criminal justice at Texas A&M International University, told Kotaku. “There’s no consistency in the aggression literature, and my impression is that at this point it is not strong enough to draw any kind of causal, or even really correlational links between video game violence and aggression, no matter how weakly we may define aggression.”

Moreover, determining why somebody carries out a violent act like a school shooting can be very complex; underlying mental-health issues are almost always present. More than half of mass shooters over the last 30 years had mental-health problems.

But America’s consumption of violent video games must help explain our inordinate rate of gun violence, right?

Actually, no. A look at global video game spending per capita in relation to gun death statistics reveals that gun deaths in the United States far outpace those in other countries—including countries with higher per capita video game spending.

A 10-country comparison from the Washington Post shows the United States as the clear outlier in this regard. Countries with the highest per capita spending on video games, such as the Netherlands and South Korea, are among the safest countries in the world when it comes to guns. In other words, America plays about the same number of violent video games per capita as the rest of the industrialized world, even though we far outpace every other nation in terms of gun deaths.

Or, consider it this way: With violent video game sales almost always at the top of the charts, why do so few gamers turn into homicidal shooters? In fact, the number of violent youth offenders in the United States fell by more than half between 1994 and 2010, while video game sales have more than doubled since 1996. A working paper from economists on violence and video game sales published in 2011 found that higher rates of violent video game sales in fact correlated with a decrease in crimes, especially violent crimes.

I’m still not convinced. A bunch of mass shooters were gamers, right?

Some mass shooters over the last couple of decades have had a history with violent video games. The Newtown shooter, Adam Lanza, was reportedly “obsessed” with video games. Norway shooter Anders Behring Breivik was said to have played World of Warcraft for 16 hours a day until he gave up the game in favor of Call of Duty: Modern Warfare, which he claimed he used to train with a rifle. Aurora theater shooter James Holmes was reportedly a fan of violent video games and movies such as The Dark Knight. (Holmes reportedly went so far as to mimic the Joker by dyeing his hair prior to carrying out his attack.)

Jerald Block, a researcher and psychiatrist in Portland, Oregon, stirred controversy when he concluded that Columbine shooters Eric Harris and Dylan Klebold carried out their rampage after their parents took away their video games. According to the Denver Post, Block said that the two had relied on the virtual world of computer games to express their rage, and that cutting them off in 1998 had sent them into crisis.

But that’s clearly an oversimplification. The age and gender of many mass shooters, including Columbine’s, place them right in the target demographic for first-person-shooter (and most other) video games. And people between ages 18 and 25 also tend to report the highest rates of mental-health issues. Harris and Klebold’s complex mental-health problems have been well documented.

To hold up a few sensational examples as evidence of a causal link between violent games and violent acts ignores the millions of other young men and women who play violent video games and never go on a shooting spree in real life. Furthermore, it’s very difficult to determine empirically whether violent kids are simply drawn to violent forms of entertainment, or if the entertainment somehow makes them violent. Without solid scientific data to go on, it’s easier to draw conclusions that confirm our own biases.

How is the industry reacting to the latest outcry over violent games?

Moral panic over the effects of violent video games on young people has had an impact on the industry over the years, says Kocurek, noting that “public and government pressure has driven the industry’s efforts to self regulate.”

In fact, the video game industry is among the best when it comes to abiding by its own voluntary ratings system, with self-regulated retail sales of Mature-rated games to minors lower than in any other entertainment field.

But is that enough? Even conservative judges think there should be stronger laws regulating these games, right?

There have been two major Supreme Court cases involving video games and attempts by the state to regulate access to them: Aladdin’s Castle, Inc. v. City of Mesquite in 1983 and Brown v. Entertainment Merchants Association in 2011.

“Both cases addressed attempts to regulate youth access to games, and in both cases, the court held that youth access can’t be curtailed,” Kocurek says.

In Brown v. EMA, the Supreme Court found that the research simply wasn’t compelling enough to spark government action, and that video games, like books and film, were protected by the First Amendment.

“Parents who care about the matter can readily evaluate the games their children bring home,” Justice Antonin Scalia wrote when the Supreme Court deemed California’s video game censorship bill unconstitutional in Brown v. EMA. “Filling the remaining modest gap in concerned-parents’ control can hardly be a compelling state interest.”

So how can we explain the violent acts of some kids who play these games?

For her part, Kocurek wonders if the focus on video games is mostly a distraction from more important issues. “When we talk about violent games,” she says, “we are too often talking about something else and looking for a scapegoat.”

In other words, violent video games are an easy thing to blame for a more complex problem. Public policy debates, she says, need to focus on serious research into the myriad factors that may contribute to gun violence. This may include video games—but a serious debate needs to look at the dearth of mental-health care in America, our abundance of easily accessible weapons, our highly flawed background-check system, and other factors.

There is at least one practical approach to violent video games, however, that most people would agree on: Parents should think deliberately about purchasing these games for their kids. Better still, they should be involved in the games their kids play as much as possible so that they can know firsthand whether the actions and images they’re allowing their children to consume are appropriate or not.

Thanks to SRW for bringing this to the attention of the It’s Interesting community.

Why music makes our brain sing

By ROBERT J. ZATORRE and VALORIE N. SALIMPOOR

Published: June 7, 2013

Music is not tangible. You can’t eat it, drink it or mate with it. It doesn’t protect against the rain, wind or cold. It doesn’t vanquish predators or mend broken bones. And yet humans have always prized music — or well beyond prized, loved it.

In the modern age we spend great sums of money to attend concerts, download music files, play instruments and listen to our favorite artists whether we’re in a subway or salon. But even in Paleolithic times, people invested significant time and effort to create music, as the discovery of flutes carved from animal bones would suggest.

So why does this thingless “thing” — at its core, a mere sequence of sounds — hold such potentially enormous intrinsic value?

The quick and easy explanation is that music brings a unique pleasure to humans. Of course, that still leaves the question of why. But for that, neuroscience is starting to provide some answers.

More than a decade ago, our research team used brain imaging to show that music that people described as highly emotional engaged the reward system deep in their brains — activating subcortical nuclei known to be important in reward, motivation and emotion. Subsequently we found that listening to what might be called “peak emotional moments” in music — that moment when you feel a “chill” of pleasure to a musical passage — causes the release of the neurotransmitter dopamine, an essential signaling molecule in the brain.

When pleasurable music is heard, dopamine is released in the striatum — an ancient part of the brain found in other vertebrates as well — which is known to respond to naturally rewarding stimuli like food and sex and which is artificially targeted by drugs like cocaine and amphetamine.

But what may be most interesting here is when this neurotransmitter is released: not only when the music rises to a peak emotional moment, but also several seconds before, during what we might call the anticipation phase.

The idea that reward is partly related to anticipation (or the prediction of a desired outcome) has a long history in neuroscience. Making good predictions about the outcome of one’s actions would seem to be essential in the context of survival, after all. And dopamine neurons, both in humans and other animals, play a role in recording which of our predictions turn out to be correct.

To dig deeper into how music engages the brain’s reward system, we designed a study to mimic online music purchasing. Our goal was to determine what goes on in the brain when someone hears a new piece of music and decides he likes it enough to buy it.

We used music-recommendation programs to customize the selections to our listeners’ preferences, which turned out to be indie and electronic music, matching Montreal’s hip music scene. And we found that neural activity within the striatum — the reward-related structure — was directly proportional to the amount of money people were willing to spend.

But more interesting still was the cross talk between this structure and the auditory cortex, which also increased for songs that were ultimately purchased compared with those that were not.

Why the auditory cortex? Some 50 years ago, Wilder Penfield, the famed neurosurgeon and the founder of the Montreal Neurological Institute, reported that when neurosurgical patients received electrical stimulation to the auditory cortex while they were awake, they would sometimes report hearing music. Dr. Penfield’s observations, along with those of many others, suggest that musical information is likely to be represented in these brain regions.

The auditory cortex is also active when we imagine a tune: think of the first four notes of Beethoven’s Fifth Symphony — your cortex is abuzz! This ability allows us not only to experience music even when it’s physically absent, but also to invent new compositions and to reimagine how a piece might sound with a different tempo or instrumentation.

We also know that these areas of the brain encode the abstract relationships between sounds — for instance, the particular sound pattern that makes a major chord major, regardless of the key or instrument. Other studies show distinctive neural responses from similar regions when there is an unexpected break in a repetitive pattern of sounds, or in a chord progression. This is akin to what happens if you hear someone play a wrong note — easily noticeable even in an unfamiliar piece of music.

These cortical circuits allow us to make predictions about coming events on the basis of past events. They are thought to accumulate musical information over our lifetime, creating templates of the statistical regularities that are present in the music of our culture and enabling us to understand the music we hear in relation to our stored mental representations of the music we’ve heard.

So each act of listening to music may be thought of as both recapitulating the past and predicting the future. When we listen to music, these brain networks actively create expectations based on our stored knowledge.

Composers and performers intuitively understand this: they manipulate these prediction mechanisms to give us what we want — or to surprise us, perhaps even with something better.

In the cross talk between our cortical systems, which analyze patterns and yield expectations, and our ancient reward and motivational systems, may lie the answer to the question: does a particular piece of music move us?

When that answer is yes, there is little — in those moments of listening, at least — that we value more.

Robert J. Zatorre is a professor of neuroscience at the Montreal Neurological Institute and Hospital at McGill University. Valorie N. Salimpoor is a postdoctoral neuroscientist at the Baycrest Health Sciences’ Rotman Research Institute in Toronto.

Thanks to S.R.W. for bringing this to the attention of the It’s Interesting community.

How Mom Julie Deane used Google to build a global fashion brand

Julie Deane founded Cambridge Satchel Company in her kitchen in 2008, with her mom at her side. “It’s one of those businesses that was started out of necessity because I needed to make school fees for my daughter, who was being bullied at school,” says Deane. “I made a promise to her that I would move her to a place that she could be really safe and happy. Once you make a promise to your children, there’s no going back.”

So she developed a few ideas for companies she could start with a budget of £600, one of which was a satchel company. Now, people scoff at the idea of starting a company on such a shoestring budget, but Deane maintains, to this day, that “it seems a perfectly reasonable amount to give something a go with,” and that you can get a sense of whether something has legs or not without getting in too deep.

Once she decided on satchels, Deane sat down at her kitchen table and started Googling handbag manufacturers, leather goods manufacturers, leather suppliers, saddle makers and anyone who would cut leather and make things from scratch for her. She “hammered” the Internet to make sure nobody else was doing it, a process she now knows is actually called competitor analysis. Once she had determined that her venture was unique, she drove to the tanneries and manufacturers to get them on board.

She found a leather supplier and got to work on a prototype. With a product in the works, she then set out to build a website. “I didn’t know very much about websites at all,” says Deane. “I thought, ‘It can’t be that hard, there must be a course on the Internet.'” She found one, and she spent two nights taking a course and then made the website on the third night.

“At this point, most people have access to the Internet, and it’s all there,” says Deane, who now speaks to students about entrepreneurship and bootstrapping. “I tell them, ‘You don’t need to pay someone else to do your web design and SEO and AdWords campaign’ … it’s lazy.”

Deane put herself on every free online listing she could — from the Yellow Pages and mom blogs to Etsy and eBay. “I read this book called Guerilla Marketing, and it says you need to try multiple avenues of marketing, and if people see your name enough times, then they’ll get curious enough to look you up,” she says.

As she started selling chestnut, dark brown and black satchels online, Deane engaged her customers. She’d ask them to send a photo of them with their satchel and to write a testimonial for the site if they really loved the bag (and to send it back if they didn’t), thinking a solid review could lend her fledgling business credibility and encourage other people to buy. A few of Deane’s early customers mentioned their love for fashion blogs, which opened a world of opportunity to Deane.

“I couldn’t believe how frequently people would be checking these fashion blogs!” Deane says. Whenever a customer mentioned a go-to fashion blog, Deane sent the blogger an email, telling them about Cambridge Satchels and sending a photo, in hopes of a shout-out. She would tell them, “I can’t send you free samples — maybe in a year’s time if it goes well, you can have one, but in the meantime, here’s a photograph!”

When the leather supplier worked on a project with red and navy, Deane had some colored satchels made. She had refrained from bespoke colors before, since the minimum order would be six months’ worth of sales: “If I’d picked a dud of a color, that would not have been good news for the school fees!” Deane says the red and navy brought about a “lightbulb moment” for her. “The minute I had red and navy, those really took off, and it became very, very clear that the way forward would be through different colors.”

Over the years, Deane aggressively worked with fashion bloggers and prominent fashionistas, sponsoring giveaways and gifting satchels, which yielded organic buzz. Cambridge Satchel built strong relationships with these bloggers (even asking them what color satchels the company should make next), and these relationships enabled the brand to skirt traditional advertising. Fully embraced as a fashion obsession, Cambridge Satchels grew thanks to social media and word of mouth, especially via blogs.

In September 2011, Elle UK reached out, inquiring whether Deane could produce a brighter satchel for inclusion in an upcoming fluorescent trend piece. Always one to capitalize on a new opportunity, Deane produced these satchels and sent them to bloggers who were attending New York Fashion Week. “When the lights at the shows went down and the people started taking flash photography, the satchels really popped,” says Deane. “So we got noticed, and the New York Times and the New York Post called us the ‘street style of New York Fashion Week.'” (And of course, the fashion bloggers gave Cambridge Satchel widespread press online, too.)

The success of Fashion Week in 2011 prompted Bloomingdales and Saks to bring the satchels Stateside, where they were put in the window and dubbed “The Brit It Bag” during Fashion Week in February 2012. “That was a big moment for us, when we really got noticed,” says Deane.

This new trend didn’t go unnoticed. Just a few months after Fashion Week, ad agency BBH contacted Deane. They asked for facts and figures about the business and the whole story of how she started it, because Cambridge Satchel was being pitched as part of a large media opportunity. “I had no idea what they were talking about, or who they were talking to, so it was making me feel really worried!” recalls Deane. After signing a large NDA, Deane found out the client was Google, and it was looking to do commercials for its “the web is what you make of it” campaign. Cambridge Satchel was a perfect fit, and after Deane went to London to meet with the client, she had herself a high-profile television spot.

“Julie saw the Internet as a key to her success,” says Rich Pleeth, Consumer Marketing Manager at Google. “That’s why we built Chrome — to provide people like her and businesses like this with the richest and best web experience across all devices, combining speed, simplicity and security.”

Deane had built great momentum for her burgeoning business through the web, and more specifically, Google. “Right from the moment of trying to source everything from my first suppliers — thread suppliers and rivet suppliers and property for the factory — up through the AdWords and the analytics and Google Translate (for foreign emails), we do use all of those things, so to be able to be part of something that’s a very honest version of exactly how Cambridge Satchel started, I was very comfortable with it, it wasn’t a stretch at all,” explains Deane.

The ad ran in the UK and Ireland in late September and early October, then again from December 17 through 31.

“I’ve gotten so many fantastic emails about it,” says Deane. But more than that, she’s seen traffic and sales increase sharply thanks to the ad, which ran on TV and has also surpassed 5.3 million views by YouTube’s global audience.

While it’s hard to pin down exactly how much web traffic and sales are attributable to the Chrome ad, Deane — being a numbers junkie — has tried quantifying the ad’s effect. Total sales from September through December 2012 more than doubled over 2011. But in the UK alone, sales increased a whopping 400%, and the commercial has to be responsible for some of that lift. And if you look at the Google Analytics reports of Cambridge Satchel’s web traffic on an hourly basis, there’s a big spike every time a commercial airs.

As if Google hadn’t been generous enough, the company incorporated Cambridge Satchel into a live experiment during IAB Engage 2012, when Mark Howe, Managing Director of Agency Operations Europe at Google, did a Hangout with Deane, who had Google pros at her office boosting her SEO, improving analytics, setting up a branded YouTube page, optimizing her site for mobile and upping her social media strategy.

“It has been proven that companies which build their business online grow their business at four to eight times faster than those that don’t,” says Pleeth. “The Internet has given them a global reach they would never have otherwise.”

Today, Cambridge Satchels are sold in 190 outposts in 100 countries, and the company does more than £8 million in annual sales. And all Julie Deane set out to do with £600 was make enough money to cover her daughter’s tuition.

During London Fashion Week, Deane opened her first brick-and-mortar location in London’s Covent Garden. It’s a two-story space, and the entire lower level is devoted to the web-savvy cohort who made her famous — fashion bloggers.

“I really feel very strongly that the bloggers are the people who started my business,” says Deane. “They’re a group of people who don’t have offices, and you’ll see them at New York and London Fashion Week sitting in Starbucks writing their pieces.” But now, at least in London, these bloggers will have a home base where they can write stories, make a cup of tea, browse the web, socialize and, of course, charge their phone so they can tweet and Instagram the next big thing. “I’m really excited about the lounge because it feels like a tangible way to thank the community that has helped me so much,” says Deane.

Lessons Learned From the Julie Deane School of Entrepreneurship

•Take risks.

•Be resourceful — DIY as much as possible.

•Know your audience, how they behave and where they spend their time.

•Don’t give your product away or sell it short, but strategic gifting can go a long way.

•Seize opportunities.

•Engage your fans, offer them a stake in your company.

•Find valuable brand partners with whom to run competitions and giveaways.

•Be authentic — Julie tweets about her dog, Rupert, which humanizes the brand.

•Embrace the web and the platforms that live on it.

Thanks to SRW for bringing this to the attention of the It’s Interesting community.

Stockbroker Shaun “English Shaun” Attwood: “I used my stock market millions to throw raves and sell drugs.”

Think of drug lords in America, and it’s likely that you’ll think of emotionally erratic men with harems of coked-up megababes on big yachts in Miami. Or, if you watch a lot of DVD box sets, terminally ill chemistry teachers or Idris Elba. One cliche that probably won’t spring to mind is a polite, educated ex-stockbroker from the UK’s industrial Cheshire.

Shaun “English Shaun” Attwood is an incredibly unlikely ecstasy kingpin. Growing up in Widnes, just outside of Liverpool, Shaun invested in the US stock market when he was young, made his millions, moved to Phoenix, Arizona, started throwing raves, and became a major drug supplier. When he wasn’t planning parties in the deserts of Arizona, he was working in direct competition with the Italian Mafia and alongside the New Mexican Mafia to supply millions of dollars’ worth of ecstasy to the ravers of mid-90s Phoenix.

His motivation for doing all this, besides the fact that partying for a living is a lot more fun than selling shares? He wanted to introduce Americans to the British rave culture he’d grown up with. Unfortunately, as is often the way when you’re handling millions of dollars of narcotics, Shaun was caught and ended up being sent to Maricopa County Jail, widely regarded as America’s toughest prison. Shaun’s been out of jail for a few years, so I called him to see if he’s still so keen to spread the love to the Yanks.

VICE: So you went from being a millionaire stockbroker to becoming a major drug dealer in Arizona. How did that happen?

Shaun Attwood: The Manchester rave scene made such a big impression on me that I decided to transfer that scene to Phoenix, Arizona, after moving over there. After becoming a millionaire as a young person, I had more money than common sense, so I didn’t see the law as an obstacle to my partying or a barrier to bringing tens of thousands of hits of ecstasy into America from Holland.

That was for the Mafia, right?

Yeah, I was supplying ecstasy to the New Mexican Mafia. In the beginning, I didn’t know who they were, but it came about because I was a friend of a gang member’s brother. Years later, they were all arrested and the news headlines reported that they were the most powerful and violent Mafia in Arizona at that time, committing murder-for-hire and executing witnesses.

And you were in direct competition with the Italian Mafia member Sammy “The Bull” Gravano. What’s the story with that?

Yeah. Years later in prison, his son, Gerard Gravano, told me that he’d been dispatched as the head of an armed crew to kidnap me from a nightclub and take me out to the desert. I’d avoided him that night because my best friend, Wild Man, had got in a fight, and we’d had to leave the club in a hurry.

Talking of clubs, how did the rave scene in America compare with the raves in England at the time?

Oh, it was small at first. It took years to catch up. Ecstasy was very expensive: it was $30 a hit in the mid-90s.

Where did you put on your parties?

The first one was in a warehouse in west Phoenix owned by the Mexican Mafia, but they were at various different locations after that.

Did selling drugs come after you started putting on raves? It seems like throwing raves is the perfect way of creating a solid customer base.

Well, I was selling ecstasy before I started the raves, but they obviously provided more of a market.

How did you find it going from a high-pressure, high-income job to dealing and partying all the time?

I enjoyed it at first. There was one rave where I booked Chris Liberator and Dave the Drummer. I remember hearing Chris Liberator’s beats and being mesmerized by the sight of thousands of people dancing to English DJs with the same blissful expressions that I had on my face when I first got into raving. I thought, This is it, I’ve realized my dream. But I started taking too many drugs and got incredibly paranoid because of all the risks I was taking.

That sounds like a familiar story. Did the police get on to you?

It was inevitable. Dealing drugs leads to police trouble, prison, or death. I sowed the seeds of my downfall and take full responsibility for landing myself behind bars. Informant statements led to a wiretap, and 10,000 calls were recorded. I rarely spoke on the phone, but they caught me talking about personal use and many of my employees were referencing my name on the phone, which resulted in a conspiracy charge.

What did you make of the media dubbing your organization the “Evil Empire” when you were caught? Did you think it was a bit over the top?

My heart went “badum, badum, badum” when I saw the cover of the Phoenix New Times with a portrait of me as Nosferatu on it, which was where they called it the “Evil Empire.” And the cover also had four of my co-defendants, including Wild Man and my head of security, Cody, in the foreground, with my arms encircling them like some evil puppeteer. I couldn’t believe it.

Was this when you were locked up already?

It was before I’d been sentenced, and I was worried that there was going to be something in there that might damage my case. I read in there that the prosecutor had classified me as a serious drug offender likely to receive a life sentence, and I went into shock. I’d thought I was getting out, but I was now facing 25 years. If I’d got a life sentence I would have been 58 when I got out, basically at retirement age. But yeah, when I read the article, I felt like some arch-villain from the Marvel comics I collected as a child.

What was your time inside like?

Early on, I was with lots of people who were arrested with me, including my large and fearless best friend and raving partner from my hometown of Widnes, Wild Man, who the gangs respected for his fighting skills. He looked out for me. I was split up from my co-defendants after the first year, so then I had to rely on my people skills, Englishness, education, etc., etc.

You wrote blogs inside as well, right?

Yeah, that enabled me to make some powerful alliances with characters like T-Bone and Two Tonys, who was a Mafia mass murderer and was serving multiple life sentences. T-Bone was a deeply spiritual, massively built African American who towered over most inmates. He was a prison gladiator and covered in stab wounds. He was a good man to have on your side.

You’ve told me before about the trouble you had with the Aryan Brotherhood as well.

Yeah, all the way through my incarceration, I was trying to dodge Aryan Brotherhood predators. They run the white race in the prison system. You have to do what they say or else you get smashed or murdered.

So I take it racism featured quite heavily in the prison that you were in?

Yeah, it was completely racially segregated. The way it works there is that, as soon as you walk in, a soldier from your racial gang tells you the rules that are enforced by the head of each race. Disobedience means that you get smashed, shanked or murdered. The rules include stuff like not being able to sit with the other races at the dinner tables or exercise with the other races. But when it comes to drugs, the gangs all deal with each other regardless of race.

I bet there were some pretty nice characters in that environment.

God, it was full of scary people. In super maximum security, I lived next door to a serial killer and my first cell mate was a satanic priest with a pentagram tattooed on his head. He was in for murder and was part of a cult that was drinking blood and eating human body parts. Fortunately, he was quite nice to me.

That’s good. So what are you doing with your life now? Are you a reformed character?

Yeah, and I credit incarceration with sending my life in a whole new positive direction. I tell my story to schools across the UK and Europe to educate young people about the consequences of choosing the drugs lifestyle in the hope that they don’t make the same mistakes I did. The endless feedback that I get from students makes me feel that the talks are a better way of repaying my debt to society than the sentence I served.

Shaun has written two memoirs, Hard Time and Party Time.

http://www.vice.com/read/i-gave-up-stocks-to-throw-raves-and-sell-drugs

Thanks to SRW for bringing this to the attention of the It’s Interesting community.

Origin of the myth that we only use 10% of our brains

The human brain is complex. Along with performing millions of mundane acts, it composes concertos, issues manifestos and comes up with elegant solutions to equations. It’s the wellspring of all human feelings, behaviors and experiences, as well as the repository of memory and self-awareness. So it’s no surprise that the brain remains a mystery unto itself.

Adding to that mystery is the contention that humans “only” employ 10 percent of their brain. If only regular folk could tap that other 90 percent, they too could become savants who remember π to the twenty-thousandth decimal place or perhaps even have telekinetic powers.

Though an alluring idea, the “10 percent myth” is so wrong it is almost laughable, says neurologist Barry Gordon at Johns Hopkins School of Medicine in Baltimore. Although there’s no definitive culprit to pin the blame on for starting this legend, the notion has been linked to the American psychologist and author William James, who argued in The Energies of Men that “We are making use of only a small part of our possible mental and physical resources.” It’s also been associated with Albert Einstein, who supposedly used it to explain his cosmic towering intellect.

The myth’s durability, Gordon says, stems from people’s conceptions about their own brains: they see their own shortcomings as evidence of the existence of untapped gray matter. This is a false assumption. What is correct, however, is that at certain moments in anyone’s life, such as when we are simply at rest and thinking, we may be using only 10 percent of our brains.

“It turns out though, that we use virtually every part of the brain, and that [most of] the brain is active almost all the time,” Gordon adds. “Let’s put it this way: the brain represents three percent of the body’s weight and uses 20 percent of the body’s energy.”

The average human brain weighs about three pounds and comprises the hefty cerebrum, which is the largest portion and performs all higher cognitive functions; the cerebellum, responsible for motor functions, such as the coordination of movement and balance; and the brain stem, dedicated to involuntary functions like breathing. The majority of the energy consumed by the brain powers the rapid firing of millions of neurons communicating with each other. Scientists think it is such neuronal firing and connecting that gives rise to all of the brain’s higher functions. The rest of its energy is used for controlling other activities—both unconscious activities, such as heart rate, and conscious ones, such as driving a car.

Although it’s true that not all of the brain’s regions are firing concurrently at any given moment, brain researchers using imaging technology have shown that, like the body’s muscles, most are continually active over a 24-hour period. “Evidence would show over a day you use 100 percent of the brain,” says John Henley, a neurologist at the Mayo Clinic in Rochester, Minn. Even in sleep, areas such as the frontal cortex, which controls things like higher level thinking and self-awareness, or the somatosensory areas, which help people sense their surroundings, are active, Henley explains.

Take the simple act of pouring coffee in the morning: In walking toward the coffeepot, reaching for it, pouring the brew into the mug, even leaving extra room for cream, the occipital and parietal lobes, motor sensory and sensory motor cortices, basal ganglia, cerebellum and frontal lobes all activate. A lightning storm of neuronal activity occurs almost across the entire brain in the time span of a few seconds.

“This isn’t to say that if the brain were damaged that you wouldn’t be able to perform daily duties,” Henley continues. “There are people who have injured their brains or had parts of it removed who still live fairly normal lives, but that is because the brain has a way of compensating and making sure that what’s left takes over the activity.”

Being able to map the brain’s various regions and functions is part and parcel of understanding the possible side effects should a given region begin to fail. Experts know that neurons that perform similar functions tend to cluster together. For example, neurons that control the thumb’s movement are arranged next to those that control the forefinger. Thus, when undertaking brain surgery, neurosurgeons carefully avoid neural clusters related to vision, hearing and movement, enabling the brain to retain as many of its functions as possible.

What’s not understood is how clusters of neurons from the diverse regions of the brain collaborate to form consciousness. So far, there’s no evidence that there is one site for consciousness, which leads experts to believe that it is truly a collective neural effort. Another mystery hidden within our crinkled cortices is that out of all the brain’s cells, only 10 percent are neurons; the other 90 percent are glial cells, which encapsulate and support neurons, but whose function remains largely unknown. Ultimately, it’s not that we use 10 percent of our brains, merely that we only understand about 10 percent of how it functions.

http://www.scientificamerican.com/article.cfm?id=people-only-use-10-percent-of-brain&page=2

Thanks to SRW for bringing this to the attention of the It’s Interesting community.

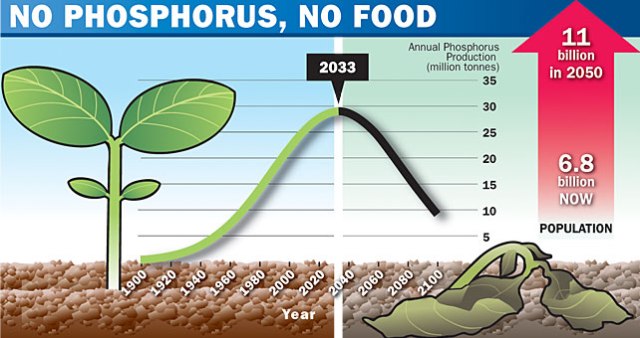

Peak Phosphorus and Food Production

Jeremy Grantham thinks the number of people on Earth has finally and permanently outstripped the planet’s ability to support us.

Grantham believes that the planet can only sustainably support about 1.5 billion humans, versus the 7 billion on Earth right now (heading to 10-12 billion).

Basically, Grantham thinks most of us are going to starve to death.

Why?

In part because we’re churning through a finite supply of something that is critical to our ability to produce food: Phosphorus.

Phosphorus is a critical ingredient of fertilizer, and there is a finite supply of it. The consensus is that we will hit “peak phosphorus” production within a few decades, after which point our phosphorus supply will inexorably decline. As it declines, we will be unable to feed ourselves. And you know the rest.

Of course, ever since Malthus, a steady stream of doomsayers has predicted a ghastly end to the human population explosion–and, so far, they’ve all been wrong.

So why is a man of Grantham’s intelligence adding his voice to this chorus?

And how real is this threat? Are we all going to starve?

Humans have been around for a while. But for most of our existence, our population was small and stable. Then it exploded.

Most of this explosion has come in the past 200 years–just as Malthus predicted. What Malthus did not foresee was the discovery of oil, commercial fertilizer, and other resources, which have (temporarily) supported this population explosion.

For the past 100 years, technology has made these resources easier to extract and produce, which has made them ever cheaper. Grantham thinks that trend has now permanently ended.

Take oil, for example. Oil traded at about $16 a barrel for a century. Then, as demand outstripped supply, the “normal” price increased to ~$35 a barrel. Now, Grantham thinks “normal” is about $75 a barrel.

Why are oil prices rising? Because oil demand is now growing far faster than oil supply. The world’s oil production has barely increased since the 1970s, while oil usage has exploded.

Demand is exceeding supply for other commodities, too. Like metals. Here’s a hundred-year look at the prices of iron ore.

But the real problem is food.

Over the past century, the world has produced ever more food from the same (relatively) finite supply of arable land. For example, this chart shows global wheat production in the past 50 years. The blue line is farmland. The yellow line is total wheat production. The pink line is “yield per hectare.” Production is rising because yield is increasing.

Why are crop yields increasing? Fertilizer.

In the past half-century, we have used an ever-increasing amount of fertilizer. Not just in total, but per acre. This chart, for example, shows the number of tons of fertilizer used per square kilometer of farmland.

And this leads us to the first problem. Forty years ago, the average growth rate of crop yields per acre was an impressive 3.5% per year. This was comfortably ahead of the growth rate of global population. In recent years, however, the growth in crop yields per acre has dropped to about 1.5%. That’s dangerously close to the growth of population.

That brings us to the second problem. We don’t have an infinite supply of fertilizer.

For most of human history, we used “natural fertilizer” (poop). But then we started making more powerful stuff.

Commercial fertilizer requires, among other ingredients, potassium and phosphorus. There are finite quantities of both. Phosphorus, especially, is in short supply.

Phosphorus (P) is essential for life. Plants absorb it from fertilized soil, and then animals absorb it when they eat plants (and each other). When the plants and animals excrete waste or die, the phosphorus returns to the environment. Eventually, given enough time, it gets compressed into rock at the bottom of the ocean.

Phosphate is a critical ingredient of fertilizer, and there is no substitute for it (because plants are partially made from it). This photo shows the difference between corn fertilized with phosphorus (background) and corn without.

Most of the phosphate we use in commercial fertilizer comes from phosphate rock, which was once sediment at the bottom of the ocean. This mine is located in Togo.

In the past ~120 years, we have become completely dependent on phosphate rock for phosphorus used in commercial fertilizer. Before that, our phosphate came from manure.

As the human population grows, and emerging markets get richer and need more food and animal feed, we’re consuming more and more phosphorus (red line).

The amount of phosphate rock we use, therefore, continues to climb.

The trouble is that there isn’t an infinite amount of phosphate rock. Estimates differ on the amount of reserves available in the world, but they’re not unlimited. Some scientists think we have enough to last hundreds of years. Others, however, are far less optimistic.

The consensus of many scientists is that we will hit “peak phosphorus” production in about 2030. After that, phosphorus production is expected to decline.

As phosphorus production drops, crop yields will drop. And then, the concern is, we won’t be able to grow enough food to feed ourselves.

So is that it? Are we screwed?

Not necessarily. It turns out that our urine and feces contain a lot of phosphorus–which is why they make good fertilizer. If we got serious about recycling our bio-waste, we could reduce our need for phosphate rock.

But although conservation and recycling will help, they won’t fix the problem, because a huge amount of phosphorus will still be lost to runoff. Phosphate that isn’t consumed by plants leaches out of the soil into rivers and then to the ocean.

So, eventually, the finite supply of usable phosphorus could be a big problem.

Jeremy Grantham, by the way, thinks the finite supply of fertilizer and limits of crop yields are starting to affect food prices. Soybean prices, for example, have jumped in the last 10 years.

So have corn prices.

And wheat prices.

So, why is all this happening now, when the global population has been exploding for two centuries? The answer, in part, is the spectacular growth of China, India, and other massive countries. The resource usage of these countries is mind-boggling. Here, for example, are Grantham’s estimates of the share of world consumption of various resources accounted for by China alone.

Common sense will tell you that finite resources can’t support infinite growth. And another look at the “growth curve” of human population shows why it might be silly to dismiss Malthus et al. as “obviously wrong.” (Maybe they were just early.)

But here’s hoping science and ingenuity help us find a way to fix the problem.

http://www.businessinsider.com/peak-phosphorus-and-food-production-2012-12?op=1

Anti-computer virus software pioneer John McAfee wanted for murder in Belize

Police in Belize want to question U.S. anti-computer virus software pioneer John McAfee in connection with the murder of a neighbor he had been quarrelling with, but they say he remains a person of interest at this time and is not a suspect.

McAfee, who invented the anti-virus software that bears his name, has homes and businesses in Belize, and is believed to have settled in the country sometime around 2010.

“He is a person of interest at this time,” said Marco Vidal, head of Belize’s police Gang Suppression Unit. “It goes a bit beyond that, not just being a neighbor.”

Police officers were looking for the software engineer, said Miguel Segura, the assistant commissioner of police.

Asked if McAfee was a suspect, he said: “At this point, no. Our job … is to get all the evidence beyond reasonable doubt that Mr A is the one that killed Mr B.”

“He (McAfee) … can assist the investigation, so there is no arrest warrant for the fellow,” added Segura, who heads the Criminal Investigation Branch.

McAfee’s neighbor, Gregory Viant Faull, a 52-year-old American, was found on Sunday lying dead in a pool of blood after apparently being shot in the head.

McAfee has been embroiled in controversy in Belize before.

His premises were raided in May after he was accused of holding firearms, though most were found to be licensed. The final outcome of the case is pending.

McAfee also owns a security company in Belize as well as several properties and an ecological enterprise.

Reuters was unable to contact McAfee on Monday.

Segura said McAfee had been at odds with Faull for some time. McAfee accused his neighbor of poisoning his dogs earlier this year and filed an official complaint.