With the pressure for a certain body type prevalent in the media, eating disorders are on the rise. But these diseases are not completely socially driven; researchers have uncovered important genetic and biological components as well and are now beginning to tease out the genes and pathways responsible for eating disorder predisposition and pathology.

As we enter the holiday season, shoppers will once again rush into crowded department stores searching for the perfect gift. They will be jostled and bumped, yet for the most part, remain cheerful because of the crisp air, lights, decorations, and the sound of Karen Carpenter’s contralto voice ringing out familiar carols.

While Carpenter is mainly remembered for her musical talents, she is unfortunately also known for introducing the world to anorexia nervosa (AN), a severe, life-threatening mental illness characterized by altered body image and stringent eating patterns. The disease claimed her life in 1983, just before her 33rd birthday.

Even though eating disorders (EDs) carry one of the highest mortality rates of any mental illness, many researchers and clinicians still view them as socially reinforced behaviors and diagnose them based on criteria such as “inability to maintain body weight,” “undue influence of body weight or shape on self-evaluation,” and “denial of the seriousness of low body weight” (1). This way of thinking was prevalent when Michael Lutter, then an MD/PhD student at the University of Texas Southwestern Medical Center, began his psychiatry residency in an eating disorders unit. “I just remember the intense fear of eating that many patients exhibited and thought that it had to be biologically driven,” he said.

Lutter carried this impression with him when he established his own research laboratory at the University of Iowa. Although clear evidence supports the idea that EDs are biologically driven (they predominantly affect women and significantly alter energy homeostasis), a lack of well-defined animal models, combined with the view that EDs are mainly behavioral abnormalities, has hindered studies of their neurobiology. Still, Lutter is determined to find the biological roots of the disease and tease out the relationship between the psychiatric illness and metabolic disturbance using biochemistry, neuroscience, and human genetics approaches.

We’ve Only Just Begun

Like many diseases, EDs result from complex interactions between genes and environmental risk factors. They tend to run in families, but of course, for many family members, genetics and environment are similar enough that teasing apart the influences of nature and nurture is not easy. Researchers estimate that 50-80% of the predisposition for developing an ED is genetic, but preliminary genome-wide analyses and candidate gene studies failed to identify specific genes that contribute to the risk.

According to Lutter, finding ED study participants can be difficult. “People are either reluctant to participate, or they don’t see that they have a problem,” he reported. Set on finding the genetic underpinnings of EDs, his team began recruiting volunteers and found two families: one with 20 members, 10 of whom had an ED, and another with 5 of 8 members affected. Rather than doing large-scale linkage and association studies, the team decided to characterize rare single-gene mutations in these families. In 2013, this approach led them to identify mutations in the first two genes clearly associated with ED predisposition, estrogen-related receptor α (ESRRA) and histone deacetylase 4 (HDAC4) (2).

“We have larger genetic studies on-going, including the collection of more families. We just happened to publish these two families first because we were able to collect enough individuals and because there is a biological connection between the two genes that we identified,” Lutter explained.

ESRRA appears to be a transcription factor upregulated by exercise and calorie restriction that plays a role in energy balance and metabolism. HDAC4, on the other hand, is a well-described histone deacetylase that has previously been implicated in locomotor activity, body weight homeostasis, and neuronal plasticity.

Using immunoprecipitation, the researchers found that ESRRA interacts with HDAC4, in both the wild type and mutant forms, and transcription assays showed that HDAC4 represses ESRRA activity. When Lutter’s team repeated the transcription assays using mutant forms of the proteins, they found that the ESRRA mutation seen in one family significantly reduced the induction of target gene transcription compared to wild type, and that the mutation in HDAC4 found in the other family increased transcriptional repression for ESRRA target genes.

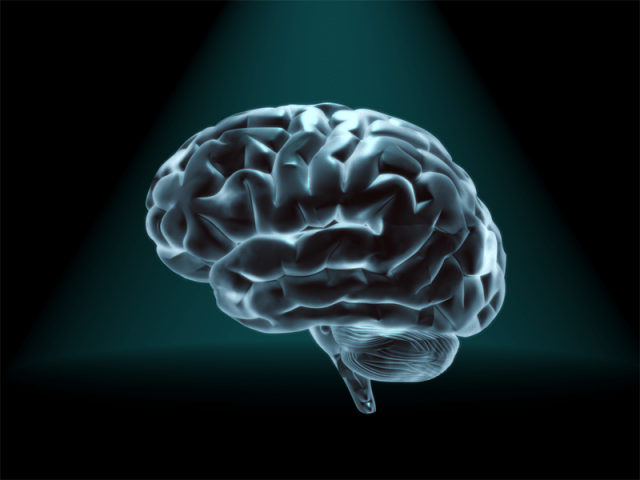

“ESRRA is a well known regulator of mitochondrial function, and there is an emerging view that mitochondria in the synapse are critical for neurotransmission,” Lutter said. “We are working on identifying target pathways now.”

Bless the Beasts and the Children

Finding genes associated with EDs provides the groundwork for molecular studies, but EDs cannot be completely explained by the actions of altered transcription factors. Individuals suffering these disorders often experience intense anxiety, intrusive thoughts, hyperactivity, and poor coping strategies that lead to rigid and ritualized behaviors and severe crippling perfectionism. They are less aware of their emotions and often try to avoid emotion altogether. To study these complex behaviors, researchers need animal models.

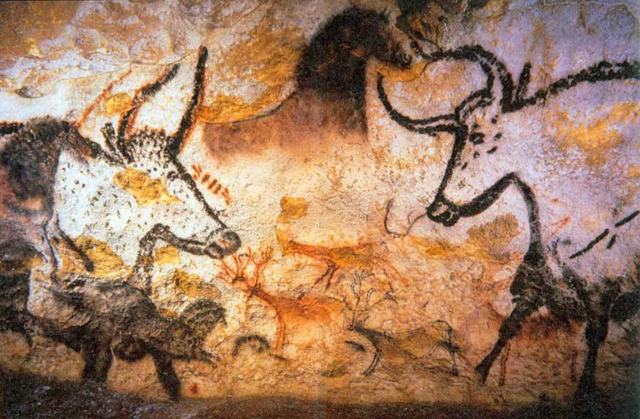

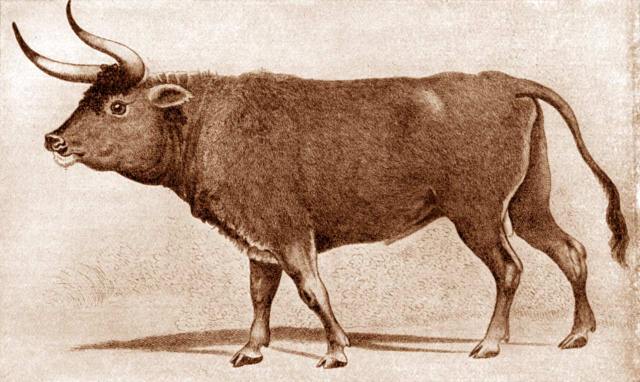

Until recently, scientists relied on mice with access to a running wheel and restricted access to food. Under these conditions, the animals quickly increase their locomotor activity and reduce eating, frequently resulting in death. While some characteristics of EDs—excessive exercise and avoiding food—can be studied in these mice, the model doesn’t allow researchers to explore how the disease actually develops. However, Lutter’s team has now introduced a promising new model (3).

Based on their previous success with identifying the involvement of ESRRA and HDAC4 in EDs, the researchers wondered if mice lacking ESRRA might make suitable models for studies on ED development. To find out, they first performed immunohistochemistry to understand more about the potential cognitive role of ESRRA.

“ESRRA is not expressed very abundantly in areas of the brain typically implicated in the regulation of food intake, which surprised us,” Lutter said. “It is expressed in many cortical regions that have been implicated in the etiology of EDs by brain imaging like the prefrontal cortex, orbitofrontal cortex, and insula. We think that it probably affects the activity of neurons that modulate food intake instead of directly affecting a core feeding circuit.”

With these data, the team next tried providing only 60% of the normal daily calories to their mice for 10 days and looked again at ESRRA expression. Interestingly, ESRRA levels increased significantly when the mice were insufficiently fed, indicating that the protein might be involved in the response to energy balance.

Lutter now believes that upregulation of ESRRA helps organisms adapt to calorie restriction, an effect possibly not happening in those with ESRRA or HDAC4 mutations. “This makes sense for the clinical situation where most individuals will be doing fine until they are challenged by something like a diet or heavy exercise for a sporting event. Once they start losing weight, they don’t adapt their behaviors to increase calorie intake and rapidly spiral into a cycle of greater and greater weight loss.”

When Lutter’s team obtained mice lacking ESRRA, they found that these animals were 15% smaller than their wild type littermates and put forth less effort to obtain food, both when fed restricted calorie diets and when they had free access to food. These phenotypes were more pronounced in female mice than in male mice, likely due to the role of estrogen signaling. Loss of ESRRA increased grooming behavior and obsessive marble burying, and made mice slower to abandon an escape hole after its relocation, indicating behavioral rigidity. The mice also demonstrated impaired social functioning and reduced locomotion.

Some people with AN exercise extensively, but this isn’t seen in all cases. “I would say it is controversial whether or not hyperactivity is due to a genetic predisposition (trait), secondary to starvations (state), or simply a ritual that develops to counter the anxiety of weight related obsessions. Our data would suggest that it is not due to genetic predisposition,” Lutter explained. “But I would caution against over-interpretation of mouse behavior. The locomotor activity of mice is very different from people and it’s not clear that you can directly translate the results.”

For All We Know

Going forward, Lutter’s group plans to drill down into the behavioral phenotypes seen in their ESRRA null mice. They are currently deleting ESRRA from different neuronal cell types to pair individual neurons with the behaviors they mediate in the hope of working out the neural circuits involved in ED development and pathology.

In addition, the team has created a mouse line carrying one of the HDAC4 mutations previously identified in their genetic study. So far, this mouse “has interesting parallels to the ESRRA-null mouse line,” Lutter reported.

The team continues to recruit volunteers for larger-scale genetic studies. Eventually, they plan to perform RNA-seq to identify the targets of ESRRA and HDAC4 and look into their roles in mitochondrial biogenesis in neurons. Lutter suspects that this process is a key target of ESRRA and could shed light on the cognitive differences, such as altered body image, seen in EDs. In the end, a better understanding of the cells and pathways involved with EDs could create new treatment options, reduce suffering, and maybe even avoid the premature loss of talented individuals to the effects of these disorders.

References

1. Lutter M, Croghan AE, Cui H. Escaping the Golden Cage: Animal Models of Eating Disorders in the Post-Diagnostic and Statistical Manual Era. Biol Psychiatry. 2015 Feb 12.

2. Cui H, Moore J, Ashimi SS, Mason BL, Drawbridge JN, Han S, Hing B, Matthews A, McAdams CJ, Darbro BW, Pieper AA, Waller DA, Xing C, Lutter M. Eating disorder predisposition is associated with ESRRA and HDAC4 mutations. J Clin Invest. 2013 Nov;123(11):4706-13.

3. Cui H, Lu Y, Khan MZ, Anderson RM, McDaniel L, Wilson HE, Yin TC, Radley JJ, Pieper AA, Lutter M. Behavioral disturbances in estrogen-related receptor alpha-null mice. Cell Rep. 2015 Apr 21;11(3):344-50.

http://www.biotechniques.com/news/Exploring-the-Biology-of-Eating-Disorders/biotechniques-361522.html