Frank Swain has been going deaf since his 20s. Now he has hacked his hearing so he can listen in to the data that surrounds us.

I am walking through my north London neighbourhood on an unseasonably warm day in late autumn. I can hear birds tweeting in the trees, traffic prowling the back roads, children playing in gardens and Wi-Fi leaching from their homes. Against the familiar sounds of suburban life, the sound of Wi-Fi is somehow incongruous and appropriate at the same time.

As I approach Turnpike Lane tube station and descend to the underground platform, I catch the now familiar gurgle of the public Wi-Fi hub, as well as the staff network beside it. On board the train, these sounds fade into silence as we burrow into the tunnels leading to central London.

I have been able to hear these fields since last week. This wasn’t the result of a sudden mutation or years of transcendental meditation, but an upgrade to my hearing aids. With a grant from Nesta, the UK innovation charity, sound artist Daniel Jones and I built Phantom Terrains, an experimental tool for making Wi-Fi fields audible.

Our modern world is suffused with data. Since radio towers began climbing over towns and cities in the early 20th century, the air has grown thick with wireless communication, the platform on which radio, television, cellphones, satellite broadcasts, Wi-Fi, GPS, remote controls and hundreds of other technologies rely. And yet, despite wireless communication becoming a ubiquitous presence in modern life, the underlying infrastructure has remained largely invisible.

Every day, we use it to read the news, chat to friends, navigate through cities, post photos to our social networks and call for help. These systems make up a huge and integral part of our lives, but the signals that support them remain intangible. If you have ever wandered in circles to find a signal for your cellphone, you will know what I mean.

Phantom Terrains opens the door to this world, at least a little, by tuning into these fields. Running on a hacked iPhone, the software exploits the inbuilt Wi-Fi sensor to pick up details about nearby fields: router name, signal strength, encryption and distance. This wasn’t easy. Reams of cryptic variables and numerical values had to be decoded by changing the settings of our test router and observing the effects.

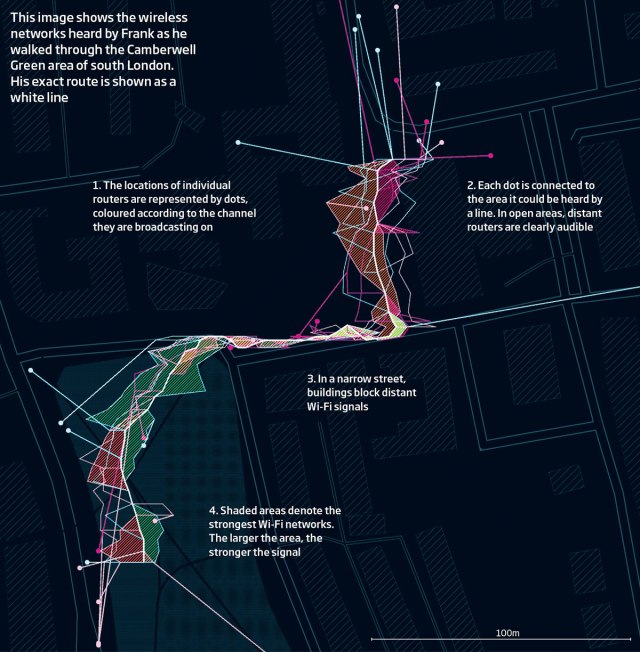

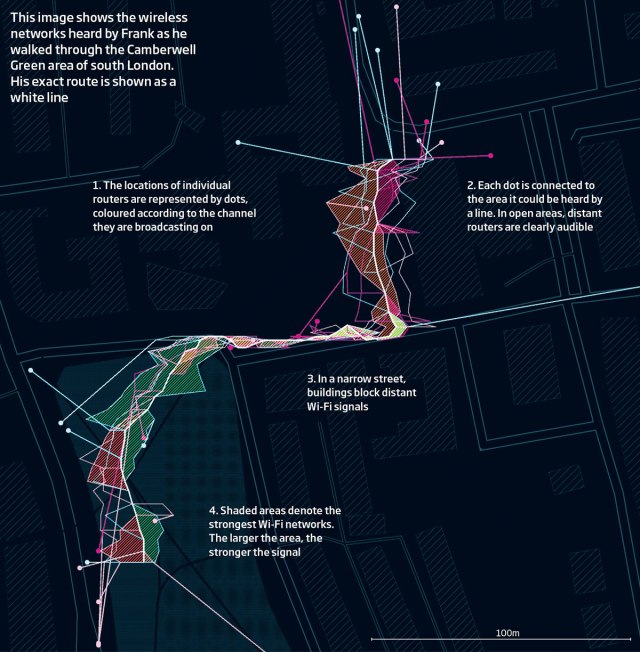

“On a busy street, we may see over a hundred independent wireless access points within signal range,” says Jones. Each access point’s signal strength, direction, name and security level are translated into an audio stream made up of a foreground and a background layer: distant signals click and pop like hits on a Geiger counter, while the strongest bleat their network ID in a looped melody. This audio is streamed constantly to a pair of hearing aids donated by US developer Starkey. The extra sound layer is blended with the normal output of the hearing aids; it simply becomes part of my soundscape. So long as I carry my phone with me, I will always be able to hear Wi-Fi.
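To give a feel for that two-layer mapping, here is a minimal sketch in Python. It is not the Phantom Terrains code. The `AccessPoint` type, the linear click-rate mapping, the dBm range and the pentatonic scale are all assumptions made for illustration; only the split between a melodic foreground for the strongest network and Geiger-style clicks for the rest comes from the description above.

```python
from dataclasses import dataclass


@dataclass
class AccessPoint:
    """One scan result. Field names are hypothetical, chosen for this sketch."""
    ssid: str         # network name
    rssi_dbm: float   # received signal strength, roughly -30 (strong) to -90 (weak)
    secured: bool     # whether the network is encrypted (carried along, not sonified here)


def clicks_per_second(rssi_dbm, weakest=-90.0, strongest=-30.0, max_rate=20.0):
    """Map signal strength linearly onto a click rate (assumed mapping)."""
    clamped = max(weakest, min(strongest, rssi_dbm))
    return max_rate * (clamped - weakest) / (strongest - weakest)


def ssid_to_melody(ssid, scale=(0, 2, 4, 7, 9), base_midi=60):
    """Turn each character of a network name into a MIDI note number
    on a pentatonic scale (assumed mapping)."""
    return [base_midi + scale[ord(ch) % len(scale)] for ch in ssid]


def sonify(access_points):
    """Return (foreground melody, background click rates keyed by SSID).

    The strongest access point supplies the looped melody; every other
    access point becomes a background click stream.
    """
    if not access_points:
        return None, {}
    ranked = sorted(access_points, key=lambda ap: ap.rssi_dbm, reverse=True)
    foreground = ranked[0]
    background = {ap.ssid: clicks_per_second(ap.rssi_dbm) for ap in ranked[1:]}
    return ssid_to_melody(foreground.ssid), background
```

Calling `sonify([AccessPoint("home", -40.0, True), AccessPoint("cafe", -75.0, False)])` would hand back a melody derived from "home" and a slow click rate for "cafe". A real system would then need an audio engine to render both layers, which this sketch leaves out.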

Silent soundscape

From the roar of Oxford Circus, I make my way into the close silence of an anechoic booth on Harley Street. I have been spending a lot of time in these since 2012, when I was first diagnosed with hearing loss. I have been going deaf since my 20s, and two years ago I was fitted with hearing aids, which instantly brought a world of missing sound back to my ears, although it took a little longer for my brain to make sense of it.

Recreating hearing is an incredibly difficult task. Unlike glasses, which simply bring the world into focus, digital hearing aids strive to recreate the soundscape, amplifying useful sound and suppressing noise. As this changes by the second, sorting one from the other requires a lot of programming.

In essence, I am listening to a computer’s interpretation of the soundscape, heavily tailored to what it thinks I need to hear. I am intrigued to see how far this editorialisation of my hearing can be pushed. If I have to spend my life listening to an interpretative version of the world, what elements could I add? The data that surrounds me seems a good place to start.

Mapping digital fields isn’t a new idea. Timo Arnall’s Light Painting Wi-Fi saw the artist and his collaborators build a rod of LEDs that lit up when exposed to digital signals, and carried it through the city at night. Captured in long exposure photographs, the topographies of wireless networks appear as a ghostly blue ribbon that waxes and wanes to the strength of nearby signals, revealing the digital landscape.

“Just as the architecture of nearby buildings gives insight to their origin and purpose, we can begin to understand the social world by examining the network landscape,” says Jones. For example, by reading the hardware address transmitted with the Wi-Fi signal, the Phantom Terrains software can trace a router’s origin. We found that residential areas were full of low-security routers, whereas commercial districts had highly encrypted routers and higher bandwidth.
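One plausible way to trace a router from its hardware address, sketched here in Python, relies on the fact that the first three bytes of a MAC address form a prefix the IEEE assigns to a manufacturer. Whether Phantom Terrains works this way is an assumption. The function names are hypothetical, and the lookup table holds a single placeholder entry rather than real registry data.

```python
import re

# Placeholder table for illustration only. A real tool would load the
# IEEE's public registry of manufacturer prefixes instead.
EXAMPLE_OUI_TABLE = {
    "00:11:22": "Example Router Co. (placeholder)",
}


def oui_prefix(mac):
    """Return the first three bytes of a MAC address, normalised to AA:BB:CC."""
    digits = re.sub(r"[^0-9A-Fa-f]", "", mac)
    if len(digits) != 12:
        raise ValueError(f"not a 48-bit MAC address: {mac!r}")
    digits = digits.upper()
    return ":".join(digits[i:i + 2] for i in range(0, 6, 2))


def manufacturer(mac, table=EXAMPLE_OUI_TABLE):
    """Look up the manufacturer prefix, or report 'unknown' if it is not in the table."""
    return table.get(oui_prefix(mac), "unknown")
```

The normalisation step matters because scanners report addresses in different styles, with colons, hyphens or no separators at all.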

Despite the information gathered, most people would balk at the idea of being forced to listen to the hum and crackle of invisible fields all day. How long I will tolerate the additional noise in my soundscape remains to be seen. But there is more to the project than a critique of digital transparency.

With the advent of the internet of things, our material world is becoming ever more draped in sensors, and it is important to think about how we might make sense of all this information. Hearing is a fantastic platform for interpreting dynamic, continuous, broad-spectrum data.

Its use in this way is being aided by a revolution in hearing technology. The latest models, such as the Halo brand used in our project and ReSound’s Linx, boast a specialised low-energy Bluetooth function that can link to compatible gadgets. This has a host of immediate advantages, such as allowing people to fine-tune their hearing aids using a smartphone as an interface. More crucially, the continuous connectivity elevates hearing aids to something similar to Google Glass – an always-on, networked tool that can seamlessly stream data and audio into your world.

Already, we are talking to our computers more, using voice-activated virtual assistants such as Apple’s Siri, Microsoft’s Cortana and OK Google. Always-on headphones that talk back, whispering into our ear like discreet advisers, might well catch on ahead of Google Glass.

“The biggest challenge is human,” says Jones. “How can we create an auditory representation that is sufficiently sophisticated to express the richness and complexity of an ever-changing network infrastructure, yet unobtrusive enough to be overlaid on our normal sensory experience without being a distraction?”

Only time will tell if we have succeeded in this respect. If we have, it will be a further step towards breaking computers out of the glass-fronted box they have been trapped inside for the last 50 years.

Auditory interfaces also prompt a rethink about how we investigate data and communicate those findings, setting aside the precise and discrete nature of visual presentation in favour of complex, overlapping forms. Instead of boiling the stock market down to the movement of one index or another, for example, we could one day listen to the churning mass of numbers in real time, our ears attuned for discordant melodies.

In Harley Street, the audiologist shows me the graphical results of my tests. What should be a wide blue swathe – good hearing across all volume levels and sound frequencies – narrows sharply, permanently, at one end.

There is currently no treatment that can widen this channel, but assistive hearing technology can tweak the volume and pitch of my soundscape to pack more sound into the space available. It’s not much to work with, but I’m hoping I can inject even more into this narrow strait, to hear things in this world that nobody else can.

http://www.newscientist.com/article/mg22429952.300-the-man-who-can-hear-wifi-wherever-he-walks.html?full=true