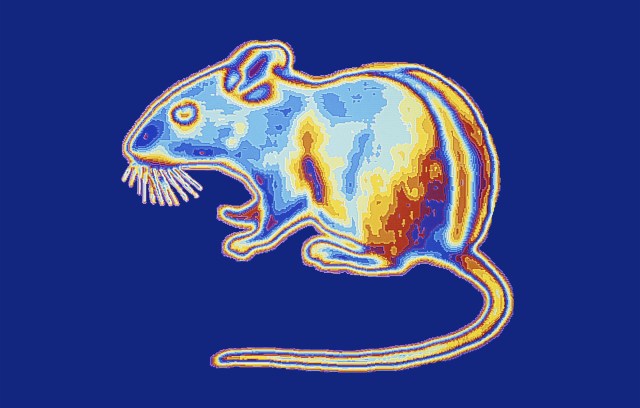

A new design for an artificial eyeball (illustrated) could someday give keen eyesight to androids, or be used as a high-tech prosthetic.

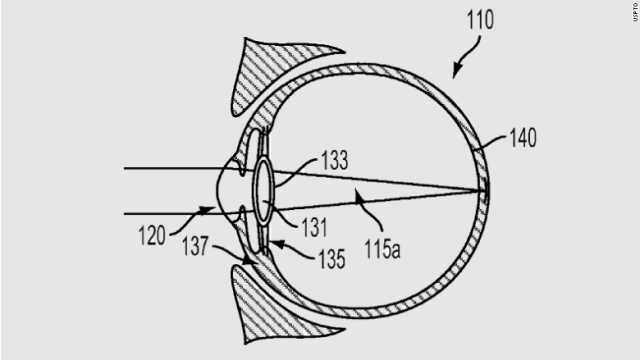

The design for a new artificial eye (illustrated) is based on the structure of the human eye. At the back of the eyeball, a synthetic retina is embedded with nanoscale light sensors. Those sensors measure light that passes through the lens at the front of the eye. Wires attached to the back of the retina ferry signals from those sensors to external circuitry for processing, similar to the way nerve fibers connect the eyeball to the brain.

By Maria Temming

Scientists can’t yet rebuild someone with bionic body parts. They don’t have the technology. But a new artificial eye brings cyborgs one step closer to reality.

This device, which mimics the human eye’s structure, is about as sensitive to light as a real eyeball and has a faster reaction time. It may not come with the telescopic or night vision capabilities that Steve Austin had in The Six Million Dollar Man television show, but this electronic eyepiece does have the potential for sharper vision than human eyes, researchers report in the May 21 Nature.

“In the future, we can use this for better vision prostheses and humanoid robotics,” says engineer and materials scientist Zhiyong Fan of the Hong Kong University of Science and Technology.

The human eye owes its wide field of view and high-resolution eyesight to the dome-shaped retina — an area at the back of the eyeball covered in light-detecting cells. Fan and colleagues used a curved aluminum oxide membrane, studded with nanosize sensors made of a light-sensitive material called a perovskite (SN: 7/26/17), to mimic that architecture in their synthetic eyeball. Wires attached to the artificial retina send readouts from those sensors to external circuitry for processing, just as nerve fibers relay signals from a real eyeball to the brain.

The artificial eyeball registers changes in lighting faster than human eyes can — within about 30 to 40 milliseconds, rather than 40 to 150 milliseconds. The device can also see dim light about as well as the human eye. Although its 100-degree field of view isn’t as broad as the 150 degrees a human eye can take in, it’s better than the 70 degrees visible to ordinary flat imaging sensors.

In theory, this synthetic eye could achieve a much higher resolution than the human eye, because the artificial retina contains about 460 million light sensors per square centimeter, while a real retina has about 10 million light-detecting cells per square centimeter. But reaching that resolution would require separate readings from each sensor. In the current setup, each wire plugged into the synthetic retina is about one millimeter thick, so big that it touches many sensors at once. Only 100 such wires fit across the back of the retina, creating images that have just 100 pixels.
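To put those figures in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers quoted above, plus one assumption that is not from the study: that each wire touches the retina over a flat, circular footprint as wide as the wire itself. The function names are made up for illustration.

```python
import math

# Figures quoted in the article
ARTIFICIAL_SENSORS_PER_CM2 = 460e6   # nanoscale light sensors in the synthetic retina
HUMAN_CELLS_PER_CM2 = 10e6           # light-detecting cells in a real retina
WIRE_DIAMETER_MM = 1.0               # thickness of each readout wire


def density_ratio(artificial_per_cm2, human_per_cm2):
    """How many times denser the artificial retina's sensors are."""
    return artificial_per_cm2 / human_per_cm2


def sensors_under_wire(wire_diameter_mm, sensors_per_cm2):
    """Estimate the number of sensors covered by one wire.

    ASSUMPTION (not from the study): the wire contacts the retina over a
    flat circular footprint whose diameter equals the wire's thickness.
    """
    radius_cm = (wire_diameter_mm / 10.0) / 2.0   # mm -> cm, then halve
    footprint_cm2 = math.pi * radius_cm ** 2
    return footprint_cm2 * sensors_per_cm2


if __name__ == "__main__":
    ratio = density_ratio(ARTIFICIAL_SENSORS_PER_CM2, HUMAN_CELLS_PER_CM2)
    covered = sensors_under_wire(WIRE_DIAMETER_MM, ARTIFICIAL_SENSORS_PER_CM2)
    print(f"Sensor density vs. human retina: about {ratio:.0f}x")
    print(f"Sensors under one 1-mm wire: about {covered:,.0f}")
```

Under that assumption, each millimeter-wide wire would sit on top of a few million sensors and report them all as a single pixel, which is why the sensor density alone does not yet translate into sharper images.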

To show that thinner wires could be connected to the artificial eyeball for higher resolution, Fan’s team used a magnetic field to attach a small array of metal needles, each 20 to 100 micrometers thick, to nanosensors on the synthetic retina one by one. “It’s like a surgical operation,” Fan says.

The researchers’ current method of creating individual ultrasmall pixels is impractical, says Hongrui Jiang, an electrical engineer at the University of Wisconsin–Madison whose commentary on the study appears in the same issue of Nature. “For a few hundred nanowires, okay, fine, but how about millions?” Engineers will need a much more efficient way to manufacture vast arrays of tiny wires on the back of the artificial eyeball to give it superhuman sight, he says.