By Dr. Lucy Justice

I can remember being a baby. I recall being in a vast room inside a doctor’s surgery. I was passed to a nurse and then placed in cold metal scales to be weighed. I was always aware that this memory was unusual because it was from so early in my life, but I thought that perhaps I just had a really good memory, or that perhaps other people could remember being so young, too.

What is the earliest event that you can remember? How old do you think you are in this memory? How do you experience the memory? Is it vivid or vague? Positive or negative? Are you re-experiencing the memory as it originally happened, through your own eyes, or are you watching yourself “acting” in the memory?

In our recent study, we asked more than 6,000 people of all ages to do the same: to tell us what their first autobiographical memory was and how old they were when the event happened, to rate how emotional and vivid it was, and to report what perspective the memory was “seen” from. We found that, on average, people dated their first memory to the first half of their fourth year of life (age 3.24 years, to be precise). This matches well with other studies that have investigated the age of early memories.

What does this mean for my memory of being a baby, then? Perhaps I do just have a really good memory and can remember those early months of life. Indeed, in our study, we found that around 40% of participants reported remembering events from the age of two or below – and 14% of people recalled memories from age one and below. However, psychological research suggests that memories dating from before the age of three are highly unusual – and indeed, highly improbable.

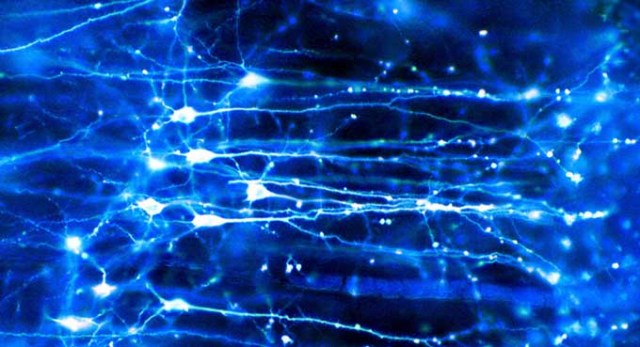

The origin of memory

Researchers who have investigated memory development suggest that the neurological processes needed to form autobiographical memories are not fully developed until between the ages of three and four years. Other research has suggested that memories are linked to language development. Language allows children to share and discuss the past with others, enabling memories to be organised in a personal autobiography.

So how can I remember being a baby? And why did 2,487 people from our study remember events that they dated from the age of two years and younger?

One explanation is that people simply gave incorrect estimates of their age in the memory. After all, unless confirmatory evidence is present, guesswork is all we have when it comes to dating memories from across our lives, including the very earliest.

But if incorrect dating explained the presence of these memories, we would expect them to be about the same kinds of events as memories from ages three and above. This was not the case: we found that very early reported memories were of events and objects from infancy (pram, cot, learning to walk), whereas later memories were of things typical of childhood (toys, school, holidays). The two groups of memories were therefore qualitatively different, which ruled out the misdating explanation.

If research tells us that these very early memories are highly unlikely, and we have ruled out a misdating explanation, then why do people, including me, have them?

Pure fiction?

We concluded that these memories are likely to be fictional – that is, that the remembered events were never actually experienced as recalled. Perhaps, rather than recalling an experienced event, we recall imagery derived from photographs, home movies, shared family stories, or events and activities that frequently happen in infancy. These facts are then, we suggest, linked with some fragmentary visual imagery and combined to form the basis of these fictitious early memories. Over time, this combination of imagery and fact begins to be experienced as a memory.

Although around 40% of participants in our study retrieved such fictitious memories, this is not altogether surprising. Contemporary theories of memory highlight the constructive nature of memory; memories are not “records” of events, but rather psychological representations of the self in the past.

In other words, all of our memories contain some degree of fiction – indeed, this is the sign of a healthy memory system in action. But perhaps, for reasons not yet known, we have a psychological need to fictionalise memories from times of our lives that we are unable to remember. For now, these “stories” remain a mystery.

https://theconversation.com/what-is-your-first-memory-and-did-it-ever-really-happen-95953