Key takeaways:

- New data revealed that health insurance coverage, internet access and income level can influence suicide risk.

- PCPs should create a comfortable environment to address these factors and reduce suicide risk.

By addressing factors like health insurance coverage, internet access and income level, primary care providers can play an important role in suicide prevention, according to experts.

“September is Suicide Prevention Month and today is World Suicide Prevention Day, a day where we raise awareness and attention to this issue, emphasizing the message that suicide is preventable,” Debra Houry, MD, MPH, CDC’s Chief Medical Officer, said in a media briefing on Sept. 10. “Suicide rates have increased over the last 20 years and remain high: more than 49,000 people died by suicide in 2022, and provisional data indicate a similar number of people died by suicide in 2023.”

Brent Smith, MD, MSc, MLS, FAAFP, a family physician in Mississippi and member of the American Academy of Family Physicians board of directors, told Healio that PCPs “play a really underappreciated, undervalued role in all mental health care, but specifically suicide prevention.”

“Family physicians often become the de facto treatment for mental health because they’re the ones that are already established with the patient, that are available, and that have established a patient’s trust, and therefore kind of have a unique window,” he said.

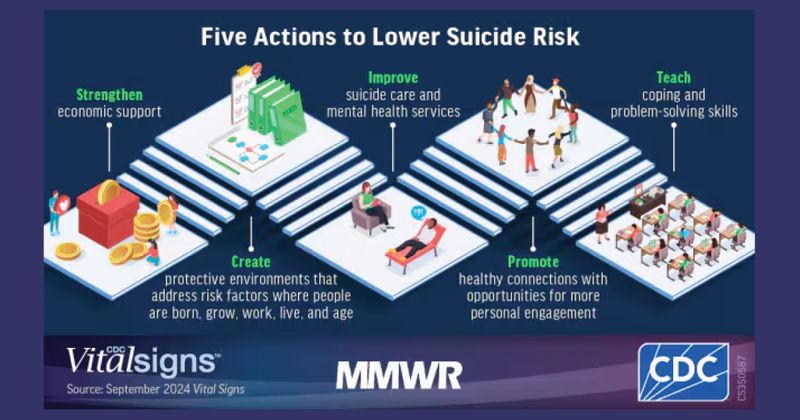

Although suicide prevention often focuses on helping patients in crisis, Houry said it is also vital to reduce factors that lead to increased suicide risk and actively address factors that promote resilience, “to keep people from ever getting to a crisis.”

In that vein, a new CDC Vital Signs report highlighted the importance of exploring community factors that could be improved to help prevent suicides, particularly health insurance coverage, household income levels and broadband internet access.

“We all likely know someone who has struggled with suicidal thoughts,” Houry said. “I lost two medical school classmates to suicide and know how this crisis can truly impact anyone and everyone.”

The new data

Alison Cammack, PhD, MPH, lead author of the new Vital Signs report, and colleagues found that suicide rates were lowest in counties with higher levels of household income, broadband internet access and health insurance coverage.

More specifically, when compared with the counties that had the lowest levels of these factors, suicide rates were:

- 13% lower in counties with the highest household income;

- 26% lower in counties with the highest health insurance coverage; and

- 44% lower in counties where most homes have broadband internet access.

Cammack said there could be many reasons for these differences.

“We know that these three factors are linked with protective factors that have been shown to help reduce the risk of suicide,” said Cammack, who is also a health scientist on the CDC Suicide Prevention Team. “Health insurance coverage can help [patients] access mental health and primary care services and treatment; high-speed internet access connects people to prevention resources, job opportunities, telehealth services and friends and family; and household financial resources such as income and economic support put in place by local, state and federal governments can help families secure food, housing, health care and other basic needs.”

The report also found that some groups continue to face higher suicide rates, Cammack added, including men, people in rural areas, white people and American Indian/Alaska Native people.

“It is important to note that many barriers challenge a person’s ability to access health insurance, broadband internet and higher income,” she said. “For example, tribal and rural communities may lack the infrastructure to obtain internet access. It’s imperative that our nation works toward a comprehensive suicide prevention approach focused on programs, practices and policies designed to prevent suicide crises before they happen.”

For patients who are already stressed by these community-level factors, “it does not take much other stress to really put you in a bad place from a mental health standpoint,” Smith said.

“All of the things that we can’t control with medicine … Those social determinants play as much of a role as anything else,” he said. “And you can throw medicine [at symptoms] all you want, but we still have to treat the other things that the patients deal with.”

Importance for PCPs

A better understanding of the factors that influence suicide risk can improve prevention efforts and ultimately save more lives, Houry said.

“Suicide is preventable, and we know what works to stop it and to spare families and friends from losing loved ones,” she said.

PCPs must prioritize evaluating and treating these and other social factors that can impact patient health, Smith said.

“Move social determinants of health higher up in your priority list when you’re dealing with mental health, suicide and other issues,” he said. “Come to it sooner, address it quicker, and make it as much of a priority as you can in your treatment plans, in order to have a more lasting impact and more success in treating these types of things.”

That can start with creating a positive environment where patients feel safe talking about mental health, he said.

“The biggest thing for you to do is just make the environment comfortable for people to talk about the things that are really bothering them, and then you’ll start to see some actual impact on this,” Smith said. “The problem is just making sure we’re putting it into perspective. We often undervalue how much these social stressors drive their other issues.”

Smith acknowledged that PCPs often do not address social determinants of health until they have already tried therapy, medication and other treatment modalities. If they do prioritize addressing these factors, “they’ll find that they’re more successful getting not only their mental health issues under control, but also their chronic medical problems,” he added.

“Our work to make patients healthy has to go beyond just a clinical room, just the exam room,” Smith said. “It’s got to go back into their communities.”

Anyone in crisis can seek confidential and free help by contacting the 988 Suicide & Crisis Lifeline by texting or calling 988 or reaching out online at 988lifeline.org.