Microscopy image of a section through one brain hemisphere of a 101-day-old ARHGAP11B-transgenic marmoset fetus. Cell nuclei are visualized by DAPI (white). Arrows indicate a sulcus and a gyrus. Credit: Heide et al. / MPI-CBG

The expansion of the human brain during evolution, specifically of the neocortex, is linked to cognitive abilities such as reasoning and language. A gene called ARHGAP11B, found only in humans, triggers brain stem cells to form more stem cells, a prerequisite for a bigger brain. Past studies have shown that ARHGAP11B, when expressed in mice and ferrets at unphysiologically high levels, causes an expanded neocortex, but its relevance for primate evolution has been unclear.

Researchers at the Max Planck Institute of Molecular Cell Biology and Genetics (MPI-CBG) in Dresden, together with colleagues at the Central Institute for Experimental Animals (CIEA) in Kawasaki and Keio University in Tokyo, both located in Japan, now show that this human-specific gene, when expressed at physiological levels, causes an enlarged neocortex in the common marmoset, a New World monkey. This suggests that the ARHGAP11B gene may have caused neocortex expansion during human evolution. The researchers published their findings in the journal Science.

The human neocortex, the evolutionarily youngest part of the cerebral cortex, is about three times bigger than that of the closest human relatives, chimpanzees, and its folding into wrinkles increased during evolution to fit inside the restricted space of the skull. A key question for scientists is how the human neocortex became so big. In a 2015 study, the research group of Wieland Huttner, a founding director of the MPI-CBG, found that under the influence of the human-specific gene ARHGAP11B, mouse embryos produced many more neural progenitor cells, and their normally unfolded neocortex could even undergo folding. The results suggested that the gene ARHGAP11B plays a key role in the evolutionary expansion of the human neocortex.

The rise of the human-specific gene

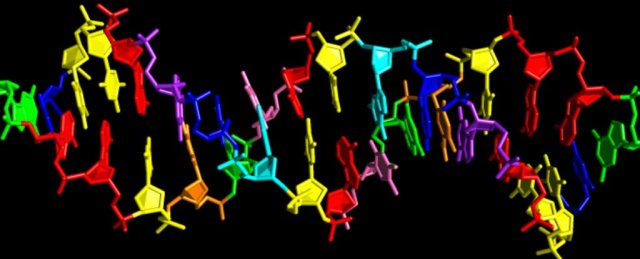

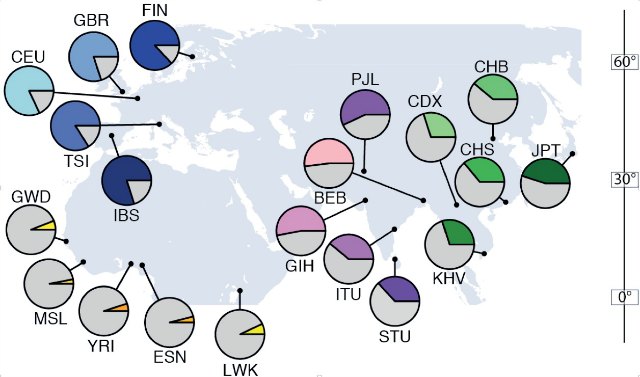

The human-specific gene ARHGAP11B arose through a partial duplication of the ubiquitous gene ARHGAP11A approximately five million years ago along the evolutionary lineage leading to Neanderthals, Denisovans, and present-day humans, after this lineage had split from the one leading to the chimpanzee. In a follow-up study in 2016, the research group of Wieland Huttner uncovered a surprising reason why the ARHGAP11B protein contains a sequence of 47 amino acids that is human-specific, not found in the ARHGAP11A protein, and essential for ARHGAP11B’s ability to increase brain stem cells.

Specifically, a single C-to-G base substitution found in the ARHGAP11B gene leads to the loss of 55 nucleotides from the ARHGAP11B messenger RNA, which causes a shift in the reading frame resulting in the human-specific, functionally critical 47 amino acid sequence. This base substitution probably happened much later than the origin of the gene about five million years ago, at some point between 1.5 million and 500,000 years ago. Such point mutations are not rare, but in the case of ARHGAP11B the advantage of forming a bigger brain seems to have immediately influenced human evolution.
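Why losing 55 nucleotides shifts the reading frame comes down to simple arithmetic: messenger RNA is read in triplets (codons), and 55 is not a multiple of three. The short Python sketch below illustrates this. The function names and the toy sequence are invented purely for illustration; the sequence is not the real ARHGAP11B messenger RNA.

```python
def shifts_reading_frame(deleted_nucleotides):
    """Return True if removing this many nucleotides changes the codon frame."""
    # Codons are three nucleotides long, so only deletions whose length
    # is a multiple of three leave the downstream frame intact.
    return deleted_nucleotides % 3 != 0


def split_into_codons(mrna):
    """Split a sequence into complete triplets, dropping any leftover bases."""
    usable = len(mrna) - len(mrna) % 3
    return [mrna[i:i + 3] for i in range(0, usable, 3)]


def delete_segment(mrna, start, length):
    """Remove `length` nucleotides beginning at index `start`."""
    return mrna[:start] + mrna[start + length:]


# Hypothetical toy sequence (assumption): a start codon followed by 30 GCU repeats.
toy_mrna = "AUG" + "GCU" * 30

codons_before = split_into_codons(toy_mrna)
codons_after = split_into_codons(delete_segment(toy_mrna, 3, 55))

print(shifts_reading_frame(55))   # 55 = 18 * 3 + 1, so the frame shifts
print(codons_before[1:4])         # codons read in the original frame
print(codons_after[1:4])          # codons read after the 55-nucleotide loss
```

In the toy sequence, every codon downstream of the deletion is read differently afterwards. This is the same kind of effect that, in ARHGAP11B, yields the new 47 amino acid sequence.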

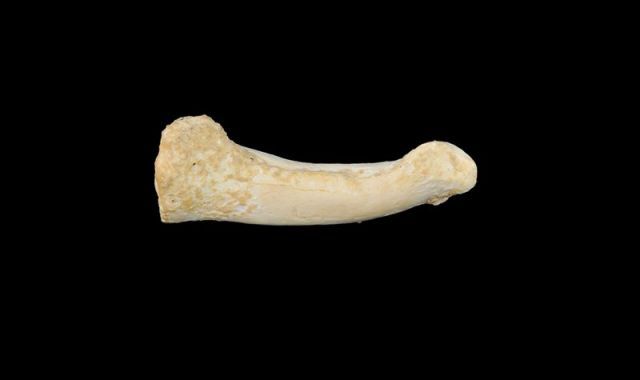

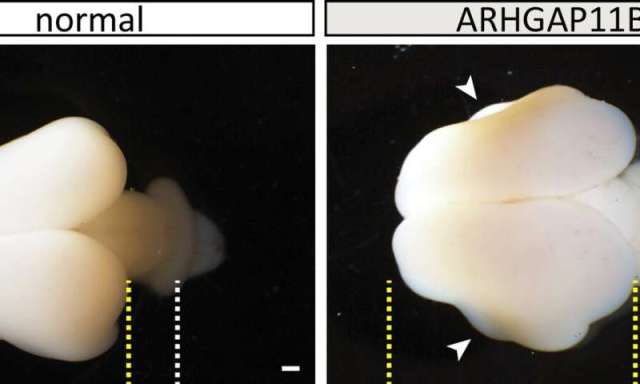

Wildtype (normal) and ARHGAP11B-transgenic fetal (101 days) marmoset brains. Yellow lines, boundaries of cerebral cortex; white lines, developing cerebellum; arrowheads, folds. Scale bars, 1 mm. Credit: Heide et al. / MPI-CBG

The gene’s effect in monkeys

However, it has been unclear until now whether the human-specific gene ARHGAP11B would also cause an enlarged neocortex in non-human primates. To investigate this, the researchers in the group of Wieland Huttner teamed up with Erika Sasaki at the Central Institute for Experimental Animals (CIEA) in Kawasaki and Hideyuki Okano at Keio University in Tokyo, both located in Japan, who had pioneered the development of a technology to generate transgenic non-human primates. The first author of the study, postdoc Michael Heide, traveled to Japan to work with these colleagues directly on site.

They generated transgenic common marmosets, a New World monkey, that expressed the human-specific gene ARHGAP11B, which they normally do not have, in the developing neocortex. Japan's ethical standards and regulations regarding animal research and animal welfare are comparably high to those in Germany. The brains of 101-day-old common marmoset fetuses (50 days before the normal birth date) were obtained in Japan and exported to the MPI-CBG in Dresden for detailed analysis.

Michael Heide explains: “We found indeed that the neocortex of the common marmoset brain was enlarged and the brain surface folded. Its cortical plate was also thicker than normal. Furthermore, we could see increased numbers of basal radial glia progenitors in the outer subventricular zone and increased numbers of upper-layer neurons, the neuron type that increases in primate evolution.” The researchers now had functional evidence that ARHGAP11B causes an expansion of the primate neocortex.

Ethical consideration

Wieland Huttner, who led the study, adds: “We confined our analyses to marmoset fetuses, because we anticipated that the expression of this human-specific gene would affect the neocortex development in the marmoset. In light of potential unforeseeable consequences with regard to postnatal brain function, we considered it a prerequisite—and mandatory from an ethical point of view—to first determine the effects of ARHGAP11B on the development of fetal marmoset neocortex.”

The researchers conclude that these results suggest that the human-specific ARHGAP11B gene may have caused neocortex expansion in the course of human evolution.

More information: “Human-specific ARHGAP11B increases size and folding of primate neocortex in the fetal marmoset” Science (2020). science.sciencemag.org/cgi/doi … 1126/science.abb2401

https://medicalxpress.com/news/2020-06-human-brain-size-gene-triggers.html