Imagine a reality where computers can visualize what you are thinking.

Sound far out? It’s now closer to becoming a reality thanks to four scientists at Kyoto University in Kyoto, Japan. In late December, Guohua Shen, Tomoyasu Horikawa, Kei Majima and Yukiyasu Kamitani posted the results of their recent research on using artificial intelligence to decode thoughts to bioRxiv, a preprint server for biology research.


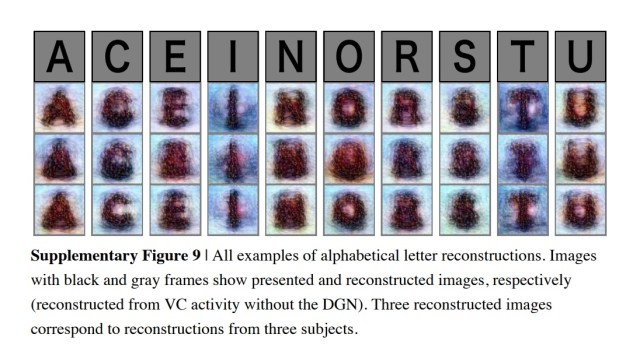

Machine learning has previously been used to study brain scans made with magnetic resonance imaging (MRI) and to generate visualizations of what a person is thinking about when looking at simple, binary images like black and white letters or simple geometric shapes.

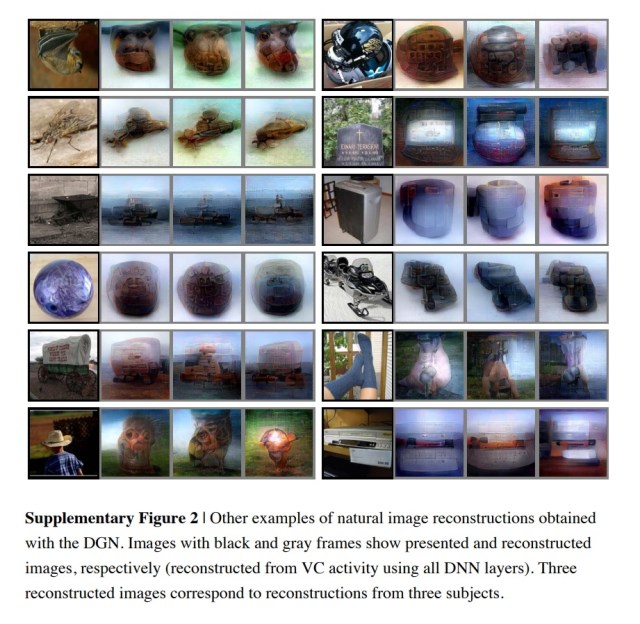

But the scientists from Kyoto developed new techniques of “decoding” thoughts using deep neural networks (artificial intelligence). The new technique allows the scientists to decode more sophisticated “hierarchical” images, which have multiple layers of color and structure, like a picture of a bird or a man wearing a cowboy hat, for example.

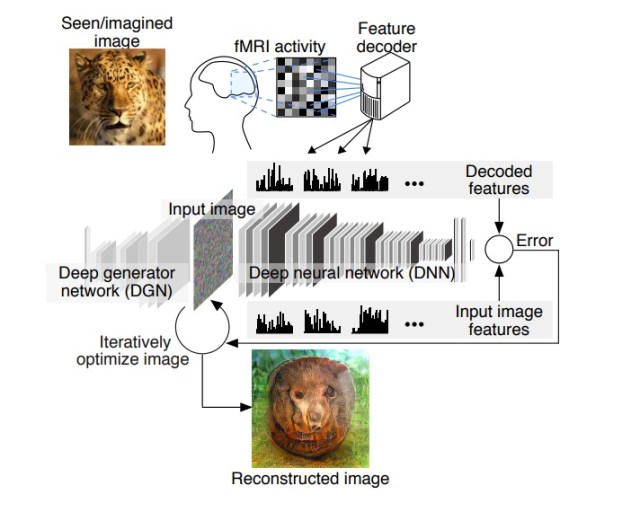

“We have been studying methods to reconstruct or recreate an image a person is seeing just by looking at the person’s brain activity,” Kamitani, one of the scientists, tells CNBC Make It. “Our previous method was to assume that an image consists of pixels or simple shapes. But it’s known that our brain processes visual information hierarchically extracting different levels of features or components of different complexities.”

And the new AI research allows computers to detect objects, not just binary pixels. “These neural networks or AI model can be used as a proxy for the hierarchical structure of the human brain,” Kamitani says.

For the research, over the course of 10 months, three subjects were shown natural images (like photographs of a bird or a person), artificial geometric shapes and alphabetical letters for varying lengths of time.

In some instances, brain activity was measured while a subject was looking at one of 25 images. In other cases, it was logged afterward, when subjects were asked to think of the image they were previously shown.

Once the brain activity was scanned, a computer reverse-engineered (or “decoded”) the information to generate visualizations of a subject’s thoughts.
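For readers who want a feel for what “decoding” means in practice, here is a minimal sketch in Python. It is not the Kyoto team’s code and it makes loud simplifying assumptions: the brain data is simulated, a fixed random linear map (the hypothetical `feature_map`) stands in for a deep neural network, and the final image is recovered with ordinary least squares rather than the researchers’ own reconstruction procedure. All names and sizes are invented for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy sizes, chosen only for illustration.
n_pixels, n_features, n_voxels = 64, 128, 200
n_train, n_test = 300, 5

# ASSUMPTION: a fixed random linear map stands in for a deep network layer.
feature_map = rng.normal(size=(n_pixels, n_features)) / np.sqrt(n_pixels)

# ASSUMPTION: simulated "brain activity" is a noisy linear mix of features.
mixing = rng.normal(size=(n_features, n_voxels))

def simulate_brain(images, noise=0.1):
    """Return fake voxel responses for a batch of images."""
    features = images @ feature_map
    return features @ mixing + noise * rng.normal(size=(len(images), n_voxels))

train_images = rng.uniform(size=(n_train, n_pixels))
test_images = rng.uniform(size=(n_test, n_pixels))
train_voxels = simulate_brain(train_images)
test_voxels = simulate_brain(test_images)

# Step 1: learn a ridge-regression decoder from voxels to image features.
ridge_penalty = 1.0
gram = train_voxels.T @ train_voxels + ridge_penalty * np.eye(n_voxels)
decoder = np.linalg.solve(gram, train_voxels.T @ (train_images @ feature_map))

# Step 2: decode features from brain activity the decoder has never seen.
decoded_features = test_voxels @ decoder

# Step 3: find the image whose features best match the decoded features.
solution = np.linalg.lstsq(feature_map.T, decoded_features.T, rcond=None)[0]
reconstructed = solution.T

# Compare each reconstruction with the image that was actually "seen".
scores = [float(np.corrcoef(r, t)[0, 1])
          for r, t in zip(reconstructed, test_images)]
print("pixel correlation per test image:", np.round(scores, 3))
```

The three steps mirror the idea in broad strokes: learn how brain activity relates to image features, decode features from new activity, then search for an image that fits those features. Real brain data is far noisier than this simulation, so results on actual scans would be much rougher.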

The flowchart embedded below was made by the research team at the Kamitani Lab at Kyoto University and breaks down the science of how a visualization is “decoded.”

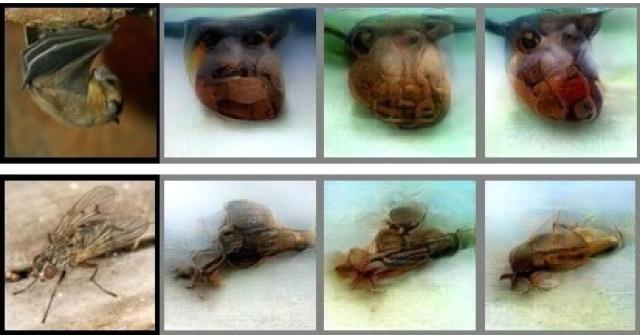

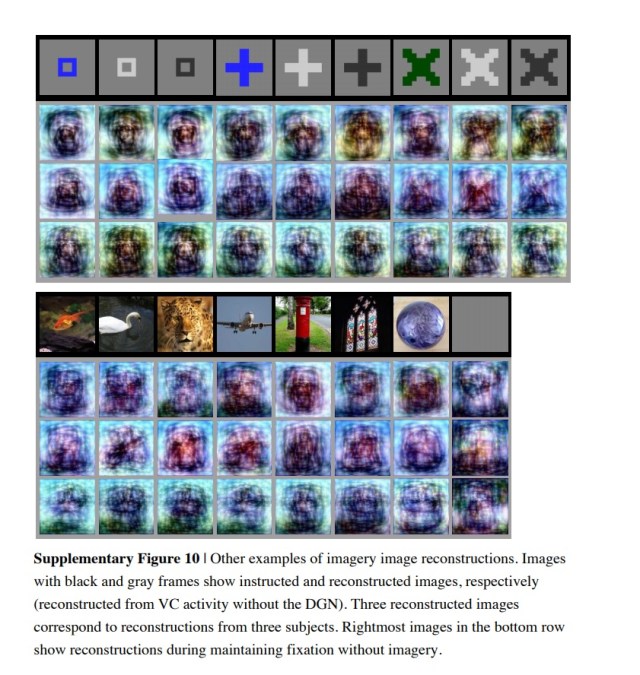

The two charts embedded below show the results the computer reconstructed for subjects whose activity was logged while they were looking at natural images and images of letters.

As for the subjects whose brain activity was measured while they were remembering the images, the scientists had another breakthrough.

“Unlike previous methods, we were able to reconstruct visual imagery a person produced by just thinking of some remembered images,” Kamitani says.

As seen in the chart embedded below, when decoding brain signals resulting from a subject remembering images, the AI system had a harder time reconstructing them. That’s because it’s more difficult for a human to remember an image of a cheetah or a fish exactly as it was seen.

“The brain is less activated” in that scenario, Kamitani explains to CNBC Make It.

As the accuracy of the technology continues to improve, the potential applications are mind-boggling. The visualization technology could allow you to draw pictures or make art simply by imagining something; your dreams could be visualized by a computer; the hallucinations of psychiatric patients could be visualized, aiding in their care; and brain-machine interfaces may one day allow communication with imagery or thoughts, Kamitani tells CNBC Make It.

While the idea of computers reading your brain may sound positively Jetson-esque, the Japanese researchers aren’t alone in their futuristic work to connect the brain with computing power.

For example, former GoogleX-er Mary Lou Jepsen is working to build a hat that will make telepathy possible within the decade, and entrepreneur Bryan Johnson is working to build computer chips to implant in the brain to improve neurological functions.