by George Dvorsky

Using brain-recording technology, artificial intelligence, and speech synthesizers, scientists have converted brain patterns into intelligible verbal speech, an advance that could eventually give a voice to people who have lost theirs.

It’s a shame Stephen Hawking isn’t alive to see this, as he may have gotten a real kick out of it. The new speech system, developed by researchers at the Neural Acoustic Processing Lab at Columbia University in New York City, is something the late physicist might have benefited from.

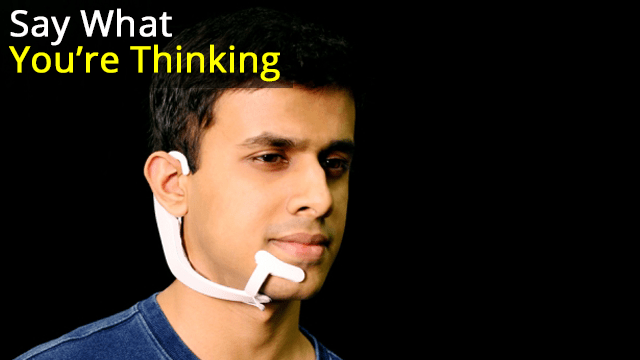

Hawking had amyotrophic lateral sclerosis (ALS), a motor neuron disease that took away his verbal speech, but he continued to communicate using a computer and a speech synthesizer. By using a cheek switch affixed to his glasses, Hawking was able to pre-select words on a computer, which were read out by a voice synthesizer. It was a bit tedious, but it allowed Hawking to produce around a dozen words per minute.

But imagine if Hawking didn’t have to manually select and trigger the words. Indeed, some individuals, whether they have ALS or locked-in syndrome or are recovering from a stroke, may not have the motor skills required to control a computer, even by just a tweak of the cheek. Ideally, an artificial voice system would capture an individual’s thoughts directly to produce speech, eliminating the need to control a computer.

New research published today in Scientific Reports takes us an important step closer to that goal, but instead of capturing an individual’s internal thoughts to reconstruct speech, it uses the brain patterns produced while listening to speech.

To devise such a speech neuroprosthesis, neuroscientist Nima Mesgarani and his colleagues combined recent advances in deep learning with speech synthesis technologies. Their resulting brain-computer interface, though still rudimentary, captured brain patterns directly from the auditory cortex, which were then decoded and fed to an AI-powered vocoder, or speech synthesizer, to produce intelligible speech. The speech sounded very robotic, but listeners could make out its content about three-quarters of the time. It’s an exciting advance, and one that could eventually help people who have lost the capacity for speech.

To be clear, Mesgarani’s neuroprosthetic device isn’t translating an individual’s covert speech (that is, the thoughts in our heads, also called imagined speech) directly into words. Unfortunately, the science isn’t quite there yet. Instead, the system captured an individual’s distinctive neural responses as they listened to recordings of people speaking. A deep neural network was then able to decode, or translate, these patterns, allowing the system to reconstruct speech.

“This study continues a recent trend in applying deep learning techniques to decode neural signals,” Andrew Jackson, a professor of neural interfaces at Newcastle University who wasn’t involved in the new study, told Gizmodo. “In this case, the neural signals are recorded from the brain surface of humans during epilepsy surgery. The participants listen to different words and sentences which are read by actors. Neural networks are trained to learn the relationship between brain signals and sounds, and as a result can then reconstruct intelligible reproductions of the words/sentences based only on the brain signals.”

Epilepsy patients were chosen for the study because they often have to undergo brain surgery. Mesgarani, with the help of Ashesh Dinesh Mehta, a neurosurgeon at Northwell Health Physician Partners Neuroscience Institute and a co-author of the new study, recruited five volunteers for the experiment. The team used invasive electrocorticography (ECoG) to measure neural activity as the patients listened to continuous speech sounds. The patients listened, for example, to speakers reciting digits from zero to nine. Their brain patterns were then fed into the AI-enabled vocoder, resulting in the synthesized speech.
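The core idea, learning a mapping from recorded brain activity back to the sound that was heard, can be illustrated with a deliberately simplified sketch. It swaps the study’s deep neural network for plain ridge regression, a classic linear baseline for stimulus reconstruction, and it runs on simulated data: the "spectrogram" frames, the 64 "electrodes", and the random mixing matrix standing in for a listener’s auditory cortex are all hypothetical. It also stops at the decoded sound features and leaves out the vocoder that would turn them into audio.

```python
import numpy as np

def simulate_listening(mixing, n_frames, rng, noise=0.1):
    """Toy stand-in for one recording session (hypothetical, not real ECoG).

    Each row of `spectrogram` is one time frame of sound energy across frequency
    bins; `mixing` (bins x electrodes) plays the role of one listener's auditory
    cortex, turning sound into electrode activity plus a little Gaussian noise.
    """
    spectrogram = rng.random((n_frames, mixing.shape[0]))
    neural = spectrogram @ mixing + noise * rng.standard_normal((n_frames, mixing.shape[1]))
    return neural, spectrogram

def with_bias(neural):
    """Append a constant column so the decoder can learn an offset."""
    return np.column_stack([neural, np.ones(len(neural))])

def fit_ridge_decoder(neural, spectrogram, alpha=1.0):
    """Closed-form ridge regression: W = (X^T X + alpha*I)^-1 X^T Y."""
    X = with_bias(neural)
    penalty = alpha * np.eye(X.shape[1])
    penalty[-1, -1] = 0.0  # leave the bias term unpenalized
    return np.linalg.solve(X.T @ X + penalty, X.T @ spectrogram)

def decode(weights, neural):
    """Map new brain activity to estimated spectrogram frames."""
    return with_bias(neural) @ weights

def mean_correlation(estimate, target):
    """Average Pearson correlation between estimate and target, bin by bin."""
    return float(np.mean([np.corrcoef(estimate[:, k], target[:, k])[0, 1]
                          for k in range(target.shape[1])]))

rng = np.random.default_rng(0)
mixing = rng.standard_normal((16, 64))  # 16 frequency bins -> 64 electrodes
train_neural, train_spec = simulate_listening(mixing, 2000, rng)
test_neural, test_spec = simulate_listening(mixing, 500, rng)

weights = fit_ridge_decoder(train_neural, train_spec, alpha=1.0)
reconstruction = decode(weights, test_neural)
print(f"mean correlation on held-out frames: {mean_correlation(reconstruction, test_spec):.2f}")
```

Because this simulated "brain" is linear and only lightly noisy, the reconstruction comes out far cleaner than real ECoG would allow. The point is only the shape of the problem: paired recordings of brain activity and sound go in, and a learned decoder comes out.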

The results were very robotic-sounding, but fairly intelligible. In tests, listeners could correctly identify spoken digits around 75 percent of the time. They could even tell if the speaker was male or female. Not bad, and a result that even came as “a surprise” to Mesgarani, as he told Gizmodo in an email.

Recordings of the synthesized speech can be found here (the researchers tested various techniques, but the best result came from combining deep neural networks with the vocoder).

The use of a voice synthesizer in this context, as opposed to a system that can match and recite pre-recorded words, was important to Mesgarani. As he explained to Gizmodo, there’s more to speech than just putting the right words together.

“Since the goal of this work is to restore speech communication in those who have lost the ability to talk, we aimed to learn the direct mapping from the brain signal to the speech sound itself,” he told Gizmodo. “It is possible to also decode phonemes [distinct units of sound] or words, however, speech has a lot more information than just the content—such as the speaker [with their distinct voice and style], intonation, emotional tone, and so on. Therefore, our goal in this particular paper has been to recover the sound itself.”

Looking ahead, Mesgarani would like to synthesize more complicated words and sentences, and collect brain signals of people who are simply thinking or imagining the act of speaking.

Jackson was impressed with the new study, but he said it’s still not clear if this approach will apply directly to brain-computer interfaces.

“In the paper, the decoded signals reflect actual words heard by the brain. To be useful, a communication device would have to decode words that are imagined by the user,” Jackson told Gizmodo. “Although there is often some overlap between brain areas involved in hearing, speaking, and imagining speech, we don’t yet know exactly how similar the associated brain signals will be.”

William Tatum, a neurologist at the Mayo Clinic who was also not involved in the new study, said the research is important in that it’s the first to use artificial intelligence to reconstruct speech from the brain waves involved in generating known acoustic stimuli. The work is significant “because it advances application of deep learning in the next generation of better designed speech-producing systems,” he told Gizmodo. That said, he felt the sample size of participants was too small, and that the use of data extracted directly from the human brain during surgery is not ideal.

Another limitation of the study is that, to do more than just reproduce the digits zero through nine, the neural networks would have to be trained on a large number of brain signals from each participant. The system is patient-specific, as we all produce different brain patterns when we listen to speech.

“It will be interesting in future to see how well decoders trained for one person generalize to other individuals,” said Jackson. “It’s a bit like early speech recognition systems that needed to be individually trained by the user, as opposed to today’s technology, such as Siri and Alexa, that can make sense of anyone’s voice, again using neural networks. Only time will tell whether these technologies could one day do the same for brain signals.”
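Jackson’s point about patient-specific decoders can be illustrated with a toy simulation. In this hypothetical sketch, each simulated "subject" gets its own random mapping from sound features to electrode activity. A least-squares decoder, standing in for a trained neural network, is fit on one subject and then scored on held-out data from that subject and from a second one. The subjects, the mixing matrices, and scoring by mean correlation are all assumptions made for illustration, not details of the study.

```python
import numpy as np

def make_subject(rng, n_freq_bins=16, n_electrodes=64):
    """Hypothetical per-person mapping from sound features to electrode activity."""
    return rng.standard_normal((n_freq_bins, n_electrodes))

def record(mixing, n_frames, rng, noise=0.1):
    """Toy 'recording': random spectrogram frames passed through one subject's mixing."""
    spec = rng.random((n_frames, mixing.shape[0]))
    neural = spec @ mixing + noise * rng.standard_normal((n_frames, mixing.shape[1]))
    return neural, spec

def fit_decoder(neural, spec):
    """Ordinary least squares with a bias column (stand-in for a trained network)."""
    X = np.column_stack([neural, np.ones(len(neural))])
    weights, *_ = np.linalg.lstsq(X, spec, rcond=None)
    return weights

def score(weights, neural, spec):
    """Mean Pearson correlation between decoded and true spectrogram bins."""
    X = np.column_stack([neural, np.ones(len(neural))])
    recon = X @ weights
    return float(np.mean([np.corrcoef(recon[:, k], spec[:, k])[0, 1]
                          for k in range(spec.shape[1])]))

rng = np.random.default_rng(1)
subject_a, subject_b = make_subject(rng), make_subject(rng)
decoder_a = fit_decoder(*record(subject_a, 2000, rng))

same_person = score(decoder_a, *record(subject_a, 500, rng))
other_person = score(decoder_a, *record(subject_b, 500, rng))
print(f"trained and tested on A: {same_person:.2f}")
print(f"trained on A, tested on B: {other_person:.2f}")
```

Here the decoder works well for the person it was trained on, while its output for the second person is close to uncorrelated with the true sound, because the two random mappings share nothing. Real brains may share more structure than that, which is exactly the open question Jackson raises.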

No doubt, there’s still lots of work to do. But the new paper is an encouraging step toward the achievement of implantable speech neuroprosthetics.

https://gizmodo.com/neuroscientists-translate-brain-waves-into-recognizable-1832155006