by John H. Richardson

In an ordinary hospital room in Los Angeles, a young woman named Lauren Dickerson waits for her chance to make history.

She’s 25 years old, a teacher’s assistant in a middle school, with warm eyes and computer cables emerging like futuristic dreadlocks from the bandages wrapped around her head. Three days earlier, a neurosurgeon drilled 11 holes through her skull, slid 11 wires the size of spaghetti into her brain, and connected the wires to a bank of computers. Now she’s caged in by bed rails, with plastic tubes snaking up her arm and medical monitors tracking her vital signs. She tries not to move.

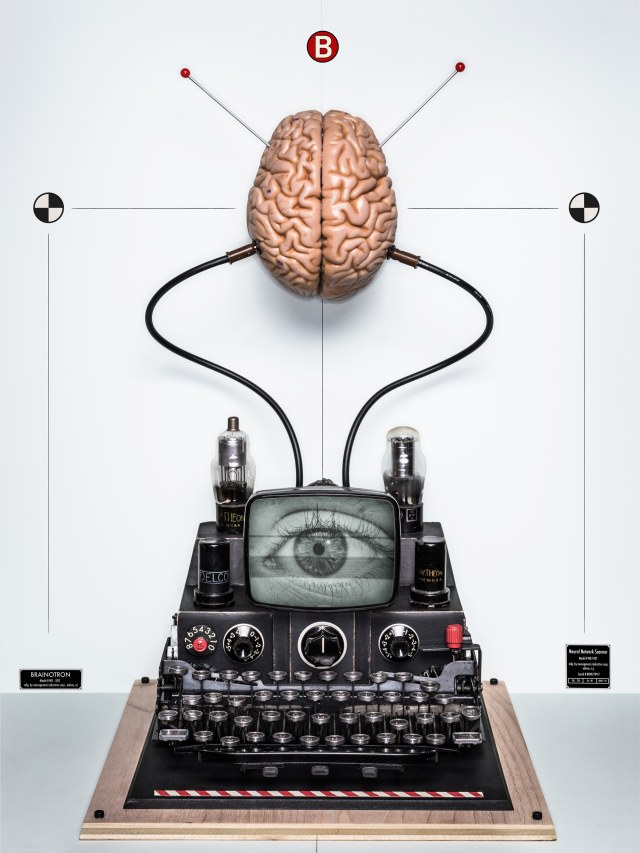

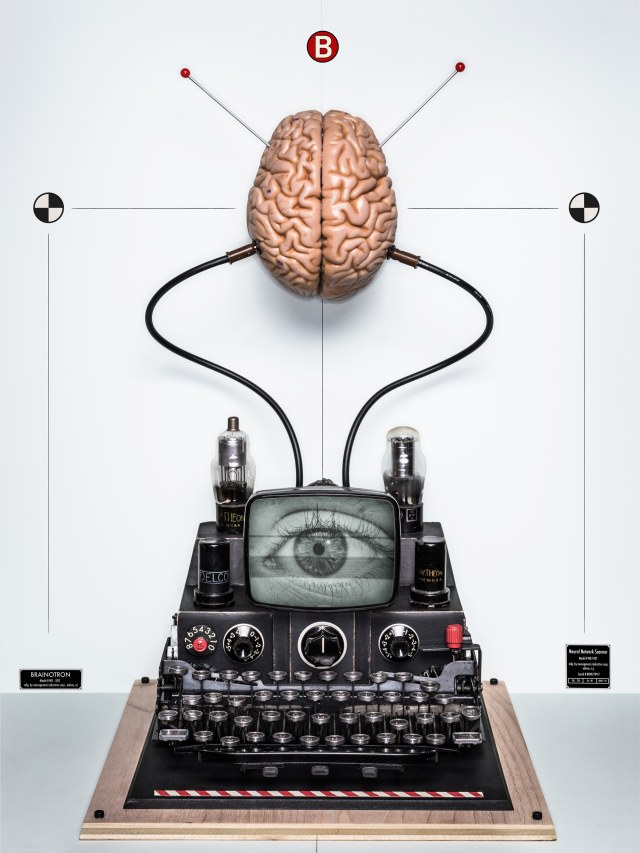

The room is packed. As a film crew prepares to document the day’s events, two separate teams of specialists get ready to work—medical experts from an elite neuroscience center at the University of Southern California and scientists from a technology company called Kernel. The medical team is looking for a way to treat Dickerson’s seizures, which an elaborate regimen of epilepsy drugs controlled well enough until last year, when their effects began to dull. They’re going to use the wires to search Dickerson’s brain for the source of her seizures. The scientists from Kernel are there for a different reason: They work for Bryan Johnson, a 40-year-old tech entrepreneur who sold his business for $800 million and decided to pursue an insanely ambitious dream—he wants to take control of evolution and create a better human. He intends to do this by building a “neuroprosthesis,” a device that will allow us to learn faster, remember more, “coevolve” with artificial intelligence, unlock the secrets of telepathy, and maybe even connect into group minds. He’d also like to find a way to download skills such as martial arts, Matrix-style. And he wants to sell this invention at mass-market prices so it’s not an elite product for the rich.

Right now all he has is an algorithm on a hard drive. When he describes the neuroprosthesis to reporters and conference audiences, he often uses the media-friendly expression “a chip in the brain,” but he knows he’ll never sell a mass-market product that depends on drilling holes in people’s skulls. Instead, the algorithm will eventually connect to the brain through some variation of noninvasive interfaces being developed by scientists around the world, from tiny sensors that could be injected into the brain to genetically engineered neurons that can exchange data wirelessly with a hatlike receiver. All of these proposed interfaces are either pipe dreams or years in the future, so in the meantime he’s using the wires attached to Dickerson’s hippocampus to focus on an even bigger challenge: what you say to the brain once you’re connected to it.

That’s what the algorithm does. The wires embedded in Dickerson’s head will record the electrical signals that Dickerson’s neurons send to one another during a series of simple memory tests. The signals will then be uploaded onto a hard drive, where the algorithm will translate them into a digital code that can be analyzed and enhanced—or rewritten—with the goal of improving her memory. The algorithm will then translate the code back into electrical signals to be sent up into the brain. If it helps her spark a few images from the memories she was having when the data was gathered, the researchers will know the algorithm is working. Then they’ll try to do the same thing with memories that take place over a period of time, something nobody’s ever done before. If those two tests work, they’ll be on their way to deciphering the patterns and processes that create memories.

Although other scientists are using similar techniques on simpler problems, Johnson is the only person trying to make a commercial neurological product that would enhance memory. In a few minutes, he’s going to conduct his first human test. For a commercial memory prosthesis, it will be the first human test. “It’s a historic day,” Johnson says. “I’m insanely excited about it.”

For the record, just in case this improbable experiment actually works, the date is January 30, 2017.

At this point, you may be wondering if Johnson’s just another fool with too much money and an impossible dream. I wondered the same thing the first time I met him. He seemed like any other California dude, dressed in the usual jeans, sneakers, and T-shirt, full of the usual boyish enthusiasms. His wild pronouncements about “reprogramming the operating system of the world” seemed downright goofy.

But you soon realize this casual style is either camouflage or wishful thinking. Like many successful people, some brilliant and some barely in touch with reality, Johnson has endless energy and the distributed intelligence of an octopus—one tentacle reaches for the phone, another for his laptop, a third scouts for the best escape route. When he starts talking about his neuroprosthesis, they team up and squeeze till you turn blue.

And there is that $800 million that PayPal shelled out for Braintree, the online-payment company Johnson started when he was 29 and sold when he was 36. And the $100 million he is investing into Kernel, the company he started to pursue this project. And the decades of animal tests to back up his sci-fi ambitions: Researchers have learned how to restore memories lost to brain damage, plant false memories, control the motions of animals through human thought, control appetite and aggression, induce sensations of pleasure and pain, even how to beam brain signals from one animal to another animal thousands of miles away.

And Johnson isn’t dreaming this dream alone—at this moment, Elon Musk and Mark Zuckerberg are weeks from announcing their own brain-hacking projects, the military research group known as Darpa already has 10 under way, and there’s no doubt that China and other countries are pursuing their own. But unlike Johnson, they’re not inviting reporters into any hospital rooms.

Here’s the gist of every public statement Musk has made about his project: (1) He wants to connect our brains to computers with a mysterious device called “neural lace.” (2) The name of the company he started to build it is Neuralink.

Thanks to a presentation at last spring’s F8 conference, we know a little more about what Zuckerberg is doing at Facebook: (1) The project was until recently overseen by Regina Dugan, a former director of Darpa and Google’s Advanced Technology group. (2) The team is working out of Building 8, Zuckerberg’s research lab for moon-shot projects. (3) They’re working on a noninvasive “brain–computer speech-to-text interface” that uses “optical imaging” to read the signals of neurons as they form words, find a way to translate those signals into code, and then send the code to a computer. (4) If it works, we’ll be able to “type” 100 words a minute just by thinking.

As for Darpa, we know that some of its projects are improvements on existing technology and some—such as an interface to make soldiers learn faster—sound just as futuristic as Johnson’s. But we don’t know much more than that. That leaves Johnson as our only guide, a job he says he’s taken on because he thinks the world needs to be prepared for what is coming.

All of these ambitious plans face the same obstacle, however: The brain has 86 billion neurons, and nobody understands how they all work. Scientists have made impressive progress uncovering, and even manipulating, the neural circuitry behind simple brain functions, but things such as imagination or creativity—and memory—are so complex that all the neuroscientists in the world may never solve them. That’s why a request for expert opinions on the viability of Johnson’s plans got this response from John Donoghue, the director of the Wyss Center for Bio and Neuroengineering in Geneva: “I’m cautious,” he said. “It’s as if I asked you to translate something from Swahili to Finnish. You’d be trying to go from one unknown language into another unknown language.” To make the challenge even more daunting, he added, all the tools used in brain research are as primitive as “a string between two paper cups.” So Johnson has no idea if 100 neurons or 100,000 or 10 billion control complex brain functions. On how most neurons work and what kind of codes they use to communicate, he’s closer to “Da-da” than “see Spot run.” And years or decades will pass before those mysteries are solved, if ever. To top it all off, he has no scientific background. Which puts his foot on the banana peel of a very old neuroscience joke: “If the brain was simple enough for us to understand, we’d be too stupid to understand it.”

I don’t need telepathy to know what you’re thinking now—there’s nothing more annoying than the big dreams of tech optimists. Their schemes for eternal life and floating libertarian nations are adolescent fantasies; their digital revolution seems to be destroying more jobs than it created, and the fruits of their scientific fathers aren’t exactly encouraging either. “Coming soon, from the people who brought you nuclear weapons!”

But Johnson’s motives go to a deep and surprisingly tender place. Born into a devout Mormon community in Utah, he learned an elaborate set of rules that are still so vivid in his mind that he brought them up in the first minutes of our first meeting: “If you get baptized at the age of 8, point. If you get into the priesthood at the age of 12, point. If you avoid pornography, point. Avoid masturbation? Point. Go to church every Sunday? Point.” The reward for a high point score was heaven, where a dutiful Mormon would be reunited with his loved ones and gifted with endless creativity.

When he was 4, Johnson’s father left the church and divorced his mother. Johnson skips over the painful details, but his father told me his loss of faith led to a long stretch of drug and alcohol abuse, and his mother said she was so broke that she had to send Johnson to school in handmade clothes. His father remembers the letters Johnson started sending him when he was 11, a new one every week: “Always saying 100 different ways, ‘I love you, I need you.’ How he knew as a kid the one thing you don’t do with an addict or an alcoholic is tell them what a dirtbag they are, I’ll never know.”

Johnson was still a dutiful believer when he graduated from high school and went to Ecuador on his mission, the traditional Mormon rite of passage. He prayed constantly and gave hundreds of speeches about Joseph Smith, but he became more and more ashamed about trying to convert sick and hungry children with promises of a better life in heaven. Wouldn’t it be better to ease their suffering here on earth?

“Bryan came back a changed boy,” his father says.

Soon he had a new mission, self-assigned. His sister remembers his exact words: “He said he wanted to be a millionaire by the time he was 30 so he could use those resources to change the world.”

His first move was picking up a degree at Brigham Young University, selling cell phones to help pay the tuition and inhaling every book that seemed to promise a way forward. One that left a lasting impression was Endurance, the story of Ernest Shackleton’s botched journey to the South Pole—if sheer grit could get a man past so many hardships, he would put his faith in sheer grit. He married “a nice Mormon girl,” fathered three Mormon children, and took a job as a door-to-door salesman to support them. He won a prize for Salesman of the Year and started a series of businesses that went broke—which convinced him to get a business degree at the University of Chicago.

When he graduated in 2008, he stayed in Chicago and started Braintree, perfecting his image as a world-beating Mormon entrepreneur. By that time, his father was sober and openly sharing his struggles, and Johnson was the one hiding his dying faith behind a very well-protected wall. He couldn’t sleep, ate like a wolf, and suffered intense headaches, fighting back with a long series of futile cures: antidepressants, biofeedback, an energy healer, even blind obedience to the rules of his church.

In 2012, at the age of 35, Johnson hit bottom. In his misery, he remembered Shackleton and seized a final hope—maybe he could find an answer by putting himself through a painful ordeal. He planned a trip to Mount Kilimanjaro, and on the second day of the climb he got a stomach virus. On the third day he got altitude sickness. When he finally made it to the peak, he collapsed in tears and then had to be carried down on a stretcher. It was time to reprogram his operating system.

The way Johnson tells it, he started by dropping the world-beater pose that hid his weakness and doubt. And although this may all sound a bit like a dramatic motivational talk at a TED conference, especially since Johnson still projects the image of a world-beating entrepreneur, this much is certain: During the following 18 months, he divorced his wife, sold Braintree, and severed his last ties to the church. To cushion the impact on his children, he bought a house nearby and visited them almost daily. He knew he was repeating his father’s mistakes but saw no other option—he was either going to die inside or start living the life he always wanted.

He started with the pledge he made when he came back from Ecuador, experimenting first with a good-government initiative in Washington and pivoting, after its inevitable doom, to a venture fund for “quantum leap” companies inventing futuristic products such as human-organ-mimicking silicon chips. But even if all his quantum leaps landed, they wouldn’t change the operating system of the world.

Finally, the Big Idea hit: If the root problems of humanity begin in the human mind, let’s change our minds.

Fantastic things were happening in neuroscience. Some of them sounded just like miracles from the Bible—with prosthetic legs controlled by thought and microchips connected to the visual cortex, scientists were learning to help the lame walk and the blind see. At the University of Toronto, a neurosurgeon named Andres Lozano slowed, and in some cases reversed, the cognitive declines of Alzheimer’s patients using deep brain stimulation. At a hospital in upstate New York, a neurotechnologist named Gerwin Schalk asked computer engineers to record the firing patterns of the auditory neurons of people listening to Pink Floyd. When the engineers turned those patterns back into sound waves, they produced a single that sounded almost exactly like “Another Brick in the Wall.” At the University of Washington, two professors in different buildings played a videogame together with the help of electroencephalography caps that fired off electrical pulses—when one professor thought about firing digital bullets, the other one felt an impulse to push the Fire button.

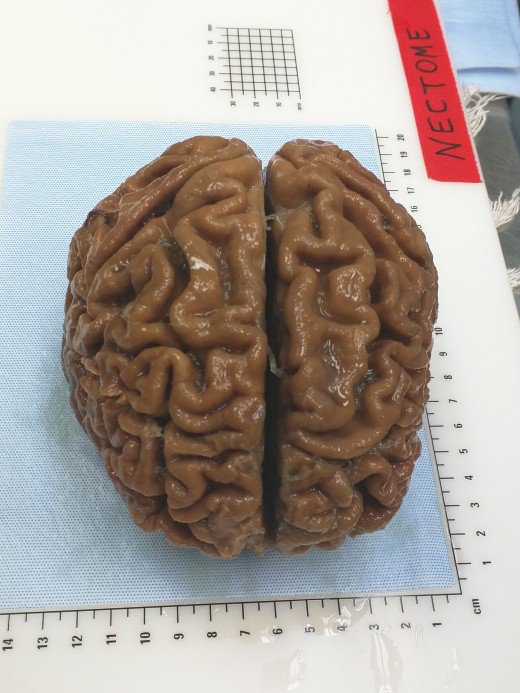

Johnson also heard about a biomedical engineer named Theodore Berger. During nearly 20 years of research, Berger and his collaborators at USC and Wake Forest University developed a neuroprosthesis to improve memory in rats. It didn’t look like much when he started testing it in 2002—just a slice of rat brain and a computer chip. But the chip held an algorithm that could translate the firing patterns of neurons into a kind of Morse code that corresponded with actual memories. Nobody had ever done that before, and some people found the very idea offensive—it’s so deflating to think of our most precious thoughts reduced to ones and zeros. Prominent medical ethicists accused Berger of tampering with the essence of identity. But the implications were huge: If Berger could turn the language of the brain into code, perhaps he could figure out how to fix the part of the code associated with neurological diseases.

In rats, as in humans, firing patterns in the hippocampus generate a signal or code that, somehow, the brain recognizes as a long-term memory. Berger trained a group of rats to perform a task and studied the codes that formed. He learned that rats remembered a task better when their neurons sent “strong code,” a term he explains by comparing it to a radio signal: At low volume you don’t hear all of the words, but at high volume everything comes through clear. He then studied the difference in the codes generated by the rats when they remembered to do something correctly and when they forgot. In 2011, through a breakthrough experiment conducted on rats trained to push a lever, he demonstrated he could record the initial memory codes, feed them into an algorithm, and then send stronger codes back into the rats’ brains. When he finished, the rats that had forgotten how to push the lever suddenly remembered.

Five years later, Berger was still looking for the support he needed for human trials. That’s when Johnson showed up. In August 2016, he announced he would pledge $100 million of his fortune to create Kernel and that Berger would join the company as chief science officer. After learning about USC’s plans to implant wires in Dickerson’s brain to battle her epilepsy, Johnson approached Charles Liu, the head of the prestigious neurorestoration division at the USC School of Medicine and the lead doctor on Dickerson’s trial. Johnson asked him for permission to test the algorithm on Dickerson while she had Liu’s wires in her hippocampus—in between Liu’s own work sessions, of course. As it happened, Liu had dreamed about expanding human powers with technology ever since he got obsessed with The Six Million Dollar Man as a kid. He helped Johnson get Dickerson’s consent and convinced USC’s institutional research board to approve the experiment. At the end of 2016, Johnson got the green light. He was ready to start his first human trial.

In the hospital room, Dickerson is waiting for the experiments to begin, and I ask her how she feels about being a human lab rat.

“If I’m going to be here,” she says, “I might as well do something useful.”

Useful? This starry-eyed dream of cyborg supermen? “You know he’s trying to make humans smarter, right?”

“Isn’t that cool?” she answers.

Over by the computers, I ask one of the scientists about the multicolored grid on the screen. “Each one of these squares is an electrode that’s in her brain,” he says. Every time a neuron close to one of the wires in Dickerson’s brain fires, he explains, a pink line will jump in the relevant box.

Johnson’s team is going to start with simple memory tests. “You’re going to be shown words,” the scientist explains to her. “Then there will be some math problems to make sure you’re not rehearsing the words in your mind. Try to remember as many words as you can.”

One of the scientists hands Dickerson a computer tablet, and everyone goes quiet. Dickerson stares at the screen to take in the words. A few minutes later, after the math problem scrambles her mind, she tries to remember what she’d read. “Smoke … egg … mud … pearl.”

Next, they try something much harder, a group of memories in a sequence. As one of Kernel’s scientists explains to me, they can only gather so much data from wires connected to 30 or 40 neurons. A single face shouldn’t be too hard, but getting enough data to reproduce memories that stretch out like a scene in a movie is probably impossible.

Sitting by the side of Dickerson’s bed, a Kernel scientist takes on the challenge. “Could you tell me the last time you went to a restaurant?”

“It was probably five or six days ago,” Dickerson says. “I went to a Mexican restaurant in Mission Hills. We had a bunch of chips and salsa.”

He presses for more. As she dredges up other memories, another Kernel scientist hands me a pair of headphones connected to the computer bank. All I hear at first is a hissing sound. After 20 or 30 seconds go by I hear a pop.

“That’s a neuron firing,” he says.

As Dickerson continues, I listen to the mysterious language of the brain, the little pops that move our legs and trigger our dreams. She remembers a trip to Costco and the last time it rained, and I hear the sounds of Costco and rain.

When Dickerson’s eyelids start sinking, the medical team says she’s had enough and Johnson’s people start packing up. Over the next few days, their algorithm will turn Dickerson’s synaptic activity into code. If the codes they send back into Dickerson’s brain make her think of dipping a few chips in salsa, Johnson might be one step closer to reprogramming the operating system of the world.

But look, there’s another banana peel—after two days of frantic coding, Johnson’s team returns to the hospital to send the new code into Dickerson’s brain. Just when he gets word that they can get an early start, a message arrives: It’s over. The experiment has been placed on “administrative hold.” The only reason USC would give in the aftermath was an issue between Johnson and Berger. Berger would later tell me he had no idea the experiment was under way and that Johnson rushed into it without his permission. Johnson said he is mystified by Berger’s accusations. “I don’t know how he could not have known about it. We were working with his whole lab, with his whole team.” The one thing they both agree on is that their relationship fell apart shortly afterward, with Berger leaving the company and taking his algorithm with him. He blames the break entirely on Johnson. “Like most investors, he wanted a high rate of return as soon as possible. He didn’t realize he’d have to wait seven or eight years to get FDA approval—I would have thought he would have looked that up.” But Johnson didn’t want to slow down. He had bigger plans, and he was in a hurry.

Eight months later, I go back to California to see where Johnson has ended up. He seems a little more relaxed. On the whiteboard behind his desk at Kernel’s new offices in Los Angeles, someone’s scrawled a playlist of songs in big letters. “That was my son,” he says. “He interned here this summer.” Johnson is a year into a romance with Taryn Southern, a charismatic 31-year-old performer and film producer. And since his break with Berger, Johnson has tripled Kernel’s staff—he’s up to 36 employees now—adding experts in fields like chip design and computational neuroscience. His new science adviser is Ed Boyden, the director of MIT’s Synthetic Neurobiology Group and a superstar in the neuroscience world. Down in the basement of the new office building, there’s a Dr. Frankenstein lab where scientists build prototypes and try them out on glass heads.

When the moment seems right, I bring up the purpose of my visit. “You said you had something to show me?”

Johnson hesitates. I’ve already promised not to reveal certain sensitive details, but now I have to promise again. Then he hands me two small plastic display cases. Inside, two pairs of delicate twisty wires rest on beds of foam rubber. They look scientific but also weirdly biological, like the antennae of some futuristic bug-bot.

I’m looking at the prototypes for Johnson’s brand-new neuromodulator. On one level, it’s just a much smaller version of the deep brain stimulators and other neuromodulators currently on the market. But unlike a typical stimulator, which just fires pulses of electricity, Johnson’s is designed to read the signals that neurons send to other neurons—and not just the 100 neurons the best of the current tools can harvest, but perhaps many more. That would be a huge advance in itself, but the implications are even bigger: With Johnson’s neuromodulator, scientists could collect brain data from thousands of patients, with the goal of writing precise codes to treat a variety of neurological diseases.

In the short term, Johnson hopes his neuromodulator will help him “optimize the gold rush” in neurotechnology—financial analysts are forecasting a $27 billion market for neural devices within six years, and countries around the world are committing billions to the escalating race to decode the brain. In the long term, Johnson believes his signal-reading neuromodulator will advance his bigger plans in two ways: (1) by giving neuroscientists a vast new trove of data they can use to decode the workings of the brain and (2) by generating the huge profits Kernel needs to launch a steady stream of innovative and profitable neural tools, keeping the company both solvent and plugged into every new neuroscience breakthrough. With those two achievements in place, Johnson can watch and wait until neuroscience reaches the level of sophistication he needs to jump-start human evolution with a mind-enhancing neuroprosthesis.

Liu, the neurologist with the Six Million Dollar Man dreams, compares Johnson’s ambition to flying. “Going back to Icarus, human beings have always wanted to fly. We don’t grow wings, so we build a plane. And very often these solutions will have even greater capabilities than the ones nature created—no bird ever flew to Mars.” But now that humanity is learning how to reengineer its own capabilities, we really can choose how we evolve. “We have to wrap our minds around that. It’s the most revolutionary thing in the world.”

The crucial ingredient is the profit motive, which always drives rapid innovation in science. That’s why Liu thinks Johnson could be the one to give us wings. “I’ve never met anyone with his urgency to take this to market,” he says.

When will this revolution arrive? “Sooner than you think,” Liu says.

Now we’re back where we began. Is Johnson a fool? Is he just wasting his time and fortune on a crazy dream? One thing is certain: Johnson will never stop trying to optimize the world. At the pristine modern house he rents in Venice Beach, he pours out idea after idea. He even takes skepticism as helpful information—when I tell him his magic neuroprosthesis sounds like another version of the Mormon heaven, he’s delighted.

“Good point! I love it!”

He never has enough data. He even tries to suck up mine. What are my goals? My regrets? My pleasures? My doubts?

Every so often, he pauses to examine my “constraint program.”

“One, you have this biological disposition of curiosity. You want data. And when you consume that data, you apply boundaries of meaning-making.”

“Are you trying to hack me?” I ask.

Not at all, he says. He just wants us to share our algorithms. “That’s the fun in life,” he says, “this endless unraveling of the puzzle. And I think, ‘What if we could make the data transfer rate a thousand times faster? What if my consciousness is only seeing a fraction of reality? What kind of stories would we tell?’ ”

In his free time, Johnson is writing a book about taking control of human evolution and looking on the bright side of our mutant humanoid future. He brings this up every time I talk to him. For a long time I lumped this in with his dreamy ideas about reprogramming the operating system of the world: The future is coming faster than anyone thinks, our glorious digital future is calling, the singularity is so damn near that we should be cheering already—a spiel that always makes me want to hit him with a copy of the Unabomber Manifesto.

But his urgency today sounds different, so I press him on it: “How would you respond to Ted Kaczynski’s fears? The argument that technology is a cancerlike development that’s going to eat itself?”

“I would say he’s potentially on the wrong side of history.”

“Yeah? What about climate change?”

“That’s why I feel so driven,” he answers. “We’re in a race against time.”

He asks me for my opinion. I tell him I think he’ll still be working on cyborg brainiacs when the starving hordes of a ravaged planet destroy his lab looking for food—and for the first time, he reveals the distress behind his hope. The truth is, he has the same fear. The world has gotten way too complex, he says. The financial system is shaky, the population is aging, robots want our jobs, artificial intelligence is catching up, and climate change is coming fast. “It just feels out of control,” he says.

He’s invoked these dystopian ideas before, but only as a prelude to his sales pitch. This time he’s closer to pleading. “Why wouldn’t we embrace our own self-directed evolution? Why wouldn’t we just do everything we can to adapt faster?”

I turn to a more cheerful topic. If he ever does make a neuroprosthesis to revolutionize how we use our brain, which superpower would he give us first? Telepathy? Group minds? Instant kung fu?

He answers without hesitation. Because our thinking is so constrained by the familiar, he says, we can’t imagine a new world that isn’t just another version of the world we know. But we have to imagine something far better than that. So he’d try to make us more creative—that would put a new frame on everything.

Ambition like that can take you a long way. It can drive you to try to reach the South Pole when everyone says it’s impossible. It can take you up Mount Kilimanjaro when you’re close to dying and help you build an $800 million company by the time you’re 36. And Johnson’s ambitions drive straight for the heart of humanity’s most ancient dream: For operating system, substitute enlightenment.

By hacking our brains, he wants to make us one with everything.

https://www.wired.com/story/inside-the-race-to-build-a-brain-machine-interface/?mbid=nl_111717_editorsnote_list1_p1
