By Richard Gray

Etched with strange pictograms, lines and wedge-shaped markings, they lay buried in the dusty desert earth of Iraq for thousands of years. The clay tablets left by the ancient Sumerians around 5,000 years ago provide what is thought to be the earliest written record of a long-dead people.

Although it took decades for archaeologists to decipher the mysterious language preserved on the slabs, the tablets have since provided glimpses of what life was like at the dawn of civilisation.

Similar tablets and carved stones have been unearthed at the sites of other mighty cultures that have long since vanished – from the hieroglyphs of the ancient Egyptians to the inscriptions of the Maya of Mesoamerica.

The stories and details they contain have stood the test of time, surviving through the millennia to be unearthed and deciphered by modern historians. But there are fears that future archaeologists may not benefit from the same sort of immutable record when they come to search for evidence of our own civilisation. We live in a digital world where information is stored as strings of electronic ones and zeros that can be edited, or even wiped clean, by a few accidental keystrokes. “Unfortunately we live in an age that will leave hardly any written traces,” explained Martin Kunze.

Kunze’s solution is the Memory of Mankind project, a collaboration between academics, universities, newspapers and libraries to create a modern version of those ancient Sumerian tablets discovered in the desert. The plan is to gather together the accumulated knowledge of our time and store it underground, in caverns carved out of one of the oldest salt mines in the world, in the mountains of Austria’s picturesque Salzkammergut. “The main point of what we are doing is to store information in a way that it is readable in the future. It is a backup of our knowledge, our history and our stories,” says Kunze.

Creating a stone “time capsule” may seem archaic in an age when much of our knowledge floats around in the internet cloud, but a slide back into a technological dark age is not unthinkable. The internet has given people more information at their fingertips than at any previous point in human history. Yet the huge repositories of knowledge we have built up are perilously vulnerable.

Ever more information is being stored digitally on remote computer servers and hard disks. How many of us, for example, have hard copies of the photographs we took on our last holiday?

The situation gets more serious when we consider scientific papers that are now published solely online. Entire catalogues of video footage from news broadcasters, television and film are stored digitally. Official documents and government papers reside in digital libraries.

Yet earlier this year, a conference of space weather scientists, together with officials from Nasa and the US Government, warned of the fragile nature of all this digital information. Charged particles thrown out by the Sun in a powerful solar storm could trigger electromagnetic surges that render our electronic devices useless and wipe data stored in memory drives.

Such storms are a real threat, and they recur relatively regularly. A report produced by the British Government last year highlighted that severe solar storms appear to strike roughly once every 100 years.

The most powerful coronal mass ejection on record to hit the Earth, known as the Carrington event, struck in 1859 and is thought to have been the biggest in 500 years. It knocked out telegraph systems all over the world, and telegraph pylons threw sparks. In the age of the internet, such an event would be catastrophic.

But there are other threats too – malicious hackers or even careless officials could tamper with these digital records or delete them altogether. And what if we simply lose the ability to read this information? Technology is changing so fast that media formats are quickly rendered obsolete. MiniDiscs, VHS tapes and the humble floppy disk have all become outdated within a few decades.

Few computers even come with DVD drives now, while giving the current generation of teenagers a floppy disk would leave them flummoxed. If information is stored on one of these formats and the technology needed to access it disappears completely, then it could be lost forever.

Hence the desire to keep hard copies of our most important documents. Unfortunately, even more traditional forms of storage are unlikely to keep information safe for more than a few centuries. While some paper manuscripts have survived for hundreds of years – and in the case of papyrus scrolls, for thousands – most disintegrate to dust after a couple of hundred years unless they are stored in the right conditions. Newsprint can decompose within six weeks if it gets wet.

“It is very likely that in the long term the only traces of our present activities will be global warming, nuclear waste and Red Bull cans,” says Kunze. “The amount of data is inflating rapidly, so the real challenge becomes selecting what we want to keep for our grandchildren and those that come after them.”

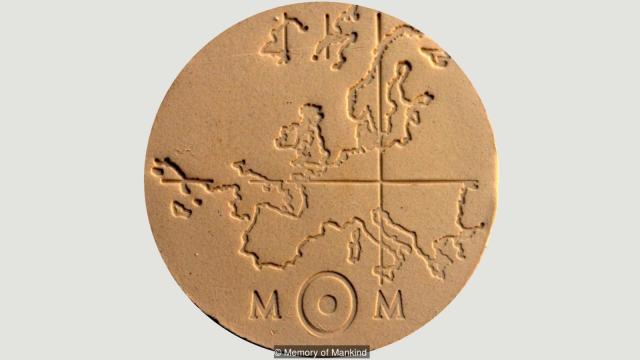

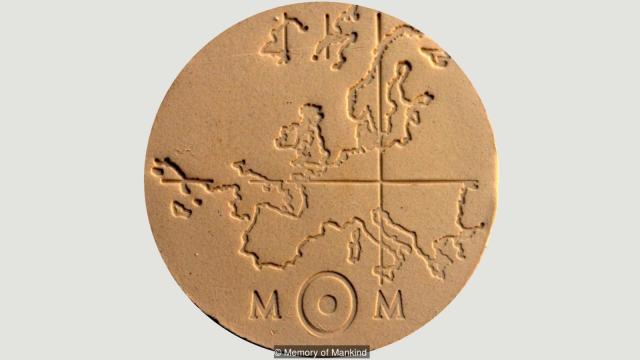

This is why Kunze and his colleagues are looking further back in time for inspiration, to those Sumerian clay tablets. The Memory of Mankind team hopes to create an indelible record of our way of life by imprinting official documents, details about our culture, scientific papers, biographies, popular novels, news stories and even images onto square ceramic plates measuring eight inches (20cm) across.

This hinges on a special process that Kunze describes as “ceramic microfilm”, which he says is the most durable data storage system in the world. The flat ceramic plates are covered with a dark coating, and a high-energy laser is then used to write into it.

Each of these tablets can hold up to five million characters – about the same as a four-hundred-page book. They are acid- and alkali-resistant and can withstand temperatures of 1300C. A second type of tablet can carry colour pictures and diagrams along with 50,000 characters before being sealed with a transparent glaze.

The plates are then stacked inside ceramic boxes and tucked into the dark caverns of a salt mine in Hallstatt, Austria. As a resting place for what could be described as the ultimate time capsule, it is impressive. In the right light the walls still glisten with the remnants of salt, which extracts moisture and desiccates the air.

The salt itself has a Plasticine-like property that helps to seal fractures and cracks, keeping the tomb watertight. Buried beneath millions of tonnes of rock, the records will be able to survive for millennia and perhaps even entire ice ages, Kunze believes.

In some distant future, after our own civilisation has vanished, they could prove invaluable to whoever finds them. They could help resurrect forgotten knowledge for cultures less advanced than our own, or provide a wealth of historical information for more advanced civilisations, so that our achievements, and our mistakes, can be learned from.

But the archive could have value in the shorter term too.

“We are trying to create something that will not only be a collection of information for a distant future, but it will also be a gift for our grandchildren,” says Kunze. “Memory of Mankind can serve as a backup of knowledge in case of an event like war, a pandemic or a meteorite that throws us back centuries within two or three generations. A society can lose skills and knowledge very quickly – in the 6th Century, Europe largely lost the ability to read and write within three generations.”

The Memory of Mankind archive already offers an eclectic glimpse of our society. Among the information etched into the ceramic plates are books summarising the history of individual countries around the world. Towns and villages have also opted to include their own local histories. A thousand of the world’s most important books – chosen by combining published lists using an algorithm developed by the University of Vienna – will be cut into the coating on the ceramic plates.

Museums are including images of precious objects in their collections along with descriptions of what we have learned about them. The Krumau Madonna – a sculpture dating to the late 14th Century now held in the Museum of Art History in Vienna – is already there, along with paintings by the Baroque artists Peter Paul Rubens and Anthony van Dyck.

There are plates featuring pictures of fossils – dinosaurs, prehistoric fish and extinct ammonites – alongside a description of what we know about them. Even our current understanding of our own origins is included, with pictures of one of the earliest examples of sculpture ever found, the Venus of Willendorf.

Much of the material included on the tablets is in German, but there are tablets in English, French and other languages.

A handful of celebrities have also found themselves immortalised in the salt-lined vaults. Baywatch star and singer David Hasselhoff has a particularly lengthy entry, as does German singer Nena, who had a hit with 99 Red Balloons in the 1980s. Nestled among them is a plate detailing the story of Edward Snowden and his leak of classified material from the US National Security Agency.

The University of Vienna has been placing prize-winning PhD dissertations and scientific papers onto the tablets. Included in the archive are plates describing genetic modification and bioengineering patents, explaining what today’s scientists have achieved and how they managed it.

And alongside research, everyday objects like washing machines, smartphones and televisions are also being documented as a record of what life is like today.

The plates also serve as a warning, pinpointing the sites of nuclear waste dumps so that future generations might know to avoid them, or to clean them up if they have the technology. Newspapers have been asked to send their daily editorials to provide a repository of opinions as well as facts.

In many ways, the real problem is what not to include. “We probably have about 0.1% of the antique literature, yet in the modern world publishing is as easy as posting something on the internet or sending a tweet,” explains Kunze. “Publications about science, space flight and medicine – the things we really spend money on – drown in the mass of data we produce. The Large Hadron Collider produces something like 30 petabytes of data a year, but this is equal to just 0.003% of annual internet traffic. A random fragment of 0.1% of our present-day data will result in a very distorted view of our time.”

To tackle this, Kunze and his colleagues are organising a conference in November next year to bring scientists, historians, archaeologists, linguists and philosophers together to create a blueprint for selecting content for the project. The team also hopes to immortalise glimpses of mundane, everyday life by encouraging members of the public to create tablets of their own. “We are saving cooking recipes and stories of love and personal events,” adds Kunze. “On one plate, a little girl has included three photographs of her confirmation and written a short bit of text about it. They give a glimpse of everyday life that will be very valuable.”

Preserved tweets

Memory of Mankind is not the only project to face the daunting task of preserving humanity’s accumulated knowledge. Librarians around the world are also looking at the knotty problem of how to save the information from the modern age.

The University of California, Los Angeles (UCLA), for instance, is archiving tweets related to major events and preserving them in its own archives. “We are collecting tweets from Cairo on the day of the January 25th revolution for example,” explained Todd Grappone, associate university librarian. “We are then translating them into multiple languages and saving them in file formats that are likely to be robust for the future. We are only doing it digitally at the moment as we have something like 1,000 cellphone videos from that event alone, but the value of that is enormous.”

Another project, called the Human Document Project, aims to record information on wafers made of tungsten and silicon nitride. So far the team has been etching them with dozens of tiny QR codes – a type of two-dimensional barcode – which can be read using smartphones, but they say the final disks will hold information written in a form that can be read using a microscope.

Leon Abelmann, a researcher at the University of Twente in Enschede, the Netherlands, is one of the driving forces behind the project. He says the team hopes to produce something that will survive for one million years, and that it is now starting to collaborate with Memory of Mankind. “We would be really happy if we found information left for us by an intelligence that has already been extinct for a million years,” he said. “So we think future intelligent beings will be too. The mere fact that we need to take a helicopter view of ourselves will hopefully make us realise that the differences between us are trivial.”

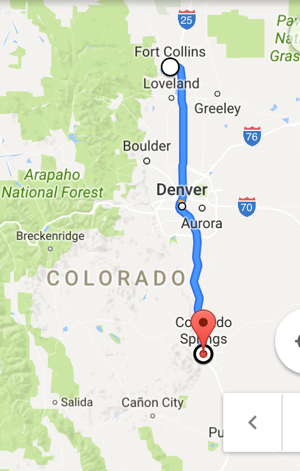

Buried as they are under a mountain, these tablets might seem unlikely ever to be found by future generations. For this reason, Memory of Mankind has engraved some small tokens with a map pinpointing the archive’s location, which it will then bury at strategic places around the world. Other tokens are being entrusted to 50 holders who will pass them on to the next generation.

To ensure that those who do find it can actually read what is in there, the Memory of Mankind team has been creating its own Rosetta Stone: thousands of images labelled with their names and meanings.

All of which hints at the scale of what they are trying to do. The individuals who unearth this goldmine of knowledge could be very different from us. In a few thousand years civilisation may have advanced beyond our reckoning or descended back into a dark age. Perhaps it will not even be humans who end up uncovering our memories. “We could be looking at some other form of intelligent life,” adds Kunze.

We will never know what those future archaeologists will make of our civilisation when they wipe the dust from the tablets in thousands of years’ time, but we can hope that, like the ancient Sumerians, we will not be forgotten.

http://www.bbc.com/future/story/20161018-the-worlds-knowledge-is-being-buried-in-a-salt-mine

Thanks to Kebmodee for bringing this to the It’s Interesting community.